Capability Gating, Audit Trails, and Human-in-the-Loop Control Planes

In the last year, the AI agent conversation has been dominated by model capability: better reasoning, better tool use, better planning, better memory. That framing is incomplete. Once an agent is allowed to act on a system rather than merely answer questions, the central design problem is no longer just what the model can infer. It is what the runtime allows, what the surrounding system records, and where policy is enforced.

That is why the recent push toward “local” or enterprise-resident agent systems matters more than the usual cloud-versus-local marketing suggests. In March, Axios reported on Perplexity’s move toward a Mac-based agent form factor for enterprise use. The interesting part is not the device branding by itself. The interesting part is the systems pattern underneath it: placing the execution environment, the policy layer, and the approval surface much closer to the data and the tools being used.

This changes agent architecture in a fairly deep way. A conventional cloud agent is usually designed around remote orchestration: the model lives in the cloud, tool calls are brokered through APIs, and enterprise data is fetched into that remote loop as needed. A secure local AI computer, by contrast, can be designed around controlled proximity. The model or orchestrator may still rely on remote services, but the sensitive context, capability boundaries, logging, and approval logic can live near the machine that actually executes work. That shift changes what can be trusted, what can be observed, and what can be safely automated.

The headline claim is simple: secure local AI computers do not merely make agents more private. They make it possible to build a different control architecture for high-autonomy systems.

The cloud-agent pattern optimized for reach, not containment

The default cloud-agent pattern has a recognizable shape. A user sends a task to a hosted agent. The model decomposes the task, calls tools through a broker, retrieves documents or application state over APIs, and returns actions or artifacts. Even when the system is well-built, most of the control logic sits in a centralized orchestration layer. Permissions are often expressed as API credentials, integration scopes, or per-tenant policy objects stored remotely. Logs are captured centrally. Review workflows are usually added as application-layer checkpoints around important tool calls.

This architecture is attractive because it scales operationally. One hosted control plane can serve many users, many connectors, and many models. It also fits how software teams already think about SaaS: central deployment, uniform updates, aggregated telemetry, and one place to impose policy.

But for high-autonomy agents, the pattern has structural weaknesses. The first is that the agent often needs sensitive local context to be useful: files, clipboard history, browser state, enterprise desktop applications, locally cached credentials, device identity, network topology, and user workflow traces that never cleanly exist in a cloud API. The second is that once the agent reaches into those systems from afar, the distance between decision and execution becomes a liability. Every time context is copied upward into a remote reasoning loop, every time an action is routed through a broad integration token, and every time logs are reconstructed after the fact, the system becomes harder to contain.

This is not just a privacy objection. It is a control objection. Cloud agents tend to concentrate intelligence and authority in the same place. The more capable the hosted orchestrator becomes, the more tempting it is to give it wide, reusable permissions and to let the rest of the system trust that the model will behave. That is a poor trust model for autonomous execution.

Secure local AI computers move the trust boundary

A secure local AI computer changes the question from “How smart is the agent?” to “Where does authority actually live?” In a traditional mental model, the model is the main locus of trust: if the model is aligned enough, prompt-guarded enough, and monitored enough, then tool use is considered manageable. In a local-first architecture, trust shifts away from model behavior alone and toward runtime design.

That shift matters because models are probabilistic decision engines, not security boundaries. They can be instructed, steered, evaluated, and constrained, but they should not be treated as the final line of defense. Security has to come from the execution substrate: capability gating, sandboxing, approval routing, network controls, and traceable event logs that survive model error.

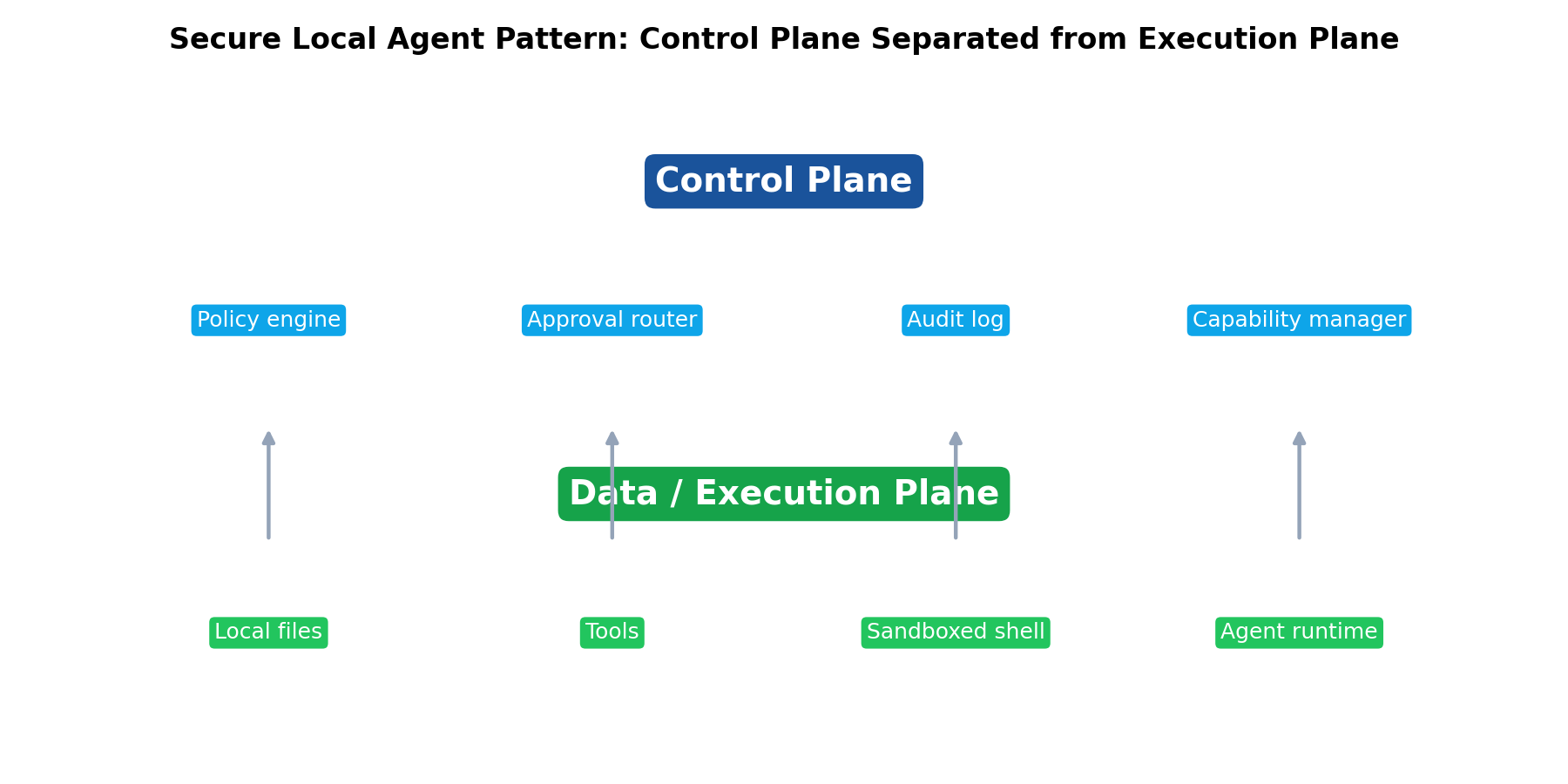

A secure local agent system therefore looks more like an operating environment than a chatbot with plugins. The local machine becomes the data plane where actions happen and sensitive context resides. A separate control plane decides what classes of actions are allowed, under what scopes, under what approvals, and with what level of observability. The model may propose actions, but the runtime mediates them.

That is the real architectural difference. Locality by itself is not the point. Proximity plus mediation is the point.

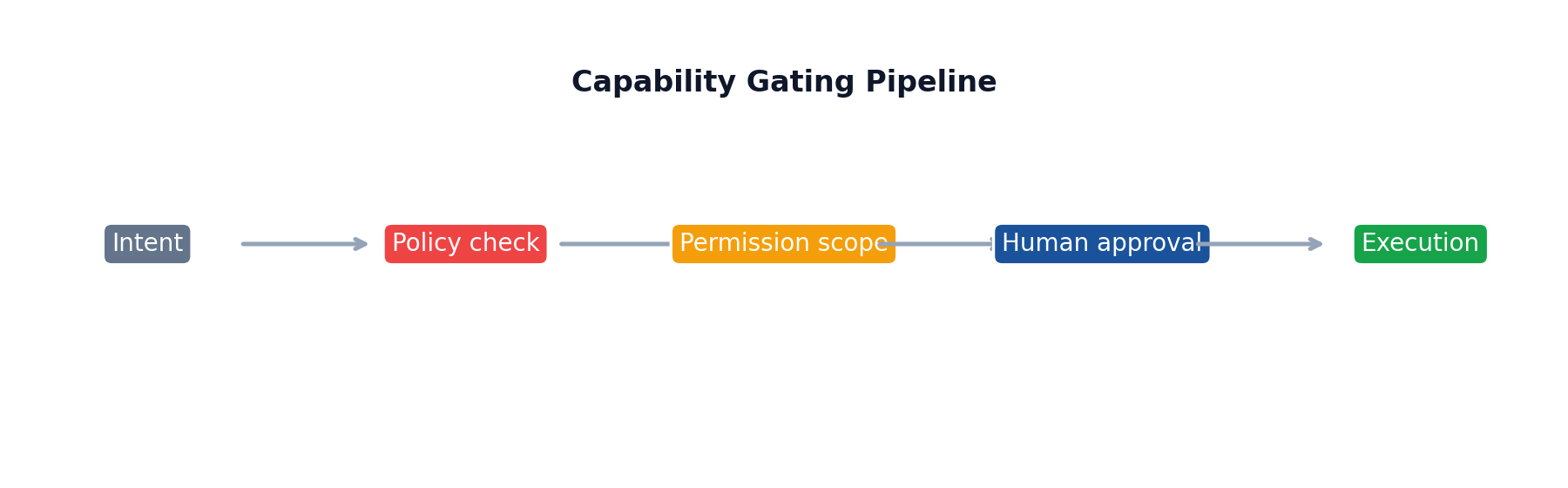

Capability gating becomes the first-class primitive

In a safe agent system, the core unit is not “tool available or unavailable.” It is a capability with explicit scope, duration, and context. A local runtime can enforce this far more precisely than a generic cloud broker.

Consider a simple example: “summarize the latest contract revision and send a redline to legal.” A naïve cloud agent might need wide access to a drive, email, document system, and perhaps desktop files. A secure local AI computer can instead grant a much narrower capability token for this session only: read access to one folder, write access to one output directory, no arbitrary network upload, and a pending-approval route for external email transmission. The model never receives a blanket “can use email” authority. It receives a mediated capability graph.
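The contract-redline grant above can be sketched as a small data structure plus a default-deny decision function. This is a minimal illustration that assumes a POSIX-style filesystem. `CapabilityGrant`, `decide`, and the action names are hypothetical and are not part of any product's API. Path normalization blocks `../` escapes but does not resolve symlinks, which a real runtime would also have to handle.

```python
import posixpath
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class CapabilityGrant:
    """Hypothetical session-scoped grant; field names are illustrative only."""
    session_id: str
    read_prefixes: tuple[str, ...]        # directories the session may read
    write_prefixes: tuple[str, ...]       # directories the session may write
    network_upload: bool                  # arbitrary outbound upload allowed?
    approval_required: frozenset[str]     # actions that must wait for a human
    expires_at: float                     # Unix timestamp; grant is dead after this


def _under(path: str, prefixes: tuple[str, ...]) -> bool:
    # Normalize first so "../" segments cannot climb out of an allowed folder.
    p = PurePosixPath(posixpath.normpath(path))
    return any(p == PurePosixPath(pre) or PurePosixPath(pre) in p.parents
               for pre in prefixes)


def decide(grant: CapabilityGrant, action: str, target: str, now: float) -> str:
    """Return 'allow', 'deny', or 'pending_approval' for one requested action."""
    if now >= grant.expires_at:
        return "deny"
    if action in grant.approval_required:
        return "pending_approval"
    if action == "read":
        return "allow" if _under(target, grant.read_prefixes) else "deny"
    if action == "write":
        return "allow" if _under(target, grant.write_prefixes) else "deny"
    if action == "upload":
        return "allow" if grant.network_upload else "deny"
    return "deny"  # default-deny anything the grant does not name
```

With `read_prefixes=("/contracts/acme",)`, `write_prefixes=("/out",)`, `network_upload=False`, and `approval_required={"send_email"}`, a read of `/contracts/acme/rev3.docx` is allowed, a read of `/contracts/acme/../../etc/passwd` is denied, and a `send_email` request is parked in `pending_approval` rather than executed.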

This is where scoped tool permissions become more than application UX. They become the grammar of the runtime. The system can express constraints such as read-only file access, write-only access to a staging directory, browser automation limited to approved domains, shell execution inside a container with a temporary filesystem, or database access restricted to parameterized queries against one schema. These are not prompt instructions. They are enforcement boundaries.
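Two of those boundaries are easy to sketch: a browser navigation check limited to approved domains, and a database broker that runs only pre-registered parameterized queries. The domain list, the `contracts` table, and the template name are hypothetical placeholders. The suffix match deliberately requires a leading dot, so `evil-example.com` does not pass as `example.com`.

```python
import sqlite3
from urllib.parse import urlsplit

# Hypothetical policy values; a real runtime would load these from its control plane.
APPROVED_DOMAINS = frozenset({"example.com", "docs.example.org"})
QUERY_TEMPLATES = {
    "contract_by_id": "SELECT id, title FROM contracts WHERE id = ?",
}


def browser_navigation_allowed(url: str) -> bool:
    """Allow HTTPS navigation only to approved domains and their subdomains."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").rstrip(".")  # .hostname is already lowercased
    return any(host == d or host.endswith("." + d) for d in APPROVED_DOMAINS)


def run_template(conn: sqlite3.Connection, name: str, params: tuple) -> list:
    """Execute a pre-registered, parameterized query; raw SQL is never accepted."""
    sql = QUERY_TEMPLATES.get(name)
    if sql is None:
        raise PermissionError(f"query template {name!r} is not granted")
    return conn.execute(sql, params).fetchall()
```

In this design, the model selects a template name and supplies parameter values. It never composes SQL text, so a prompt injection has no query string to splice into.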

Once capability gating is local, it can also become more context-sensitive. The runtime can inspect device state, user presence, current network conditions, classification labels on files, and enterprise policy before granting a tool invocation. A model may request “open CRM records and compose reply,” but the local policy engine can decide that customer export is prohibited on unmanaged networks, that access to certain accounts requires step-up approval, or that copying records into the model context exceeds data minimization rules.
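A context-sensitive check of this kind might look like the following sketch. The rule set, the threshold, and the field names (`managed_network`, `account_tier`, the `"restricted"` label) are assumptions chosen to mirror the CRM example. They are not a reference policy language.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"   # needs an additional human approval
    DENY = "deny"


@dataclass(frozen=True)
class DeviceContext:
    managed_network: bool
    user_present: bool


@dataclass(frozen=True)
class ToolRequest:
    action: str               # e.g. "crm.read", "crm.export" (hypothetical names)
    account_tier: str         # e.g. "standard", "strategic"
    record_count: int         # records that would be copied into model context
    labels: frozenset[str]    # classification labels on the data touched


MAX_RECORDS_IN_CONTEXT = 50   # hypothetical data-minimization threshold


def evaluate(req: ToolRequest, ctx: DeviceContext) -> tuple[Verdict, str]:
    # Hard denials come first so no approver is asked to override them.
    if req.action == "crm.export" and not ctx.managed_network:
        return Verdict.DENY, "customer export prohibited on unmanaged networks"
    if "restricted" in req.labels:
        return Verdict.DENY, "restricted data may not enter model context"
    if req.record_count > MAX_RECORDS_IN_CONTEXT:
        return Verdict.DENY, "exceeds data-minimization limit"
    # Escalations: sensitive accounts, or nobody at the device to vouch for it.
    if req.account_tier == "strategic" or not ctx.user_present:
        return Verdict.STEP_UP, "step-up approval required"
    return Verdict.ALLOW, "within policy"
```

Ordering matters here: hard denials are evaluated before step-up rules, so a human is never asked to wave through an action that policy forbids outright.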

This is a fundamentally stronger pattern than hoping a remote orchestrator remembers a rule embedded three abstraction layers higher.

Execution boundaries become concrete, not rhetorical

Agent demos often blur planning and acting. In real systems, those need separation. Secure local AI computers make that separation easier to enforce because execution can happen inside hardened local boundaries rather than through one broad, shared agent process.

Sandboxing is the obvious example. If an agent needs shell access, code execution, document parsing, or browser automation, those operations can run in isolated sandboxes with tightly controlled filesystems, ephemeral credentials, and outbound network restrictions. A local machine can spawn these sandboxes near the user’s environment while still keeping them compartmentalized from the rest of the device.
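As a deliberately limited sketch, the function below shows the workspace pattern. Inputs are mounted into a throwaway directory, the child process gets a scrubbed environment and a timeout, and only newly created files come back out. It assumes a POSIX host, and `run_in_workspace` is a hypothetical helper. It is not a sandbox on its own. Real isolation would wrap the same interface in container or namespace boundaries, restricted mounts, and network egress rules.

```python
import os
import subprocess
import sys
import tempfile


def run_in_workspace(argv: list[str], inputs: dict[str, bytes],
                     timeout_s: float = 10.0) -> tuple[int, bytes, dict[str, bytes]]:
    """Run argv inside a throwaway directory with a scrubbed environment.

    This only narrows the working directory, environment, and run time. It is
    NOT OS-level isolation: the child can still read other paths and open
    sockets unless the host adds container, namespace, or firewall controls.
    """
    with tempfile.TemporaryDirectory(prefix="agent-ws-") as ws:
        for name, data in inputs.items():
            if name in ("", ".", "..") or os.path.basename(name) != name:
                raise ValueError(f"input must be a bare filename: {name!r}")
            with open(os.path.join(ws, name), "wb") as f:
                f.write(data)
        # No inherited variables, so no ambient API tokens or cloud credentials.
        env = {"PATH": "/usr/bin:/bin", "HOME": ws}
        result = subprocess.run(argv, cwd=ws, env=env, capture_output=True,
                                timeout=timeout_s, check=False)
        # Collect only new top-level files: the sole declared output channel.
        outputs = {}
        for name in os.listdir(ws):
            path = os.path.join(ws, name)
            if name not in inputs and os.path.isfile(path):
                with open(path, "rb") as f:
                    outputs[name] = f.read()
        return result.returncode, result.stdout, outputs


if __name__ == "__main__":
    script = ("import os, pathlib; "
              "pathlib.Path('summary.txt').write_text(pathlib.Path('in.txt').read_text().upper()); "
              "print(os.environ.get('AWS_SECRET_ACCESS_KEY'))")
    print(run_in_workspace([sys.executable, "-c", script], {"in.txt": b"hello"}))
```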

This matters for two reasons. First, it contains mistakes. If the model generates a destructive shell command or misuses a tool, the blast radius is limited to the sandbox’s declared scope. Second, it contains data. Sensitive inputs do not have to be copied to a distant orchestration service just to perform a local transformation. They can be mounted into a bounded workspace, processed there, and either retained locally or emitted only through approved channels.

The architectural implication is that tool execution stops being a monolithic capability. Instead, each action happens in a bounded execution domain with its own identity, policy, and audit trail. File manipulation may occur in one sandbox. Browser automation may occur in another. Enterprise connector access may be proxied through a local policy daemon. The model sees a unified tool interface, but underneath it the system is segmented.
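A unified tool interface over segmented domains can be sketched as a router that maps each tool to exactly one execution domain and records which domain identity handled each call. `ExecutionDomain`, `ToolRouter`, and the service identities are hypothetical. The runners are stand-in callables where a real system would dispatch into separate sandboxes or a local policy daemon.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ExecutionDomain:
    name: str                               # e.g. "fs-sandbox", "browser-sandbox"
    identity: str                           # hypothetical per-domain service identity
    allowed_tools: frozenset[str]
    runner: Callable[[str, dict], Any]      # executes a tool inside this domain


class ToolRouter:
    """One interface for the model; each call lands in exactly one domain."""

    def __init__(self, domains: list[ExecutionDomain]):
        self._by_tool: dict[str, ExecutionDomain] = {}
        for d in domains:
            for tool in d.allowed_tools:
                if tool in self._by_tool:
                    raise ValueError(f"tool {tool!r} mapped to two domains")
                self._by_tool[tool] = d
        self.audit: list[tuple[str, str]] = []   # (domain identity, tool)

    def call(self, tool: str, args: dict) -> Any:
        domain = self._by_tool.get(tool)
        if domain is None:
            raise PermissionError(f"no execution domain grants {tool!r}")
        self.audit.append((domain.identity, tool))
        return domain.runner(tool, args)
```

Rejecting a tool that maps to two domains keeps the segmentation unambiguous: every action has a single identity and policy that answer for it.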

That segmentation is what makes high-autonomy operation survivable. Without it, one prompt-injected browser session or one over-scoped credential effectively turns the entire agent into a lateral movement surface.

Audit trails stop being an afterthought

If a system is allowed to act, it must be able to explain itself after the fact. In cloud-agent products, logging is often implemented as developer observability: request traces, tool call logs, model outputs, perhaps some evaluation metadata. Useful, but not sufficient.

A secure local AI computer invites a more rigorous event model. The right question is not merely “what did the model say?” but “what capability was requested, what policy evaluated it, what approval state applied, what exact action executed, what data crossed a boundary, and who can reconstruct that timeline later?”

That is where event logging and traceability become architectural, not operational. Every meaningful transition can be recorded as a structured event: model proposal, tool request, capability grant, policy denial, human approval, sandbox launch, filesystem write, network egress, external message dispatch, and final artifact hash. In other words, the audit trail follows the action lifecycle, not just the conversation lifecycle.
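One way to make such a trail tamper-evident is a hash chain, where each structured event commits to the hash of the previous one. The sketch below assumes event fields are JSON-serializable and uses only the standard library. The event kinds come from the list above, and `EventLog` is a hypothetical class. A chain detects edits only if its latest hash is also anchored somewhere the agent cannot write, such as a separate log host.

```python
import hashlib
import json
import time


class EventLog:
    """Append-only log in which each record commits to the previous record's hash."""
    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.records: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, kind: str, **fields) -> dict:
        prev = self.records[-1]["hash"] if self.records else self.GENESIS
        body = {"seq": len(self.records), "ts": time.time(),
                "kind": kind, "prev": prev, "fields": fields}
        record = dict(body, hash=self._digest(body))
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; any edited, dropped, or reordered record fails."""
        prev = self.GENESIS
        for i, rec in enumerate(self.records):
            body = {k: v for k, v in rec.items() if k != "hash"}
            if rec["seq"] != i or rec["prev"] != prev or self._digest(body) != rec["hash"]:
                return False
            prev = rec["hash"]
        return True
```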

Local placement improves this in two ways. First, the runtime can observe things that cloud systems usually abstract away: OS-level process launches, local file reads, clipboard access, USB device interactions, and policy decisions bound to machine state. Second, the logs themselves can remain within enterprise control boundaries, which is crucial when the trace contains sensitive context or incident evidence.

This is the difference between an agent that is merely observable and an agent that is accountable. Observability helps you debug. Accountability helps you govern.

Human-in-the-loop control planes become practical instead of ceremonial

Many agent systems claim human oversight, but in practice the human is often presented with a binary prompt at the wrong moment: “Allow this action?” That is not a control plane. It is an interruption.

A secure local runtime makes a more disciplined approval architecture possible because approval routing can be attached to capability classes rather than bolted onto random tool calls. The control plane can define policies such as: low-risk read actions execute automatically; internal writes require local user presence; financial transactions route to a designated approver; external communications require recipient-specific confirmation; bulk export actions require dual approval; and high-risk operations trigger a pause plus a machine-readable justification bundle.
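Attaching approval routes to capability classes reduces to a lookup table with a fail-closed default. The class names and `Route` values below are hypothetical labels for the policies just listed.

```python
from enum import Enum


class Route(Enum):
    AUTO = "auto"
    LOCAL_PRESENCE = "local_user_presence"
    DESIGNATED_APPROVER = "designated_approver"
    RECIPIENT_CONFIRM = "recipient_specific_confirmation"
    DUAL_APPROVAL = "dual_approval"
    PAUSE_WITH_JUSTIFICATION = "pause_with_justification_bundle"


# Hypothetical capability classes mirroring the policy list in the text.
APPROVAL_POLICY = {
    "read.low_risk": Route.AUTO,
    "write.internal": Route.LOCAL_PRESENCE,
    "finance.transaction": Route.DESIGNATED_APPROVER,
    "comms.external": Route.RECIPIENT_CONFIRM,
    "data.bulk_export": Route.DUAL_APPROVAL,
    "ops.high_risk": Route.PAUSE_WITH_JUSTIFICATION,
}


def route_for(capability_class: str) -> Route:
    # Unknown classes fail closed into the most conservative route.
    return APPROVAL_POLICY.get(capability_class, Route.PAUSE_WITH_JUSTIFICATION)


def approvals_needed(route: Route) -> int:
    """Number of distinct human sign-offs required before the action may run."""
    if route is Route.AUTO:
        return 0
    if route is Route.DUAL_APPROVAL:
        return 2
    return 1
```

Because unclassified capabilities fall through to the most conservative route, registering a new tool without classifying it produces a pause rather than silent auto-execution.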

The important systems idea here is separation between control plane and data plane. The data plane is where the agent actually reads, writes, executes, and communicates. The control plane is where policies, approvals, escalation rules, identities, and audit state live. In a secure local AI computer, these planes can be split even if they run on the same physical machine. The model may produce a plan in the data plane, but it cannot self-authorize crossing a policy threshold in the control plane.

This reduces a classic failure mode of autonomous agents: self-escalation through persuasive reasoning. The model can recommend why a step is necessary. It should not be able to mint the authority to do it.
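The principle that the model can recommend a step but cannot mint the authority for it can be sketched with approval tickets that only the control plane can sign. This example uses HMAC from the Python standard library, so verification also stays inside the control plane. If the executor had to verify tickets independently, asymmetric signatures would be the more likely choice, because they let it check tickets without being able to issue them. `ControlPlane`, `approve`, and `verify` are hypothetical names.

```python
import hashlib
import hmac
import json
import secrets
import time


class ControlPlane:
    """Holds the signing key; only it can mint approval tickets."""

    def __init__(self) -> None:
        self._key = secrets.token_bytes(32)  # never exposed to the data plane

    def approve(self, approver: str, action: str, target: str,
                ttl_s: float = 300) -> tuple[bytes, str]:
        # Called only after a human decision has been recorded.
        claims = {"approver": approver, "action": action, "target": target,
                  "exp": time.time() + ttl_s}
        payload = json.dumps(claims, sort_keys=True).encode()
        tag = hmac.new(self._key, payload, hashlib.sha256).hexdigest()
        return payload, tag

    def verify(self, ticket: tuple[bytes, str], action: str, target: str) -> bool:
        payload, tag = ticket
        expected = hmac.new(self._key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, tag):
            return False  # forged or altered ticket
        claims = json.loads(payload)
        # A ticket authorizes one action on one target, and only until it expires.
        return (claims["action"] == action and claims["target"] == target
                and claims["exp"] > time.time())
```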

Local deployment improves the ergonomics of human review as well. Approval can be routed to the user currently at the device, to an on-premises enterprise dashboard, or to a secure local notification surface with access to the relevant context. That makes approvals faster and more specific, while reducing the amount of sensitive information that has to be sent outward merely to ask for consent.

Local context access changes usefulness, but also changes exfiltration risk

One reason local agents are compelling is obvious: they can access the messy, high-signal context that makes real work possible. That includes local documents, app state, desktop history, cache layers, and environmental details that rarely make it into tidy APIs. For many enterprise workflows, this is the difference between a toy assistant and an actually useful one.

But that same proximity creates a dangerous illusion. Local access does not automatically mean safe access. A model that can read rich local context can also over-collect it, leak it through generated outputs, or route it through over-broad tools unless the runtime is disciplined.

That is why policy enforcement near the execution environment matters. The local system can inspect data before it leaves the device, apply classification and redaction rules, block network egress to unapproved destinations, and force sensitive artifacts through escrow or review queues. In other words, the system can treat exfiltration as a runtime event, not just a privacy policy violation.
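A last-mile egress gate can combine the three checks named above: destination allowlisting, classification-based diversion to review, and redaction. The host list, labels, and regular expressions are illustrative assumptions. Pattern-based redaction like this is a crude stand-in for a real data-loss-prevention engine.

```python
import re
from urllib.parse import urlsplit

# Hypothetical policy values.
APPROVED_EGRESS_HOSTS = frozenset({"api.corp.example"})
REDACTION_RULES = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[REDACTED-SSN]"),
    (re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"), "[REDACTED-EMAIL]"),
]


class EgressBlocked(Exception):
    """Raised when data may not leave the device through this channel."""


def prepare_egress(url: str, payload: str, labels: set[str]) -> str:
    host = (urlsplit(url).hostname or "").lower()
    if host not in APPROVED_EGRESS_HOSTS:
        raise EgressBlocked(f"destination {host!r} is not approved")
    if "confidential" in labels:
        # Divert to an escrow or review queue instead of sending.
        raise EgressBlocked("confidential artifact must go through review")
    for pattern, replacement in REDACTION_RULES:
        payload = pattern.sub(replacement, payload)
    return payload
```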

This is one of the clearest architectural advantages of secure local AI computers. They can combine local-first access with local-first containment. The same machine that sees the sensitive data can enforce the last-mile rules around where that data may go.

Cloud systems can attempt this too, but they often start at a disadvantage: by the time a policy engine evaluates the data, it has already been copied into the cloud control loop. Once the sensitive context has left its original boundary, containment is already partly lost.

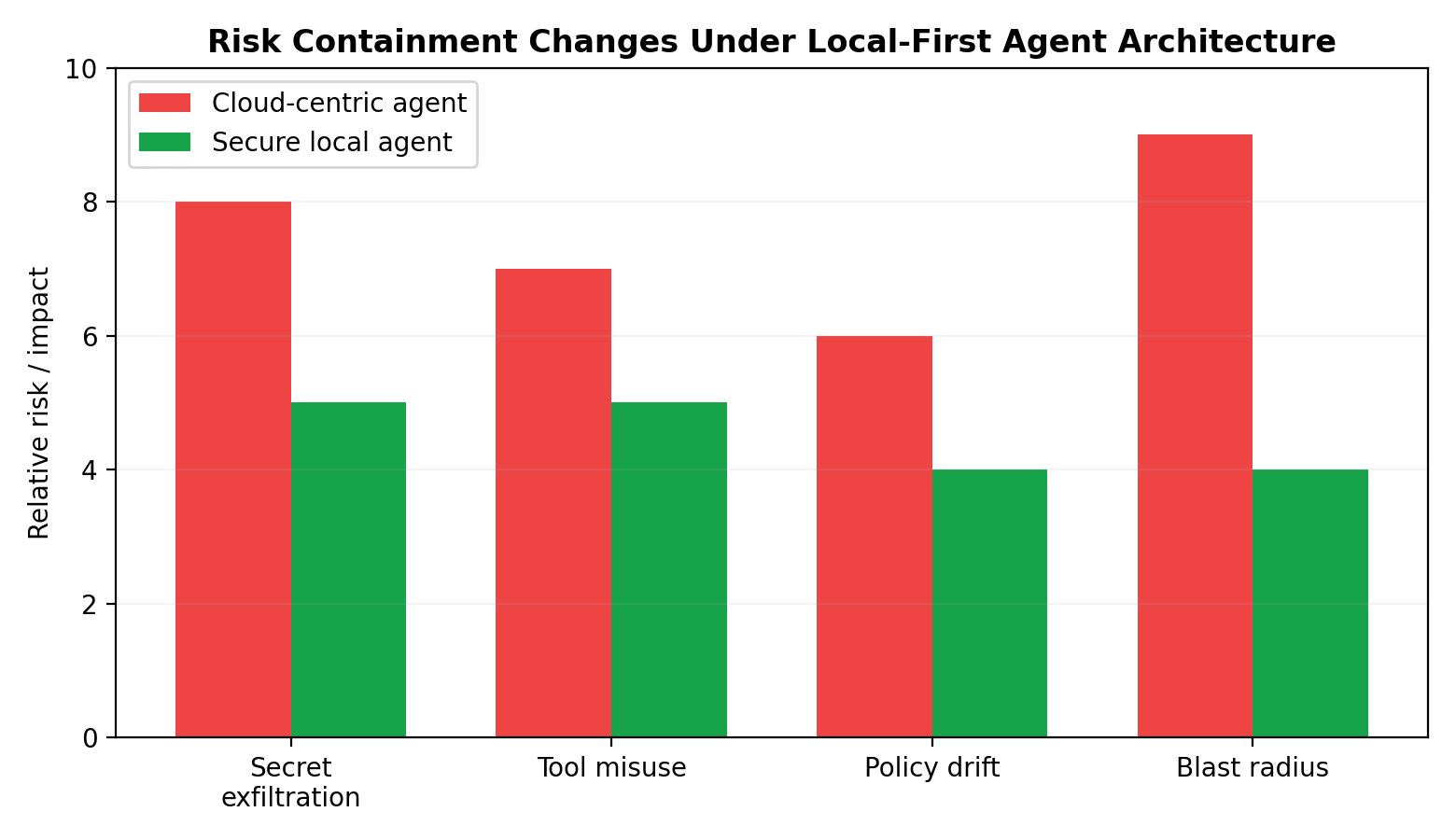

Cloud-agent pattern versus secure-local-agent pattern

The cleanest way to see the difference is to compare the two architectures at the level of failure handling.

In the cloud-agent pattern, usefulness comes from central intelligence and broad integration. Context is pulled into the agent. Permissions are often long-lived because repeated re-authentication harms UX. Logs are centralized but may lack machine-local detail. Approval checkpoints exist, but they frequently sit above the actual execution substrate. Failure containment is therefore largely policy-driven and retrospective.

In the secure-local-agent pattern, usefulness comes from controlled proximity to context and tools. Sensitive data can remain near the device or enterprise boundary. Permissions can be narrowed to session, task, or object level because the local runtime is closer to the resources being touched. Logging can include both semantic traces and concrete system events. Approval checkpoints can be attached directly to capability grants and execution boundaries. Failure containment is therefore more structural.

Neither pattern is inherently perfect. Cloud systems still win on fleet management, rapid updates, and centralized coordination. But for high-autonomy agents acting on sensitive environments, the local pattern offers a better security geometry. It reduces the distance between policy and execution, and that usually matters more than the slogan “runs locally.”


Residual risks do not disappear just because the box is local

There is a temptation to over-correct here and treat local deployment as a magic trust upgrade. That would be a mistake.

A compromised local machine is still a compromised local machine. If the host operating system is infected, if endpoint controls are weak, or if the device is physically exposed, then a local agent may become a very convenient mechanism for accelerating damage. Likewise, overbroad permissions remain overbroad whether granted in the cloud or on-device. An agent with unrestricted filesystem access, open network egress, and persistent tokens is dangerous in either setting.
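Because overbroad grants are dangerous wherever they are issued, a runtime can lint each grant before it takes effect. The sketch below flags the three patterns named in the text. `GrantSpec`, the sentinel values (`"/"` for the whole disk, `"*"` for any host), and the one-hour token ceiling are hypothetical choices.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GrantSpec:
    filesystem_paths: tuple[str, ...]   # "/" means the whole disk
    egress_hosts: tuple[str, ...]       # "*" means unrestricted outbound traffic
    token_ttl_s: Optional[int]          # None means a persistent token


MAX_TOKEN_TTL_S = 3600  # hypothetical ceiling for credential lifetime


def lint_grant(spec: GrantSpec) -> list[str]:
    """Return findings for overbroad grants; an empty list means none were found."""
    findings = []
    if any(p in ("/", "~", "") for p in spec.filesystem_paths):
        findings.append("unrestricted filesystem access")
    if "*" in spec.egress_hosts:
        findings.append("open network egress")
    if spec.token_ttl_s is None or spec.token_ttl_s > MAX_TOKEN_TTL_S:
        findings.append("persistent or long-lived token")
    return findings
```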

There is also a softer but equally important risk: false trust in the word “local.” Teams may assume that because inference or orchestration happens near the user, data handling is automatically safe. In reality, many so-called local systems still call remote APIs, sync logs externally, fetch third-party tools, or permit unrestricted outbound communication. If those paths are not explicit and governed, the system can quietly recreate the same exposure pattern under a more reassuring label.

Finally, human approval can degrade into theater if the prompts are too frequent, too vague, or too detached from the actual risk. A control plane that floods users with routine approvals will train them to rubber-stamp the rare dangerous one. The answer is not more pop-ups. It is better risk stratification and clearer routing.

The real design lesson

The next phase of agent engineering will not be won by teams that merely improve planning quality. It will be won by teams that treat autonomy as a systems problem.

Secure local AI computers matter because they make a different systems architecture viable. They let builders move policy enforcement closer to execution. They allow capability gating to be granular and contextual. They make sandboxing practical as a default tool substrate. They support richer audit trails tied to concrete machine events. They enable control planes that govern action classes rather than blindly trusting a model to remain inside informal boundaries. And they improve local-first privacy not by eliminating risk, but by making failure containment more realistic.

The trust boundary, in other words, shifts away from the model as the sole object of confidence and toward a layered runtime design: model, policy engine, capability broker, sandbox executor, approval router, and event log. That is a healthier architecture because it assumes the model will sometimes be wrong, the prompt will sometimes be attacked, and the operator will sometimes need forensic clarity afterward.

This is why the current local-agent push deserves to be read as an architectural signal, not just a packaging decision. The important question is not whether an AI agent runs on a Mac mini, an enterprise workstation, or a server in a private rack. The important question is whether the system uses that placement to redesign control.

If it does, then “secure local AI computer” is not just a hardware story. It is the beginning of a more serious agent runtime: one where capability gating is explicit, execution boundaries are enforceable, audit trails are native, and human oversight is part of the control plane rather than a last-minute apology for autonomy.