Alibaba and Baidu are not just shipping another round of enterprise AI packaging. The more revealing signal in their agent-platform push is architectural: the market is drifting away from the story that one giant model, wrapped in enough tools and memory, can serve as the universal worker for the firm. What enterprise vendors are actually building looks much closer to a supervised computational graph: a system that decomposes requests, assigns bounded subproblems to specialists, mediates their interactions, records state transitions, and exposes a control plane that operations, security, and compliance teams can inspect. That convergence is not a stylistic preference. It is what happens when agentic systems leave the demo layer and collide with procurement rules, access boundaries, exception paths, audit obligations, and the ugly combinatorics of real workflows.

Why the single mega-agent breaks the moment the workflow becomes real

The single mega-agent is the natural first fantasy because it compresses the problem into one policy. Put enough context into one prompt, give the model access to every enterprise tool, and let it plan end-to-end. In abstract form, this is a centralized decision process

$$a_t = \pi(g, h_t, M_t, \mathcal{T})$$

where a single policy $\pi$ chooses action $a_t$ from user objective $g$, conversational history $h_t$, memory $M_t$, and tool set $\mathcal{T}$. It sounds elegant because it hides the orchestration problem inside the model. In production, that elegance turns into a concentration of failure modes.

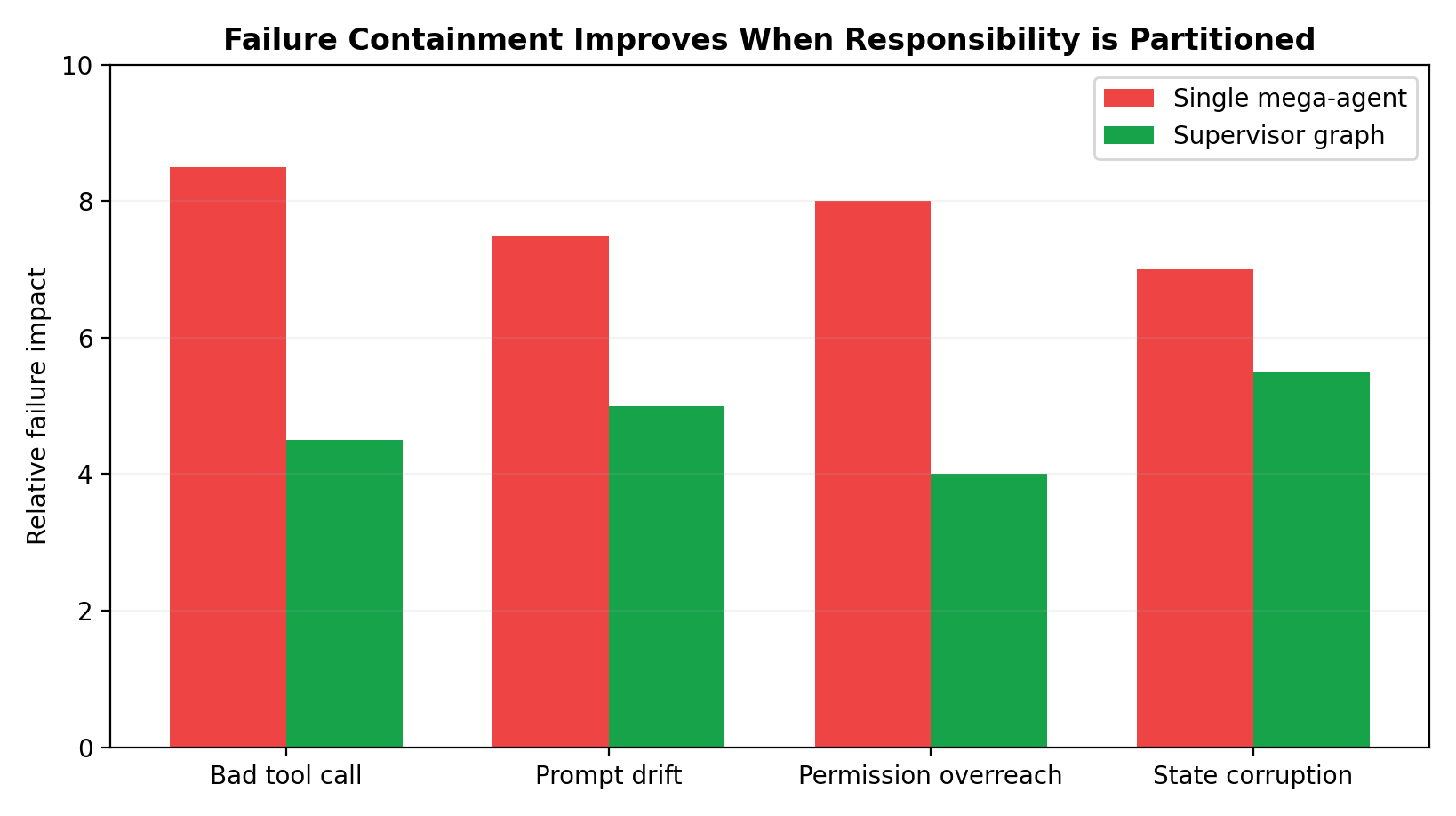

The first breakdown is representational overload. Enterprise tasks are not merely long; they are heterogeneous. A support escalation may require policy retrieval, transaction lookup, fraud screening, customer-history synthesis, compensation calculation, approval routing, and finally deterministic mutations in CRM and billing systems. These subproblems live in different semantic spaces. The model must keep legal constraints, operational state, raw tool outputs, partial plans, and natural-language rationales simultaneously alive in one rolling context. The larger the workflow horizon, the more the model is forced to compress earlier information into summaries rather than preserve it as executable state. Once that compression starts, decisions depend on model-authored recollections of prior facts rather than the facts themselves.

The second breakdown is objective entanglement. A mega-agent is usually asked to do too many logically distinct jobs at once: infer intent, decide decomposition, select tools, construct tool arguments, interpret results, validate its own work, manage exceptions, draft user-facing text, and decide when to ask for help. These are not merely multiple steps; they are different control roles. When one policy performs all of them, there is no principled boundary between planning, execution, and verification. A completion-seeking model will often smooth over uncertainty rather than expose it, because the same latent process that wants to finish the task is also the process supposedly responsible for checking whether the task should be finished.

The third breakdown is error geometry. In long-horizon tasks, mistakes do not stay local. They compound. If an early retrieval step omits the controlling policy document, the planner reasons over an incomplete state. If the planner misclassifies the task, the wrong tools are called. If the wrong tool output is then summarized as authoritative, every downstream step inherits the error. With a monolithic agent, the entire trajectory becomes one coupled chain:

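$$e_t \rightarrow (a_{t+1}, o_{t+1}) \rightarrow e_{t+1} \rightarrow (a_{t+2}, o_{t+2}) \rightarrow \cdots$$

where error state $e_t$ at time $t$ conditions later actions $a_{t+1}$ and observations $o_{t+1}$. In practice, this creates hidden path dependence. The model is not just making one mistake; it is gradually constructing a world in which that mistake appears internally coherent.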

The fourth breakdown is security architecture. Enterprises do not like universal principals. They do not want one software entity with standing authority to read contracts, trigger refunds, create tickets, send external emails, and update ERP rows. Even if one could technically grant that authority, it would violate separation-of-duties norms that already exist in the organization. The problem is not merely that the agent might hallucinate. The problem is that the architecture itself collapses distinctions between “may inspect,” “may recommend,” “may prepare,” and “may execute.”

The fifth breakdown is observability. When a single mega-agent fails, the postmortem often degrades into theatrical archaeology. Teams stare at a long transcript and ask whether the root cause was missing context, bad retrieval, a prompt regression, tool misuse, stale memory, model drift, or an upstream system outage. Because the architecture collapses all control logic into one conversational stream, diagnosis becomes interpretive rather than structural.

This is why the single-agent story remains strong in demos and weak in governed environments. Demos reward fluidity. Enterprises reward boundedness.

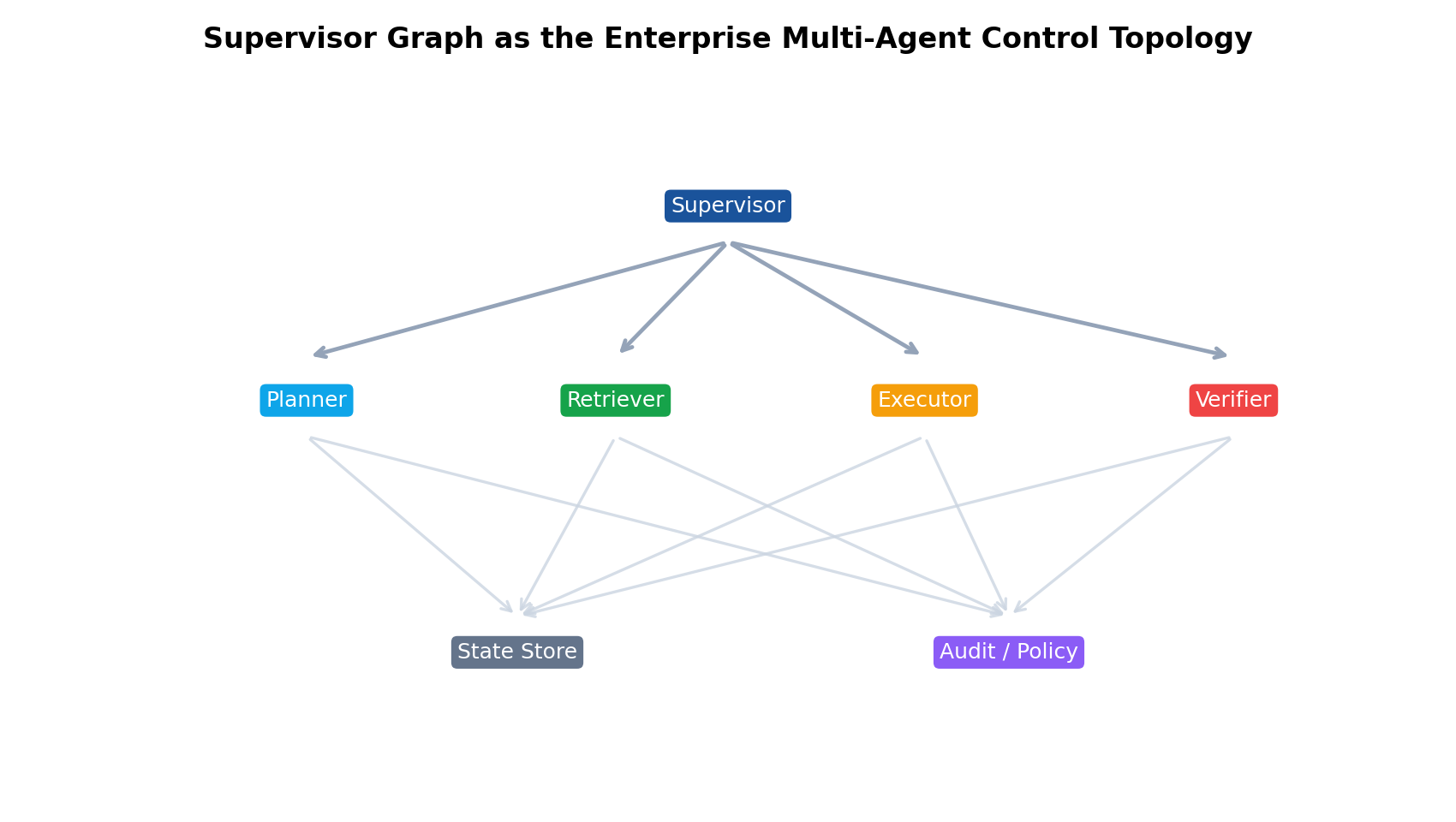

Supervisor graphs as the actual computational model

What is replacing the mega-agent is not just “multiple agents,” which is too vague to be useful. The more precise abstraction is a supervisor graph. Let

$$G = (V, E, S, \delta)$$

where $V$ is a set of computation nodes, $E$ is a set of allowed transitions, $S$ is the typed workflow state, and $\delta$ is the transition function that moves the system from one state to another. A node may be a specialist agent, a retrieval routine, a validator, a policy engine, a deterministic executor, or a human checkpoint. The supervisor is not just another node with a fancy label. It is the mechanism that interprets state, chooses which node fires next, enforces transition constraints, and decides when the graph should branch, merge, suspend, retry, or terminate.

This matters because the graph externalizes coordination. The system no longer pretends that orchestration is a hidden capability emergent from one prompt. Instead, orchestration becomes first-class software. That makes the control problem explicit enough to govern.

In practical enterprise systems, the graph often mixes several topologies. There is usually a hub-and-spoke layer in which a central supervisor routes work to specialists. There may be hierarchical subgraphs for domains like finance, support, legal, or IT operations. Some paths form DAGs because independent subtasks can run in parallel. Other paths are cyclic because validation can send work back upstream for revision. These are not academic design flourishes. Enterprise workflows already contain approvals, exception branches, compensating actions, and human escalations. The graph simply stops lying about that reality.

A good supervisor graph does three things that a mega-agent cannot do reliably. First, it constrains the action space at each stage. A policy-analysis node is not allowed to mutate enterprise records; an execution node is not allowed to reinterpret policy. Second, it makes transition conditions explicit. The system can say, in code, that a payment action is only reachable if fraud risk is below threshold, required evidence is present, and approval status is satisfied. Third, it supports localized failure handling. A retrieval node can fail and retry without forcing the entire workflow to regenerate its narrative of the world.
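The first two properties, a constrained action space and explicit transition conditions, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's implementation: the node names, the `WorkflowState` fields, and the 0.3 fraud threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

@dataclass
class WorkflowState:
    fraud_risk: float = 1.0          # 0.0 (safe) .. 1.0 (risky); pessimistic default
    evidence_present: bool = False
    approved: bool = False
    visited: List[str] = field(default_factory=list)

Guard = Callable[[WorkflowState], bool]

# Allowed transitions (edges), each with an explicit guard predicate.
# Node names and the 0.3 threshold are illustrative assumptions.
EDGES: Dict[Tuple[str, str], Guard] = {
    ("classify", "gather_evidence"): lambda s: True,
    ("gather_evidence", "policy_review"): lambda s: s.evidence_present,
    ("policy_review", "execute_payment"): lambda s: (
        s.fraud_risk < 0.3 and s.evidence_present and s.approved
    ),
    ("policy_review", "human_review"): lambda s: True,
}

def can_transition(src: str, dst: str, state: WorkflowState) -> bool:
    """A transition is legal only if the edge exists and its guard passes."""
    guard = EDGES.get((src, dst))
    return guard is not None and guard(state)

def next_node(src: str, preferred: List[str], state: WorkflowState) -> str:
    """Supervisor routing: take the first preferred target whose guard passes."""
    for dst in preferred:
        if can_transition(src, dst, state):
            state.visited.append(dst)
            return dst
    raise RuntimeError(f"no legal transition out of {src!r}")
```

With an unapproved request, `next_node("policy_review", ["execute_payment", "human_review"], state)` falls through to `human_review`. The payment node is simply unreachable until the guard passes, whatever any model upstream would prefer.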

If one wants a distributed-systems analogy, the mega-agent is a monolith that keeps pretending it is stateless while secretly depending on a giant in-memory context. The supervisor graph is closer to a workflow engine with learned components embedded inside it.

Task decomposition and specialist allocation are not prompt tricks; they are complexity control

People often explain decomposition as a way to “help the model think step by step.” That undersells the engineering reason. Decomposition is how the platform reduces the entropy any one component must manage.

Suppose a user asks the system to resolve a failed enterprise order that includes duplicate billing, a shipping exception, and a contract-specific compensation clause. In a monolithic design, one agent must simultaneously infer what the problem is, collect evidence, identify controlling policy, estimate permissible remedies, and execute the remedy through tools. In a supervisor graph, the same request is transformed into typed subtasks: classification, evidence acquisition, policy interpretation, remedy synthesis, risk review, and deterministic execution. Each subproblem is smaller not only in length but in objective surface area.

One can think of the expected decision complexity at a node as some function

$$D(n) = f(H_I, H_A, H_F)$$

where $H_I$ is the entropy of information the node $n$ must consider, $H_A$ is the entropy of its allowed actions, and $H_F$ is the entropy of relevant failure modes. Specialization works because it lowers all three terms. A retrieval specialist sees less action space than a global agent. A policy specialist sees less tool ambiguity. A deterministic executor sees essentially no interpretive ambiguity at all.

This is also where model heterogeneity becomes a feature rather than an inconvenience. Not every node deserves the most expensive reasoning model. Lightweight classifiers can perform routing. Retrieval nodes may use embeddings and rerankers. Contract-analysis nodes may justify a frontier reasoning model. Execution nodes may be pure code with no model call at all. The graph therefore allocates compute where uncertainty actually lives:

$$\text{Compute} = \sum_{p \in P} \sum_{n \in p} \mathrm{cost}(n)$$

where $P$ is the set of active paths and $\mathrm{cost}(n)$ is the compute spent at node $n$. The enterprise optimization problem is not to minimize the number of model calls at all costs. It is to place expensive reasoning on the nodes where it changes correctness and remove it from nodes where it merely adds theatrical intelligence to deterministic work.
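A rough sketch of that allocation idea is to assign each node a model tier and sum cost over the active paths. The tier names, node names, and integer cost units below are invented for illustration; real prices and routing tables would come from the platform.

```python
from typing import Dict, List

# Hypothetical node-to-tier assignment (names are assumptions, not a real API).
NODE_TIER: Dict[str, str] = {
    "route": "classifier",
    "retrieve": "embedding",
    "contract_analysis": "frontier",
    "execute": "code",
}

# Made-up relative cost units per invocation of each tier.
TIER_COST: Dict[str, int] = {"code": 0, "classifier": 1, "embedding": 2, "frontier": 50}

def path_cost(path: List[str]) -> int:
    """Cost of one active path: the sum of its nodes' tier costs."""
    return sum(TIER_COST[NODE_TIER[node]] for node in path)

def total_cost(active_paths: List[List[str]]) -> int:
    """Cost of a run: the sum over all active paths."""
    return sum(path_cost(path) for path in active_paths)

def frontier_share(active_paths: List[List[str]]) -> float:
    """Fraction of total cost spent on frontier-model nodes."""
    total = total_cost(active_paths)
    frontier = sum(
        TIER_COST["frontier"]
        for path in active_paths
        for node in path
        if NODE_TIER[node] == "frontier"
    )
    return frontier / total if total else 0.0
```

Under these made-up numbers, a run with one contract-analysis path and one trivial route-and-execute path spends nearly all of its budget on the single frontier call. That is the desired shape, since it is the only node where reasoning quality changes the outcome.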

There is another, subtler benefit. Specialist allocation makes evaluation tractable. A global agent is hard to score because success hides too many latent causes. A retrieval node can be measured on citation precision and recall. A policy node can be tested against gold legal or compliance interpretations. An executor can be scored on schema adherence and side-effect correctness. Once the platform decomposes the workflow, it can decompose blame and improvement too.
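As one concrete instance of per-node scoring, a retrieval node can be evaluated in isolation against a labeled set of relevant documents. The function below is a generic precision/recall sketch; the document identifiers in the usage note are placeholders.

```python
from typing import Iterable, Tuple

def precision_recall(retrieved: Iterable[str], relevant: Iterable[str]) -> Tuple[float, float]:
    """Score one retrieval call against a gold set of relevant document ids."""
    got, gold = set(retrieved), set(relevant)
    hits = len(got & gold)
    precision = hits / len(got) if got else 0.0
    recall = hits / len(gold) if gold else 0.0
    return precision, recall
```

For example, retrieving `["a", "b", "c", "d"]` when the gold set is `["a", "c", "e"]` gives precision 0.5 and recall 2/3. The score belongs to the retrieval node alone, independent of whatever the planner or executor did afterward.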

Inter-agent protocols, arbitration, and conflict handling are the real heart of multi-agent systems

The phrase “multi-agent” invites people to imagine several LLMs chatting. That is usually the wrong mental model for enterprise systems. Free-form conversation between agents is charming until it has to survive scale, retries, and audits. What enterprises need is protocol, not banter.

A useful message abstraction is

$$m = (\tau, x, \rho, c, \lambda, \phi)$$

where $\tau$ is message type, $x$ is payload, $\rho$ is provenance, $c$ is confidence or calibration metadata, $\lambda$ encodes permissions or policy labels, and $\phi$ is freshness or expiry. This lets nodes exchange machine-interpretable outputs rather than paragraphs that must be re-understood from scratch by every downstream model.

The protocol question is not cosmetic. It determines whether the supervisor can perform principled routing. If a retrieval node emits “I found something relevant in policy docs,” that is human-readable and operationally weak. If it emits a typed structure saying that clause 7.2 from policy version 14 conflicts with a requested refund amount and carries source authority score 0.93, the supervisor has something it can actually reason over.
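A typed message of this kind might look like the sketch below. The field names, the `routable` helper, and the 0.8 confidence floor are assumptions chosen for illustration, and the source authority score is mapped onto a generic confidence field for simplicity.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Any, Dict, FrozenSet

@dataclass(frozen=True)
class AgentMessage:
    msg_type: str                  # message type
    payload: Dict[str, Any]        # structured content, not free text
    provenance: str                # where the claim came from
    confidence: float              # confidence or calibration metadata
    policy_labels: FrozenSet[str]  # permissions or policy labels
    expires_at: datetime           # freshness or expiry

    def is_fresh(self, now: datetime) -> bool:
        return now < self.expires_at

def routable(msg: AgentMessage, now: datetime, min_confidence: float = 0.8) -> bool:
    """The supervisor acts only on fresh, sufficiently confident messages."""
    return msg.is_fresh(now) and msg.confidence >= min_confidence
```

The policy conflict from the paragraph above would then travel as `AgentMessage("policy_conflict", {"clause": "7.2", "policy_version": 14}, "policy-docs", 0.93, frozenset({"finance"}), expires_at)`. A supervisor can filter, compare, and log that without re-reading any prose.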

Conflict is inevitable in these systems because specialist views are partial and differently grounded. A retrieval node may surface documents suggesting one course of action while a policy node rejects that course under a newly effective contract clause. A fraud model may label an account risky while customer success metadata shows high-value status that would normally justify exception handling. The architecture therefore needs explicit arbitration.

The simplest arbitration model is rule-prioritized resolution: system-of-record facts outrank model inference, signed policy beats loose retrieval, human approval outranks automated recommendation, and hard business rules outrank heuristic scoring. But complex workflows often need a richer objective. One can write the arbitration problem as

$$a^{*} = \arg\max_{a} \; U(a \mid y_1, \ldots, y_k, S_t)$$

subject to

$$C_j(a, S_t) \le 0 \quad \text{for all } j$$

where $y_1, \ldots, y_k$ are specialist outputs, $S_t$ is current workflow state, $U$ is utility under business objectives, and $C_j$ are hard constraints such as permissions, regulatory boundaries, or monetary thresholds. This is the point where “agent orchestration” starts to look less like prompting and more like constrained control.
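A small sketch of constrained arbitration: filter candidate actions through hard constraints first, then pick the highest-utility survivor, breaking ties by source authority. The total ordering in `PRIORITY` is an assumption that flattens the pairwise rules above into one ranking, and constraints are written as boolean predicates rather than inequalities.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

@dataclass(frozen=True)
class Candidate:
    action: str
    amount: float
    utility: float   # score under business objectives
    source: str      # which kind of evidence backs this candidate

# Lower number = higher authority. This ranking is an illustrative assumption.
PRIORITY = {
    "human_approval": 0,
    "system_of_record": 1,
    "signed_policy": 2,
    "model_inference": 3,
}

Constraint = Callable[[Candidate], bool]  # True means the constraint is satisfied

def arbitrate(candidates: List[Candidate],
              constraints: List[Constraint]) -> Optional[Candidate]:
    """Return the best feasible candidate, or None to signal escalation."""
    feasible = [c for c in candidates if all(ok(c) for ok in constraints)]
    if not feasible:
        return None
    # Maximize utility; on ties prefer the more authoritative source.
    return max(feasible, key=lambda c: (c.utility, -PRIORITY.get(c.source, 99)))
```

Returning `None` rather than the least-bad infeasible option is deliberate. When no candidate satisfies the hard constraints, the supervisor should escalate instead of relaxing them.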

Conflict handling also requires knowing which disagreements should trigger retries and which should trigger escalation. Re-running the same model on the same contradictory evidence is usually not a strategy; it is a way of paying twice for indecision. A good supervisor distinguishes transient failures from semantic ones. Transient failures include timeouts, rate limits, and unavailable upstream systems. Semantic failures include unresolved evidence contradiction, invalid action plans, or calibration collapse. The first class invites retry logic. The second class invites arbitration, additional evidence gathering, branch rollback, or human review.
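The retry-versus-escalate split can be made explicit in code. The failure codes below mirror the examples in the paragraph above; the code strings themselves, the retry ceiling, and the backoff parameters are hypothetical.

```python
from enum import Enum

class FailureKind(Enum):
    TRANSIENT = "transient"
    SEMANTIC = "semantic"

class Decision(Enum):
    RETRY = "retry"
    ESCALATE = "escalate"

# Illustrative failure codes; a real platform would define its own taxonomy.
TRANSIENT_CODES = {"timeout", "rate_limited", "upstream_unavailable"}

def classify(code: str) -> FailureKind:
    """Unknown codes are treated as semantic, which is the conservative choice."""
    return FailureKind.TRANSIENT if code in TRANSIENT_CODES else FailureKind.SEMANTIC

def decide(code: str, attempt: int, max_retries: int = 3) -> Decision:
    """Retry only transient failures, and only below the retry ceiling."""
    if classify(code) is FailureKind.TRANSIENT and attempt < max_retries:
        return Decision.RETRY
    return Decision.ESCALATE

def backoff_seconds(attempt: int, base: float = 0.5, cap: float = 30.0) -> float:
    """Capped exponential backoff between retries."""
    return min(cap, base * 2 ** attempt)
```

Here `ESCALATE` stands in for the whole second class of responses: arbitration, additional evidence gathering, branch rollback, or human review. A contradiction in the evidence never enters the retry loop at all.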

There is a practical lesson hiding here: once agents are decomposed, the interesting problem is no longer “how do we make them talk?” It is “how do we stop them from talking in ways the platform cannot adjudicate?”

State, memory, and execution semantics decide whether the system is a workflow engine or a hallucination engine

The phrase “agent memory” has been abused enough to become almost content-free. In enterprise systems, memory has to be divided according to mutation rights and execution semantics.

At minimum, there are three distinct layers. There is immutable event history: every node invocation, tool result, approval event, denial event, retry, timeout, and state transition. There is live workflow state: the current objective, branch statuses, pending actions, evidence references, confidence metadata, and outstanding exceptions. And there is long-lived business memory: customer records, durable facts, resolved cases, entity links, and versioned policy artifacts.

A useful representation is

$$M_t = (L_t, S_t, B)$$

where $L_t$ is append-only event history up to time $t$, $S_t$ is current workflow state, and $B$ is business memory or persistent knowledge. Different nodes should have different read and write privileges over these components. A retrieval specialist might read $B$ and append a retrieval event to $L_t$, but it should not rewrite prior approvals in $L_t$. An execution node may update $S_t$ with a successful action result but should not be able to silently alter provenance already committed to $L_t$.
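One way to make those privileges concrete is to give each layer a different mutability contract. In this sketch the history exposes only append and snapshot, business memory is handed out as a read-only view, and workflow-state writes go through a per-node rights table. The class names and the `WRITE_RIGHTS` table are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Mapping, Tuple

@dataclass(frozen=True)
class Event:
    node: str
    kind: str
    detail: str

class EventLog:
    """Append-only history: events can be added and read, never edited."""
    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, event: Event) -> None:
        self._events.append(event)

    def snapshot(self) -> Tuple[Event, ...]:
        return tuple(self._events)  # immutable copy for readers

@dataclass
class Memory:
    history: EventLog            # immutable event history
    state: Dict[str, Any]        # live workflow state
    business: Mapping[str, Any]  # long-lived business memory (read-only view)

# Which nodes may write workflow state (hypothetical rights table).
WRITE_RIGHTS = {"retrieval": set(), "executor": {"state"}}

def update_state(mem: Memory, node: str, key: str, value: Any) -> None:
    """Write workflow state only if the node holds the right; always log it."""
    if "state" not in WRITE_RIGHTS.get(node, set()):
        raise PermissionError(f"{node} may not write workflow state")
    mem.state[key] = value
    mem.history.append(Event(node, "state_update", f"{key}={value!r}"))
```

Passing `types.MappingProxyType(records)` as `business` means that even a buggy node cannot assign into business memory, because the view raises `TypeError` on item assignment.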

This separation is what makes replay, checkpointing, and recovery possible. If the platform persists deterministic checkpoints at important cut lines, then a crashed or interrupted run can resume from a known state rather than from a model-generated summary of “what we were doing.” In formal terms, the workflow should approximate a transition system

$$S_{t+1} = \delta(S_t, a_t, o_t)$$

where $a_t$ is the chosen action or node transition and $o_t$ is the resulting observation. The important thing is that $S_t$ is externally represented by the workflow engine, not merely implied inside model text.
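A toy version of checkpoint and resume, assuming a pure transition function and JSON-serializable state. The step names and the shape of the state dictionary are invented for the example.

```python
import json
from typing import Any, Dict, Iterable, Tuple

State = Dict[str, Any]
Step = Tuple[str, Dict[str, Any]]  # (action, observation)

def delta(state: State, action: str, observation: Dict[str, Any]) -> State:
    """Pure transition: returns a new state and never mutates the input."""
    new_state = dict(state)
    new_state[action] = observation
    new_state["step"] = state.get("step", 0) + 1
    return new_state

def replay(initial: State, steps: Iterable[Step]) -> State:
    """Rebuild state by applying recorded steps in order."""
    state = initial
    for action, observation in steps:
        state = delta(state, action, observation)
    return state

def checkpoint(state: State) -> str:
    """Persist state deterministically (sorted keys give stable output)."""
    return json.dumps(state, sort_keys=True)

def restore(blob: str) -> State:
    return json.loads(blob)
```

Because the transition is pure, resuming from a checkpoint taken after step two and applying step three yields exactly the state an uninterrupted run would have produced. That equality is what a model-written summary of prior progress cannot guarantee.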

Execution semantics matter especially once side effects enter the picture. Reading documents and writing summaries can tolerate some ambiguity. Mutating records, issuing refunds, creating tickets, sending legally meaningful messages, or triggering downstream workflows cannot. This is why mature platforms increasingly separate action intent from action execution. A planner may propose a structured intent like “issue partial credit under clause 7.2 and open expedited replacement if stock permits.” A deterministic execution layer then compiles that intent into actual API requests, verifies preconditions, enforces idempotency, and returns typed outcomes.

Without that separation, the system effectively asks a language model to both propose and perform side effects. That is cute right up until the second duplicate refund.
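A minimal sketch of that separation follows. The planner emits a structured `Intent`; a deterministic `Executor` checks preconditions, derives an idempotency key, and applies the side effect at most once. The class names, the credit ceiling, and the key derivation are assumptions for illustration, and the counter stands in for a real API call.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class Intent:
    workflow_id: str
    action: str
    amount_cents: int
    justification: str

class Executor:
    """Deterministic layer: verifies preconditions and enforces idempotency."""

    def __init__(self, max_credit_cents: int) -> None:
        self.max_credit_cents = max_credit_cents
        self._results: Dict[str, dict] = {}
        self.side_effects = 0  # stand-in for calls made to a real billing API

    @staticmethod
    def idempotency_key(intent: Intent) -> str:
        raw = json.dumps([intent.workflow_id, intent.action, intent.amount_cents])
        return hashlib.sha256(raw.encode()).hexdigest()

    def execute(self, intent: Intent) -> dict:
        key = self.idempotency_key(intent)
        if key in self._results:
            # Same intent seen before: return the recorded outcome, do nothing new.
            return {**self._results[key], "replayed": True}
        allowed = (intent.action == "issue_credit"
                   and 0 < intent.amount_cents <= self.max_credit_cents)
        if allowed:
            self.side_effects += 1
            result = {"status": "applied", "replayed": False}
        else:
            result = {"status": "rejected", "replayed": False}
        self._results[key] = result
        return result
```

Submitting the same intent twice returns the recorded outcome with `replayed` set and leaves `side_effects` at one, so a planner that repeats itself after a retry cannot issue the second refund.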

Observability, auditability, and enterprise control planes are not optional garnish

A consumer agent can get away with a transcript and a shrug. An enterprise platform cannot. Once agentic workflows touch regulated data, external communications, operational systems, or money movement, the platform needs a control plane.

The first job of that control plane is observability. Every node execution should produce a trace span with attributes such as model version, prompt or policy version, input references, tool schema version, latency, token usage, routing decision, and downstream effects. The interesting unit is not the chat transcript but the execution trace over the graph. When something goes wrong, operations teams need to ask precise questions: which node introduced the bad assumption, which policy version was applied, whether the system acted on stale retrieval, whether a validator was bypassed, whether the branch should have been blocked at a permission gate.
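A hand-rolled sketch of per-node trace spans, using only the standard library. A production system would more likely use an established tracing framework; the `Span` fields and the `node_span` helper here are hypothetical and show only the shape of the data.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, List

@dataclass
class Span:
    trace_id: str
    node: str
    attributes: Dict[str, Any] = field(default_factory=dict)
    status: str = "running"
    latency_s: float = 0.0

@contextmanager
def node_span(sink: List[Span], trace_id: str, node: str,
              **attributes: Any) -> Iterator[Span]:
    """Record one node execution, including failures, into `sink`."""
    span = Span(trace_id, node, dict(attributes))
    start = time.perf_counter()
    try:
        yield span
        span.status = "ok"
    except Exception as exc:
        span.status = "error"
        span.attributes["error"] = repr(exc)
        raise  # the span observes the failure; it does not swallow it
    finally:
        span.latency_s = time.perf_counter() - start
        sink.append(span)
```

Wrapping each node call in `with node_span(sink, trace_id, "policy_review", model_version=..., policy_version=...)` yields one span per execution. The question of which policy version was applied then becomes a lookup over span attributes rather than a reading of the transcript.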

The second job is auditability. Enterprises need to reconstruct not only what happened but whether what happened was allowed. That requires durable provenance. Which evidence source justified the action? Which approval threshold was in effect? Which human approved a high-risk branch? Which node interpreted the policy, and was that interpretation itself validated? This is one reason supervisor graphs are so attractive: they provide natural attachment points for policy checks and approval records. The architecture can represent, explicitly, that a transition from recommendation to execution requires a signed approval artifact or a policy-engine pass.

The third job is control. Enterprises do not merely observe agentic systems; they shape them. They set budgets, force model routing, disable risky tools, mark systems of record as authoritative, enforce redaction policies, choose retry ceilings, and route low-confidence cases into human queues. In other words, they need something analogous to a cloud control plane for agents. The point of the platform is not to unleash cognition and hope. It is to expose enough levers that organizational governance can survive contact with model behavior.

This is also where the Alibaba/Baidu signal matters. Large cloud vendors are naturally biased toward control-plane thinking because their enterprise customers already expect governance surfaces. Once agent platforms are sold into that world, “agent quality” becomes inseparable from permission models, observability, policy enforcement, and lifecycle management. The architecture that fits those expectations is a graph with supervision, not a charismatic universal worker.

Where supervisor graphs fail, and when they become too expensive

It would be neat if the story ended with “therefore graphs win.” Real systems are uglier than slogans. Supervisor graphs can fail in ways that are slower, more expensive, and more organizationally annoying than a single-agent system.

The most obvious problem is orchestration overhead. Every decomposition step adds routing cost, serialization cost, message normalization cost, and merge complexity. If the graph is overdesigned, the system starts to resemble a bureaucracy simulator: one agent drafts, another reformats, another validates, another revalidates, and the supervisor spends half its time managing the meta-work of coordination. This can destroy latency and cost efficiency. If a simple task does not genuinely benefit from decomposition, the graph becomes performative complexity.

There is also the problem of fragmentation. Local specialists can become myopic. A node optimized for policy strictness may over-block valuable resolutions. A node optimized for customer satisfaction may recommend actions that create accounting risk. A retrieval node may maximize citation relevance but fail to surface the one document that matters because it scores oddly under generic relevance metrics. In distributed systems language, local optima do not guarantee global coherence. The supervisor must continuously reconstruct the task-level objective or the graph degenerates into a collection of competent partials that collectively miss the point.

A deeper issue is correlated failure. It is tempting to think that more nodes mean more robustness because failures are isolated. Sometimes the opposite happens. If multiple specialists share the same stale retrieval corpus, the same flawed ontology, or the same misconfigured policy layer, they will fail together while creating the illusion of cross-validation. Worse, if the supervisor itself is weak, then the entire graph inherits a brittle control plane. The architecture can then exhibit a new kind of pathology: highly structured confusion.

Cost can also become non-linear. Consider a branching factor $b$ over depth $d$. In the worst case, the number of node invocations scales on the order of

$$O(b^{d})$$

if the graph explores too many branches before pruning. Real systems rarely hit the pure combinatorial worst case, but the intuition is correct: sloppy decomposition creates cost explosions. Add arbitration loops, retries, and validation passes, and the graph starts charging enterprise prices for committee behavior.
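To put numbers on that intuition, the sketch below counts node invocations for a full tree and for a tree where the supervisor keeps only a fixed number of branches per level. The pruning rule is a deliberately simple assumption and does not model any particular platform.

```python
def worst_case_invocations(b: int, d: int) -> int:
    """Full tree: 1 + b + b^2 + ... + b^d node invocations."""
    return sum(b ** level for level in range(d + 1))

def pruned_invocations(b: int, d: int, keep: int) -> int:
    """Each surviving node spawns b children; only `keep` survive per level."""
    total, frontier = 1, 1
    for _ in range(d):
        expanded = frontier * b
        total += expanded              # every expanded child costs one invocation
        frontier = min(expanded, keep)
    return total

print(worst_case_invocations(3, 4))    # 121
print(pruned_invocations(3, 4, 2))     # 22
```

With a branching factor of 3 and depth 4, the unpruned graph makes 121 invocations, while keeping two branches per level makes 22. The gap widens quickly as depth grows.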

There is a human-systems failure too. If the control plane surfaces too many knobs, too many traces, too many node types, and too many policy options, the platform becomes hard to operate. Governance then mutates into configuration sprawl. In that regime, the graph is technically superior and operationally miserable.

So there is no free lunch. Supervisor graphs are not universally better. They are better under a specific regime: tasks with heterogeneous subproblems, meaningful side effects, explicit compliance constraints, non-trivial exception paths, and a need for replayable accountability. Outside that regime, a lean single-agent system may still be cheaper and faster.

That is exactly why the current enterprise convergence is so telling. The vendors closest to real operational deployment are not betting on the universal mega-agent because they have already learned where it breaks. They are betting on supervised graphs because enterprise work is, in a very literal sense, graph-shaped: delegated, stateful, permissioned, interruptible, auditable, and full of branches that only look simple if you hide them inside one very expensive prompt.