reward shaping

Recent reports that AI systems can outperform roughly 99% of human competitors in elite capture-the-flag hacking tournaments are striking, but the headline matters less than the mechanism. The important shift is not that a language model suddenly “learned hacking.” It is that autonomous agents can now couple large-scale search, exploit construction, tool-mediated execution, and dense intermediate feedback tightly enough to solve tasks that were previously bottlenecked by human trial-and-error. CTFs are one of the cleanest settings in which this transition becomes visible because they expose the real algorithmic structure of competent offense: not one-shot brilliance, but iterative hypothesis generation under constraints.

Capture-the-flag as a laboratory for offensive reasoning

A capture-the-flag competition is best understood as a structured distribution over security problems. Each challenge presents an artifact—binary, web application, protocol transcript, memory image, firmware blob, cryptographic scheme, or sandboxed service—and asks the player to recover a secret string, trigger a privileged state transition, or derive an input that proves control. The “flag” is merely the verification token. What matters computationally is that the environment exposes a hidden mechanism and rewards agents that infer and manipulate it.

This makes CTFs unusually useful for studying autonomous hacking systems. Unlike open-ended real-world security work, the task boundaries are explicit, the scoring function is immediate, and the environment permits repeated interaction. A binary exploitation problem may require discovering a memory corruption primitive, identifying an information leak, reconstructing control-flow constraints, and synthesizing a payload. A web challenge may require understanding session handling, template injection, path traversal, or authentication logic. A crypto challenge may require recognizing a malformed assumption and mapping it to a solvable algebraic structure. Each problem is adversarially designed, but it is still a well-formed computational object.

In other words, CTFs compress a large portion of offensive security into a benchmarkable loop. They require reverse engineering, abstraction, strategic decomposition, tool use, and verification. They also punish superficial pattern matching. A system that merely regurgitates exploit templates rarely succeeds because the instance-specific details matter: register state, heap layout, input grammar, nonce construction, parser behavior, side-channel surface, and network timing all affect whether a candidate exploit is semantically valid.

Why CTF tasks are computational search problems

Most CTF tasks can be formalized as partially observed search problems over program states. Let an environment be defined by hidden state , observations , actions , and a verifier that returns success when the secret condition has been met. The agent interacts through a transition process

and seeks a policy over history that maximizes expected reward

The terminal reward might be sparse—zero everywhere and one only when the flag is captured. But the internal structure is rich. A pwn challenge can be represented as a search over exploit traces that satisfy control and memory constraints. A reversing task is a search over latent program semantics consistent with the disassembly and dynamic behavior. A web challenge is a search over server-side state machines reachable through crafted HTTP sequences. A cryptographic puzzle is a search over mathematical models that explain the observed outputs.

This perspective matters because it changes how we think about capable agents. Success does not primarily come from producing the right final string. It comes from navigating a hypothesis space. The agent must construct candidate models of the target system, test them through interaction, update beliefs, and allocate compute to promising branches. If we define a latent vulnerability model , then the agent is effectively performing approximate Bayesian or heuristic search over , selecting experiments that maximize information gain and exploitability:

Human experts do this implicitly. Strong agents do it explicitly, repeatedly, and at machine speed.

Why one-shot generation breaks down

A single forward pass through a language model is poorly matched to this problem class. One-shot generation assumes that the model can infer the relevant latent structure, choose a valid exploit strategy, and emit a correct payload or solution without sufficiently grounding itself in the target instance. That assumption fails for the same reason it fails in difficult theorem proving or competitive programming: the solution is not just long; it depends on hidden constraints revealed only through interaction.

Suppose a model observes a vulnerable C program and immediately emits a return-oriented programming chain. Even if the chain looks plausible syntactically, it may be invalid because the binary was compiled with PIE, the stack canary leaks only after a different code path, the buffer length estimate is wrong by eight bytes, the libc version changes gadget offsets, or the remote service sanitizes one of the required bytes. In web exploitation, the generated request sequence may assume a templating engine that is not present, a database backend with different escaping rules, or an authentication flow that invalidates the session after one failed probe. In cryptographic tasks, a guessed technique may be nearly right but miss a modulus property that makes the attack inapplicable.

The underlying issue is combinatorial sensitivity. Let denote a proposed exploit program. We can decompose its success probability as

where each is a latent correctness condition: precise offset, protocol sequencing, address resolution, timing, constraint satisfaction, and output parsing. Even moderately competent exploits require many such conditions to align simultaneously. A language model may assign high likelihood to the overall exploit template while still missing one low-probability but essential condition, collapsing end-to-end success.

This is why autonomous hacking systems are built around repeated execution and revision. They do not trust initial generations. They treat them as proposals to be compiled, run, mutated, rejected, or refined. The core capability is not generation alone but search over generations conditioned on feedback.

Search is the dominant mechanism, not the supporting detail

The most useful mental model for modern hacking agents is closer to AlphaGo, AlphaCode, or program synthesis systems than to chatbot-style question answering. The agent generates many partial candidates, evaluates them in an external environment, and concentrates computation on promising trajectories. Search is not a wrapper around intelligence; it is the operational form intelligence takes in this domain.

Consider a search tree whose nodes are partial attack states: observations collected so far, hypotheses about the vulnerability class, scripts or payload fragments produced, and measurements from execution. An edge corresponds to a concrete action such as running checksec, fuzzing an input field, instrumenting the binary in GDB, rewriting a format-string payload, or extracting a linear relation from challenge output. The agent’s task is to expand the tree in a way that balances exploration and exploitation.

A useful abstraction is best-first search over a state value

where estimates direct solvability from node , estimates how informative the next experiment may be, and cost captures time, tokens, execution budget, or instability risk. A practical agent may implement this with beam search, Monte Carlo tree search, iterative repair loops, or population-based sampling. The exact algorithm matters less than the fact that the system keeps multiple live hypotheses and lets environment feedback decide which branches survive.

This search-centric view explains why seemingly modest model improvements can lead to outsized jumps in solve rate when coupled with better orchestration. A slightly better prior over candidate exploit strategies is useful, but the larger gain often comes from reducing the branching factor or increasing the fidelity of node evaluation. If the agent can more accurately tell whether a crash is due to bad offset calculation versus ASLR mis-handling versus malformed transport framing, then the search frontier becomes dramatically cleaner. The resulting system looks “smart” because it wastes less compute on dead branches.

Exploit synthesis as constrained program construction

Once the search process identifies a plausible avenue, the core synthesis problem resembles constrained program construction. An exploit is not merely text. It is a program that must satisfy a set of semantic constraints induced by the target. We can write the synthesis objective as

Here encodes parsability and language correctness; encodes compatibility with the binary, protocol, runtime, or service behavior; and encodes the exploit objective, such as arbitrary read, control-flow hijack, authentication bypass, or plaintext recovery.

For pwn tasks, this often means constructing a sequence of transformations on machine state: prepare memory, leak an address, compute a base, pivot control, call a primitive, and retrieve output. For web tasks, it means synthesizing a valid interaction program over HTTP requests, cookies, payload encodings, and timing. For crypto tasks, it means constructing a computational proof strategy: infer the algebraic weakness, derive a solvable system, and implement the recovery algorithm.

This is why exploit generation benefits from ideas imported from symbolic execution, SMT solving, and counterexample-guided inductive synthesis. The agent can ask a language model to draft an exploit scaffold, but the scaffold becomes useful only after it is checked against concrete constraints. Dynamic execution yields counterexamples: the offset was wrong, the gadget clobbered a register, the parser rejected the payload, the inferred modulus relation failed on held-out samples. These counterexamples prune the search space and refine the synthesis process.

A productive architecture therefore alternates between generative priors and hard checks. The language model proposes, external tools constrain, and the loop repeats. The best systems do not confuse fluent code with valid code. They treat fluent code as a high-entropy proposal distribution over candidate programs.

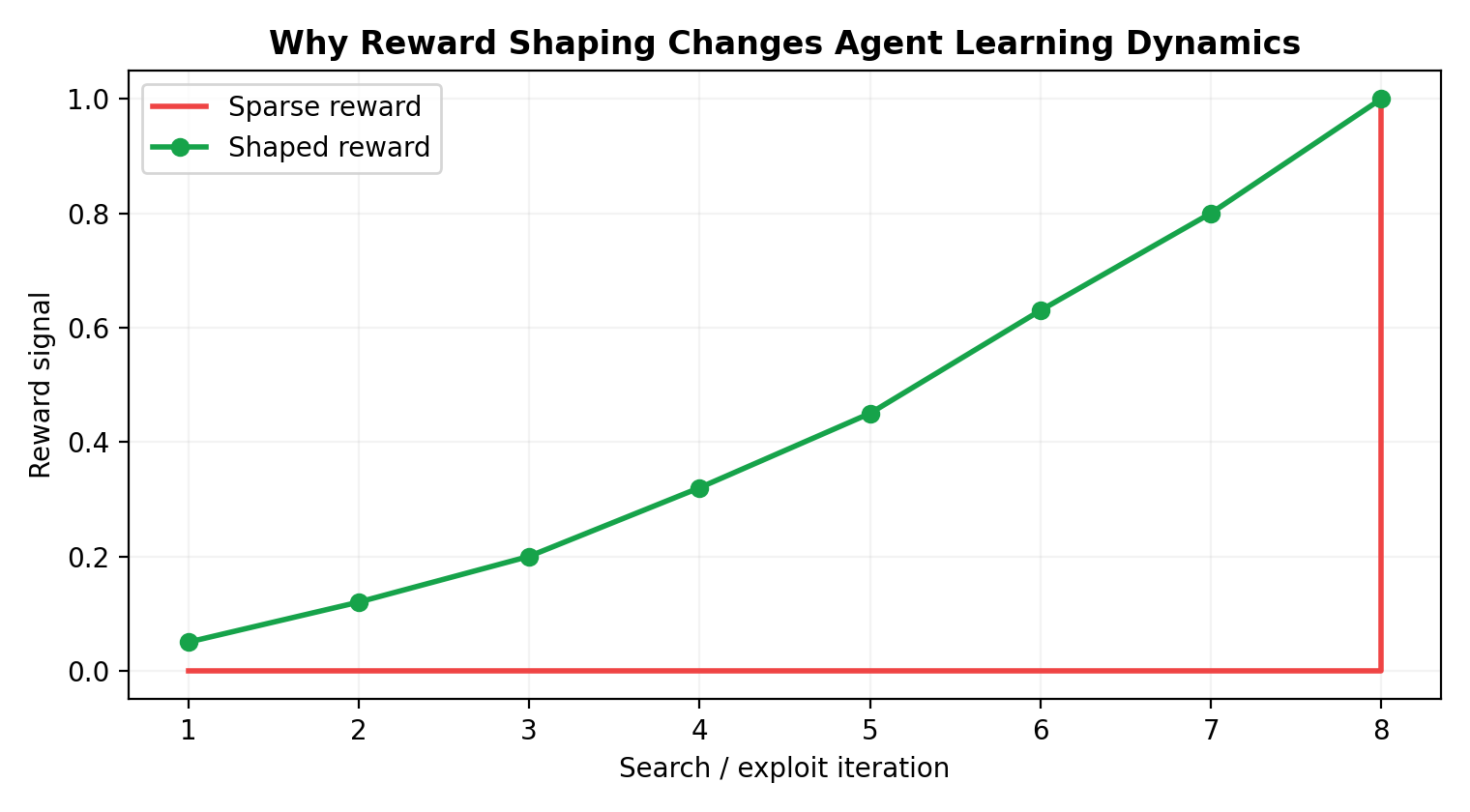

Reward shaping turns sparse verification into learnable progress

Pure CTF scoring is sparse. A challenge may yield reward only when the final flag is submitted, which is a terrible learning signal for long-horizon search. Reward shaping solves this by constructing intermediate signals that correlate with eventual success. The agent is then optimized not merely for terminal capture but for making measurable progress through the hidden causal graph of the task.

Let the base reward be . A shaped reward can be defined as

where each feature represents an intermediate event: unique crash obtained, instruction pointer controlled, canary leaked, stack offset inferred, binary protections identified, endpoint enumeration expanded, SQL error surfaced, ciphertext structure matched, or symbolic constraints reduced. The weights encode which subgoals are informative for the challenge family.

The shaping function matters because it effectively defines the optimization geometry. If the reward favors superficial activity—many requests, many tool calls, many generated scripts—then the agent learns busy behavior. If it rewards semantically meaningful subgoals, then it learns ladders of competence. In binary exploitation, “got a crash” is useful but much weaker than “reproduced crash with controlled overwrite,” which is weaker than “derived stable instruction-pointer control under remote settings.” In web tasks, “received an error” is weaker than “proved server-side template injection with arithmetic evaluation under the current rendering context.”

A deeper point is that reward shaping can be epistemic as well as instrumental. Intermediate rewards need not correspond only to exploit progress; they can measure uncertainty reduction. If an experiment sharply narrows the plausible vulnerability class, that information has future value even when it does not immediately move the score. Strong agents exploit both kinds of reward: one for changing the environment, another for improving the internal model of the environment.

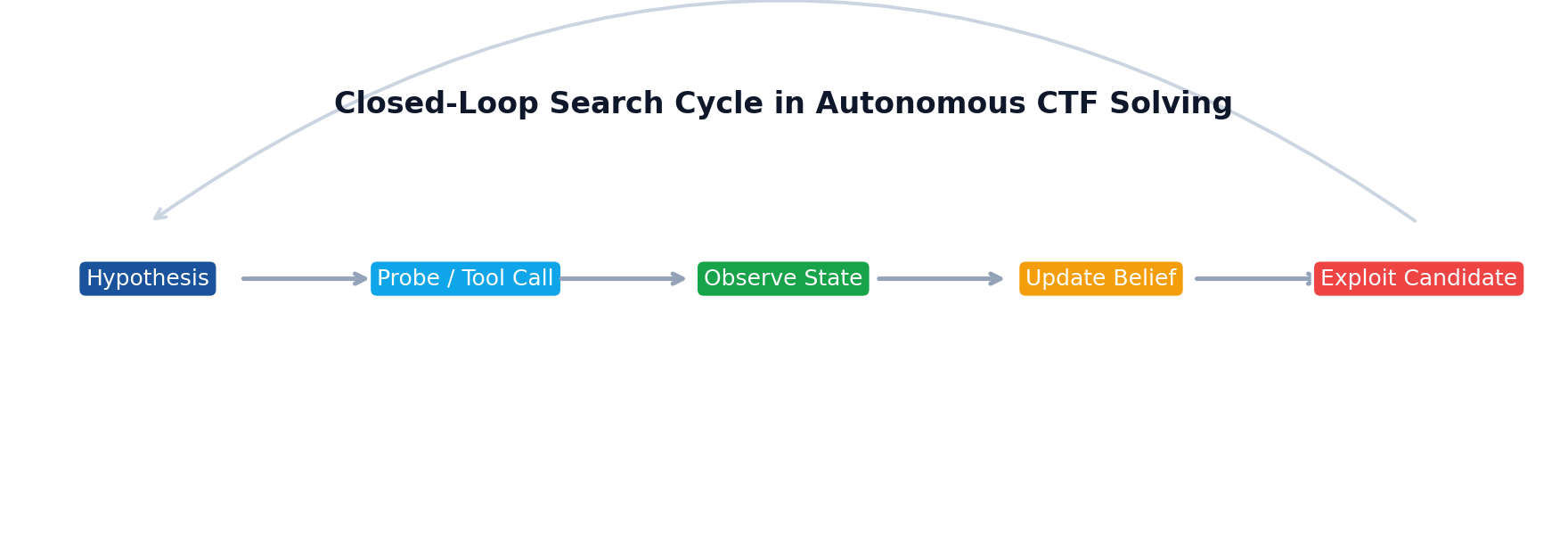

Tool use closes the loop between reasoning and reality

Autonomous hacking is impossible without tools because the target is not fully represented in the prompt. The agent must interrogate the world. Disassemblers, debuggers, emulators, packet capture tools, symbolic executors, fuzzers, browsers, HTTP clients, and custom scripts are not accessories; they are the sensory and actuation interface through which the policy becomes grounded.

The key systems insight is that tool use changes the computational graph. Instead of producing a single output token stream, the agent now executes a sequence of external programs whose results become new context. This creates an observe-act-update loop much closer to robotics than to standard text generation. The agent emits a command, receives noisy evidence, interprets it, and chooses the next command.

Execution feedback is especially valuable because it creates an objective correctness signal. If the exploit script segfaults before leaking libc, the environment says so. If the request triggers a 500 with a specific stack trace, that trace constrains the hypothesis space. If a Z3 query returns unsat, a whole family of candidate constructions can be discarded. This is a crucial difference from purely linguistic tasks, where correctness is often fuzzy and self-referential.

There is also a systems-engineering reason autonomous hacking agents can dominate CTFs once the loop is well designed: they can run thousands of micro-experiments without fatigue. Humans are excellent at abstraction but expensive in serial attention. An agent can enumerate payload variants, re-run network probes, collect traces, compare diffs, and maintain structured notes over many branches simultaneously. Given enough orchestration quality, this turns the environment into a massively amplifying error-correction mechanism.

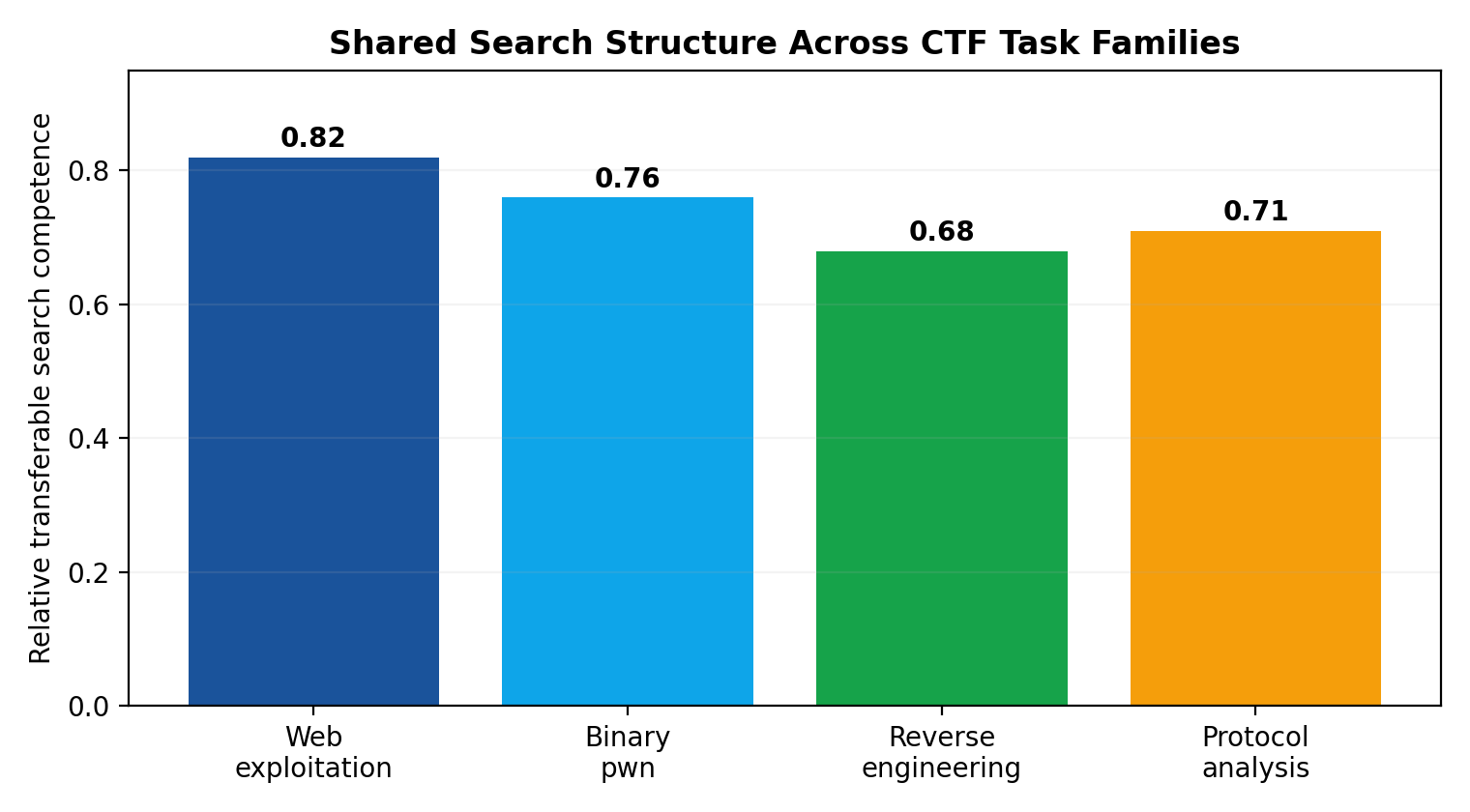

Why capability

transfers across heterogeneous challenge families

At first glance, pwn, web, reverse, crypto, forensics, and misc challenges look unrelated. In practice, strong autonomous agents transfer because the underlying computational motifs recur. The object being attacked changes, but the control loop does not.

Across challenge types, the agent repeatedly performs five operations. It constructs a latent model of the target, proposes interventions that discriminate among models, gathers external evidence, synthesizes candidate programs consistent with the evidence, and revises its search strategy based on measured progress. The tools differ—Ghidra for reversing, Burp-style probing for web, SageMath for crypto—but the architecture of competence is stable.

This is why improvements in one domain often spill into others. Better experiment planning helps both web enumeration and symbolic reverse engineering. Better memory for structured observations helps both heap exploitation and protocol analysis. Better exploit-program repair helps both pwntools scripts and cryptanalytic notebooks. In representation-learning terms, the agent develops reusable priors over state abstraction, decomposition, and validation.

A useful way to express this is to separate domain-specific operators from domain-general search policy. Let be the tool and action set for domain , and let denote the higher-level controller. Transfer occurs when learns reusable meta-skills—branching decisions, confidence calibration, evidence integration, repair heuristics—even though the low-level operators vary by domain. This is precisely why a well-designed autonomous agent can become broadly effective across heterogeneous CTF distributions without memorizing every exploit class in advance.

Where exploit search becomes brittle: specification drift, deceptive rewards, and frontier collapse

The same properties that make autonomous agents powerful also create brittle dynamics. Exploit search degrades when the agent’s internal specification diverges from the environment’s true constraints. This often begins with representation error. The model commits too early to “this is a format-string bug” or “this is RSA with weak padding” and then interprets ambiguous evidence through that lens. Search becomes locally coherent but globally wrong.

A second pathology is deceptive reward shaping. If the system overweights easy-to-trigger proxies—crashes, verbose errors, partial parser acceptance—it may optimize for states that look productive while moving away from the real exploit path. In optimization language, the reward landscape contains high local maxima corresponding to flashy but non-causal progress markers. The agent appears active and competent while its posterior over the true vulnerability mechanism drifts further from reality.

A third issue is frontier collapse. Many search systems rely on aggressive pruning to stay within budget. But if candidate scoring is even slightly miscalibrated, pruning can eliminate the only branch that contains the real exploit because that branch initially looks low probability or noisy. This is common in tasks where the decisive clue emerges only after an unusual sequence of probes. Humans often rescue such cases through intuition or stubbornness; agents need explicit mechanisms for diversity preservation, branch resurrection, or scheduled exploration.

There is also a subtle mismatch between symbolic constraints and physical execution. A payload may satisfy a solver-derived model yet fail because the model omitted I/O buffering, endianness in one stage, network fragmentation, race timing, ASLR entropy, or parser normalization. These are not generic “failures.” They are manifestations of under-modeled environment semantics. The more a challenge’s true behavior depends on such semantics, the more search can oscillate between plausible but invalid exploit constructions.

This brittleness suggests a concrete design principle: the agent’s state should include uncertainty not just over hypotheses, but over the adequacy of its own abstraction. Systems that track “how likely is this exploit path?” but not “how likely is my model missing a relevant variable?” are vulnerable to confident collapse.

Autonomous CTF solvers as a template for general-purpose agent design

The broader lesson is not that every AI system should become a hacking bot. It is that CTFs reveal a general architecture for autonomous intelligence under sparse supervision. Many real-world tasks—from software debugging to scientific discovery to infrastructure operations—share the same structure: a partially observed environment, a long-horizon objective, expensive mistakes, and success that depends on iterative experimentation rather than fluent first answers.

The agent template that emerges from CTFs has four parts. First, a generative prior proposes hypotheses, decompositions, and candidate programs. Second, external tools expose reality through measurement and intervention. Third, search allocates compute across alternative trajectories instead of committing to a single chain of thought. Fourth, shaped reward converts sparse end goals into actionable local progress signals.

This architecture is more general than offensive security. A debugging agent searching for the cause of a production incident performs the same loop. So does a data agent locating a schema mismatch across pipelines. So does a research agent deciding which experiments best disambiguate competing scientific explanations. In each case, the quality of the system depends less on raw eloquence and more on how effectively it couples hypothesis generation to execution-grounded feedback.

That is why autonomous hacking agents are such an important signal. They show that once language models are embedded in the right control system, capability begins to look less like “answering questions” and more like budgeted sequential decision-making over external state. The frontier is therefore not merely bigger models. It is better search policies, better reward design, better tool interfaces, better uncertainty tracking, and better mechanisms for preserving diversity when the first promising path is wrong.

If these systems now dominate CTF leaderboards, the reason is not mystical. CTFs reward exactly the combination of properties that agentic architectures are beginning to scale well: relentless experimentation, exploit synthesis under constraints, and optimization over intermediate evidence rather than final answers alone. That same combination is likely to define the next wave of high-value autonomous systems far beyond security.

References

- B. Katz, S. Upadhyay, and G. Barzilay, “Language Models as Zero-Shot Planners: Extracting Actionable Knowledge for Embodied Agents,” *arXiv preprint arXiv:2201.07207*, 2022.

- S. Yao, J. Zhao, D. Yu, N. Du, I. Shafran, K. Narasimhan, and Y. Cao, “ReAct: Synergizing Reasoning and Acting in Language Models,” *arXiv preprint arXiv:2210.03629*, 2022.

- Y. Chen, A. Efrat, O. Fried, and M. Naik, “InterCode: Standardizing and Benchmarking Interactive Coding with Execution Feedback,” *arXiv preprint arXiv:2403.07872*, 2024.

- Y. Li et al., “SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering,” *arXiv preprint arXiv:2405.15793*, 2024.

- Y. Li et al., “Competition-Level Code Generation with AlphaCode,” *Science*, vol. 378, no. 6624, pp. 1092–1097, 2022.

- A. Ellis, N. Kallus, A. T. Kalai, and R. R. R. et al., “Algorithms for Reinforcement Learning with Human Feedback and Preference Optimization,” survey literature relevant to reward design and shaped objectives.

- A. Solar-Lezama, “Program Synthesis by Sketching,” *PhD thesis*, University of California, Berkeley, 2008.

- S. Gulwani, O. Polozov, and R. Singh, “Program Synthesis,” *Foundations and Trends in Programming Languages*, vol. 4, no. 1–2, pp. 1–119, 2017.

- C. Cadar and K. Sen, “Symbolic Execution for Software Testing: Three Decades Later,” *Communications of the ACM*, vol. 56, no. 2, pp. 82–90, 2013.

- R. S. Sutton and A. G. Barto, *Reinforcement Learning: An Introduction*, 2nd ed. Cambridge, MA: MIT Press, 2018.

- D. Silver et al., “Mastering the Game of Go with Deep Neural Networks and Tree Search,” *Nature*, vol. 529, pp. 484–489, 2016.

- D. M. Blei, A. Kucukelbir, and J. D. McAuliffe, “Variational Inference: A Review for Statisticians,” *Journal of the American Statistical Association*, vol. 112, no. 518, pp. 859–877, 2017.

- Recent public reporting and competition commentary on autonomous AI systems outperforming the large majority of human participants in elite hacking competitions, used here only as the motivating news hook for the article.