Large language models (LLMs) are powerful, but they have two major limitations: their knowledge is frozen at the time of training, and they can't interact with the outside world. This means they can't access real-time data or perform actions like booking a meeting or updating a customer record.

The Model Context Protocol (MCP) is an open standard, introduced by Anthropic in November 2024, that is designed to address both limitations. It provides a standardized way for LLMs to communicate with external data sources, applications, and services. Rather than building a custom integration for every combination of AI model and external system, developers can use MCP as a universal interface, similar to how USB-C standardized hardware connectivity.

MCP builds on existing concepts like tool use and function calling, but wraps them in a consistent protocol. This allows LLMs to retrieve current information, perform actions, and access specialized capabilities not included in their original training.

Architecture and Components

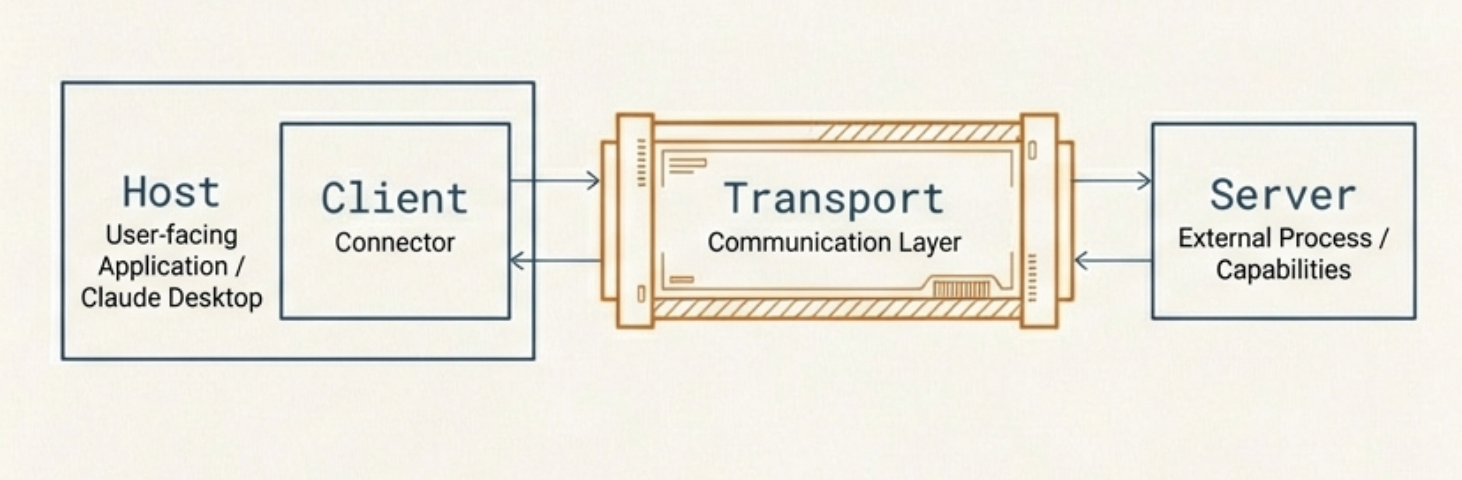

MCP follows a client-server architecture with four key components: the host, the client, the server, and the transport layer.

Host

The host is the application the user interacts with, such as Claude Desktop, an IDE plugin, or a custom AI application. It contains or connects to the LLM and orchestrates the overall workflow. When a user makes a request that requires external data or tools, the host coordinates with its MCP clients to fulfill it.

Client

The client lives inside the host and manages the connection to a single MCP server. Each client maintains a one-to-one connection with its server, so a host that uses several servers creates one client per server. The client translates the LLM's requests into MCP protocol messages and converts server responses back into a format the LLM can process. It also discovers which capabilities its server offers.
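As a rough illustration of the one-client-per-server rule and of capability discovery, the sketch below builds the JSON-RPC requests a client might send when it first connects. The class name `McpClientSketch`, the server names, and the `clientInfo` values are hypothetical. The method names `initialize` and `tools/list` and the version string `2025-03-26` follow my reading of the MCP specification and should be checked against the revision you target. The sketch only constructs messages; it does not open a connection, and it omits the `notifications/initialized` notification that a real client sends between the two requests.

```python
import itertools
import json


class McpClientSketch:
    """Hypothetical client that builds JSON-RPC messages for exactly one server."""

    def __init__(self, server_name):
        self.server_name = server_name
        self._ids = itertools.count(1)  # each request gets a fresh JSON-RPC id

    def _request(self, method, params=None):
        message = {"jsonrpc": "2.0", "id": next(self._ids), "method": method}
        if params is not None:
            message["params"] = params
        return message

    def initialize_request(self):
        # Opens the session and advertises what the client supports.
        return self._request("initialize", {
            "protocolVersion": "2025-03-26",  # assumed spec revision
            "capabilities": {},
            "clientInfo": {"name": "example-host", "version": "0.1.0"},
        })

    def list_tools_request(self):
        # Asks the server which tools it exposes.
        return self._request("tools/list")


# The host keeps one client per server (server names are made up).
clients = {name: McpClientSketch(name) for name in ("calendar", "crm")}

for client in clients.values():
    print(client.server_name, json.dumps(client.initialize_request()))
    print(client.server_name, json.dumps(client.list_tools_request()))
```

Keeping a separate client per server means request ids and negotiated capabilities never mix between servers.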

Server

The server is a separate process that exposes specific capabilities to the LLM. It connects to external systems like databases, APIs, or file systems, and presents their data and functionality to the client in a standardized format. Servers can expose three types of capabilities:

- Tools: Actions the LLM can invoke, such as querying a database or sending an email

- Resources: Data the LLM can read, such as file contents or API responses

- Prompts: Predefined templates that guide the LLM for specific tasks
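To make the three capability types concrete, here is a minimal sketch of the descriptors a server might return when a client lists them. The specific tool, resource, and prompt are invented for illustration. The field names (`inputSchema`, `uri`, `mimeType`, `arguments`) and the list methods follow my understanding of the MCP schema, so treat them as assumptions to verify against the specification.

```python
# A tool: an action, with a JSON Schema describing its arguments.
TOOLS = [{
    "name": "send_email",
    "description": "Send an email to a recipient.",
    "inputSchema": {
        "type": "object",
        "properties": {"to": {"type": "string"}, "body": {"type": "string"}},
        "required": ["to", "body"],
    },
}]

# A resource: readable data identified by a URI.
RESOURCES = [{
    "uri": "file:///notes/todo.txt",
    "name": "todo.txt",
    "mimeType": "text/plain",
}]

# A prompt: a reusable template with named arguments.
PROMPTS = [{
    "name": "summarize_ticket",
    "description": "Summarize a support ticket.",
    "arguments": [{"name": "ticket_id", "required": True}],
}]


def handle_list(method):
    """Return the catalogue that matches one of the three list methods."""
    catalogues = {
        "tools/list": {"tools": TOOLS},
        "resources/list": {"resources": RESOURCES},
        "prompts/list": {"prompts": PROMPTS},
    }
    return catalogues[method]


print(handle_list("tools/list")["tools"][0]["name"])
```

The host typically passes the tool descriptions, including each input schema, to the LLM so it knows which arguments a call requires.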

Transport Layer

The transport layer handles communication between the client and server using JSON-RPC 2.0 messages. MCP defines two standard transports:

- Standard input/output (stdio): Used for local servers running on the same machine. The client launches the server as a subprocess and communicates through stdin/stdout. This is simple and fast, with no network overhead.

- Streamable HTTP: Used for remote servers. The client sends HTTP POST requests to a single server endpoint, and the server can answer with a plain response or optionally stream it using Server-Sent Events (SSE). This replaced the earlier SSE-based transport in the March 2025 spec revision. Because it no longer requires a long-lived connection, it scales better and is simpler to deploy on serverless infrastructure.
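The sketch below shows what a JSON-RPC 2.0 exchange could look like on the stdio transport, assuming each message is written as one line of JSON terminated by a newline. The helper names `encode_message` and `decode_messages`, the tool name `query_database`, and its arguments are hypothetical. The `tools/call` method and the shape of the result reflect my reading of the specification rather than a verified schema.

```python
import json


def encode_message(message):
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    # json.dumps escapes newlines inside strings, so the line never contains a raw "\n".
    line = json.dumps(message, separators=(",", ":"))
    return (line + "\n").encode("utf-8")


def decode_messages(data):
    """Split a byte buffer into the complete JSON-RPC messages it contains."""
    lines = data.decode("utf-8").split("\n")
    return [json.loads(line) for line in lines if line.strip()]


# Client -> server: ask the server to run a tool.
request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "query_database", "arguments": {"sql": "SELECT 1"}},
}

# Server -> client: the result, matched to the request by its id.
response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"content": [{"type": "text", "text": "1"}], "isError": False},
}

wire = encode_message(request) + encode_message(response)
for message in decode_messages(wire):
    print(message)
```

With Streamable HTTP the same JSON-RPC objects would travel in HTTP request and response bodies instead of over stdin/stdout, so only the framing changes.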

How It Works

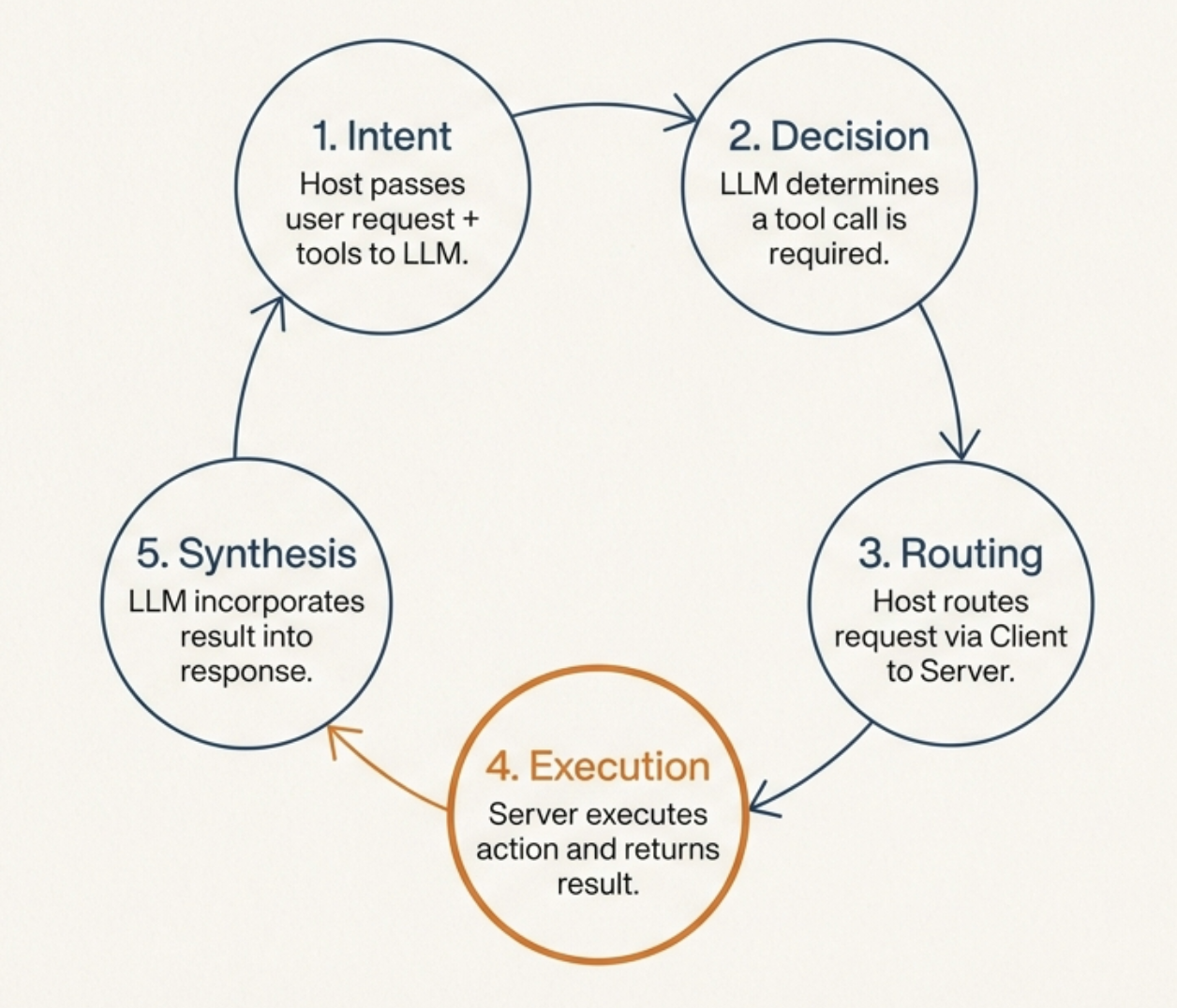

When a user sends a request to an MCP-enabled application, the flow works as follows:

- The host passes the user's request to the LLM along with descriptions of available tools from connected MCP servers.

- The LLM analyzes the request and determines whether it needs to call any tools.

- If a tool call is needed, the LLM returns a structured tool-call request to the host.

- The host routes the request through the appropriate MCP client to the corresponding MCP server.

- The server executes the action (e.g., queries a database) and returns the result.

- The result is passed back through the client to the LLM, which incorporates it into its response.

- The final response is presented to the user.

This loop can repeat multiple times within a single interaction if the LLM needs to call several tools or chain actions together.
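The flow above can be sketched as a small loop. Everything here is a stand-in: `fake_llm` is a scripted function rather than a real model, `FakeServer` represents an MCP server reached through its client, and the `get_weather` tool is invented for the example. The message format is also a simplification, not the format of any particular LLM API.

```python
def fake_llm(messages, tools):
    """Stand-in for a model: requests one tool call, then answers from the result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"tool_call": {"name": "get_weather", "arguments": {"city": "Paris"}}}
    return {"text": f"The weather is {tool_results[-1]['content']}."}


class FakeServer:
    """Stand-in for an MCP server reached through its client."""

    tools = [{"name": "get_weather", "description": "Current weather for a city."}]

    def call_tool(self, name, arguments):
        return f"sunny in {arguments['city']}"


def run_host(user_request, servers, llm, max_rounds=5):
    # Collect tool descriptions and remember which server owns each tool.
    owners = {tool["name"]: server for server in servers for tool in server.tools}
    tools = [tool for server in servers for tool in server.tools]
    messages = [{"role": "user", "content": user_request}]

    for _ in range(max_rounds):
        reply = llm(messages, tools)       # the LLM decides whether it needs a tool
        call = reply.get("tool_call")
        if call is None:
            return reply["text"]           # no tool needed: final response
        server = owners[call["name"]]      # route the call to the owning server
        result = server.call_tool(call["name"], call["arguments"])
        messages.append({"role": "tool", "content": result})  # feed the result back

    raise RuntimeError("tool-call limit reached")


answer = run_host("What's the weather in Paris?", [FakeServer()], fake_llm)
print(answer)  # The weather is sunny in Paris.
```

The `max_rounds` cap is a design choice of this sketch: it lets the loop repeat for chained tool calls while guaranteeing that it terminates.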

Why It Matters

Before MCP, every integration between an AI application and an external service required custom code. With N applications and M services, this created an N × M integration problem. MCP reduces this to N + M: each application implements the MCP client side once, each service implements an MCP server once, and they all work together. For example, 5 applications and 20 services would need up to 100 custom integrations without MCP, but only 25 protocol implementations with it.

This standardization benefits both sides. Developers can build an MCP server for their service once and have it work with any MCP-compatible AI application. AI applications, in turn, can draw on a growing ecosystem of servers without custom integration work.