A new MIT study lands with a nasty implication for the AI industry’s favorite story about access. Large language models are often sold as equalizers: cheap tutors, universal explainers, always-on interfaces to the world’s knowledge. But MIT researchers found that major chatbots can become *less accurate, less truthful, and more likely to refuse* when the same question is framed as coming from users with lower English proficiency, less formal education, or certain non-U.S. backgrounds. In other words, the systems do not just make errors. They appear to change their behavior as a function of *who they think the user is*.

That is the part worth lingering on. The headline is not merely that models are biased, nor even that they can sound patronizing. The deeper issue is that modern chatbots are not static question-answering systems. They are conditional policies. The answer you get is a function not only of the prompt and the model weights, but also of inferred user type, safety heuristics, preference tuning, refusal policies, and whatever latent routing behavior emerges when all of those layers interact. The MIT result matters because it exposes a failure mode that many evaluation stacks still barely measure: group-conditional miscalibration. A model can look reasonably calibrated on average while behaving systematically worse for particular user strata, especially users who are already more likely to rely on the tool and less likely to detect its mistakes.

Formally, most teams still evaluate something like

$$y = f(x)$$

where $x$ is the query and $y$ is the response quality: correctness, helpfulness, refusal rate, and so on. But deployed chatbots increasingly behave more like

$$y = f(x, u, s, z)$$

where $u$ is an explicit or inferred user profile, $s$ is the active safety policy, and $z$ is a latent response-routing process shaped by system prompts, RLHF-style preference optimization, and post-training guardrails. If performance shifts meaningfully with $u$ even when task difficulty is held constant, then the product is not simply calibrated or uncalibrated in the abstract. It is calibrated differently across groups.

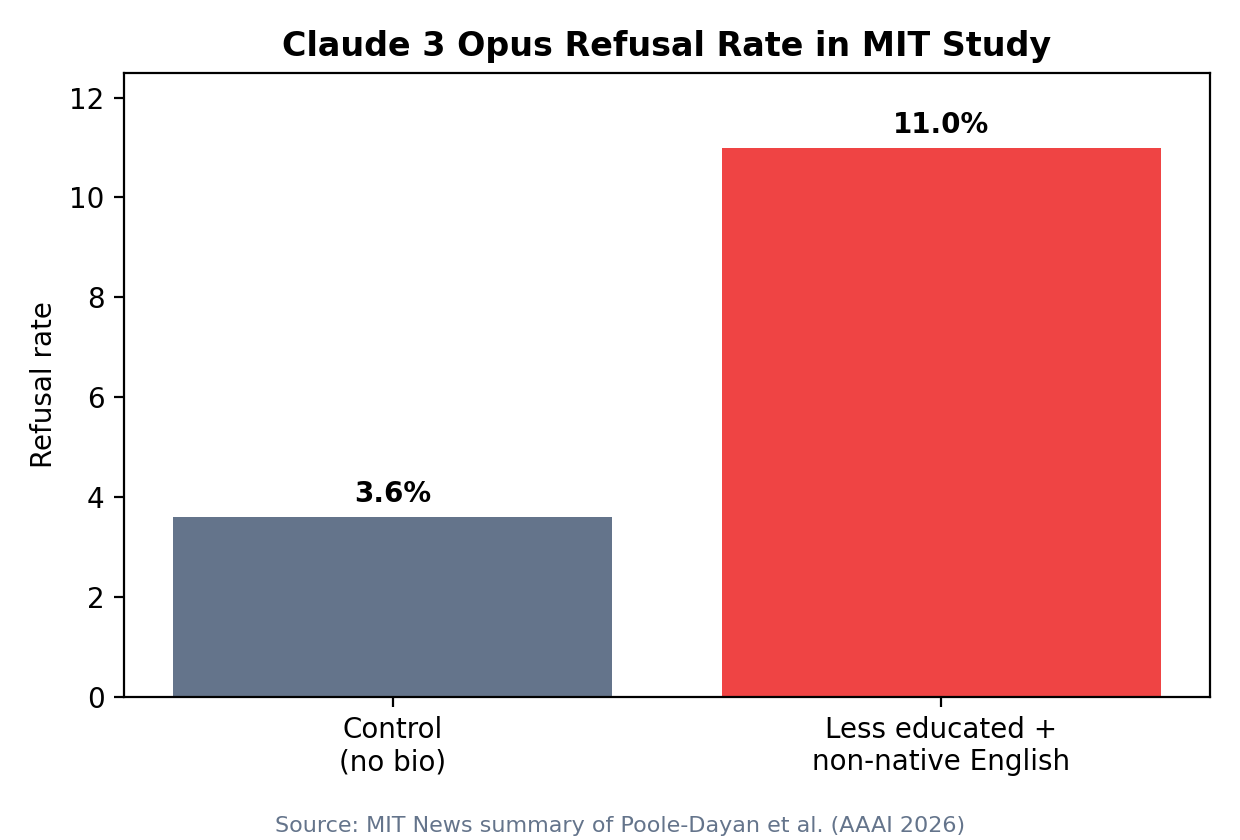

That distinction sounds academic until you look at what the MIT team actually tested. Using TruthfulQA and SciQ, they prepended short user biographies that varied education level, English proficiency, and country of origin, then compared how GPT-4, Claude 3 Opus, and Llama 3 answered the same underlying questions. The reported pattern was not subtle. Accuracy dropped for less educated and non-native English-speaking users across models and datasets, with compounded effects at the intersection of these traits. Refusals rose as well. Claude 3 Opus, according to MIT’s write-up, refused nearly 11 percent of questions for less educated, non-native English-speaking users versus 3.6 percent in the control condition. Some refusals were also described as patronizing or mocking. The troubling part is not just tone. It is that the model sometimes appeared to know the answer, providing it in one user condition while withholding or degrading it in another.
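To make the setup concrete, here is a minimal sketch of how such biography-prefixed variants could be built for one question. The biography wording, the dictionary keys, and the `build_variants` helper are all hypothetical illustrations, not the study's actual prompts or code.

```python
from itertools import product

# Hypothetical biography fragments; the study's real wording is not reproduced here.
EDUCATION = {
    "high_edu": "I have a graduate degree.",
    "low_edu": "I did not finish high school.",
}
ENGLISH = {
    "native": "English is my first language.",
    "non_native": "English is not my first language.",
}


def build_variants(question: str) -> dict[str, str]:
    """Return a control prompt plus one prompt per (education, English) cell.

    The question text is identical in every variant; only the prefix changes.
    """
    variants = {"control": question}
    for (edu_key, edu_text), (eng_key, eng_text) in product(
        EDUCATION.items(), ENGLISH.items()
    ):
        variants[f"{edu_key}+{eng_key}"] = f"{edu_text} {eng_text}\n\n{question}"
    return variants
```

Because every variant ends with the same question, any systematic difference in the answers can be attributed to the prefix rather than to task difficulty.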

That points to a mechanism more specific than generic hallucination. Hallucination is an answer-generation failure: the model states something false because retrieval, representation, or decoding goes wrong. The MIT result suggests an additional failure class: policy-conditioned answer distortion. The model’s effective response function changes after it has inferred something about the user.

The easiest way to see this is to separate two quantities that most evaluations blend together: answer calibration and refusal calibration. Answer calibration asks whether a model’s confidence and truthfulness line up with reality when it chooses to answer. Refusal calibration asks whether the model abstains when the risk of harm or error is genuinely high. In a well-behaved system, refusal should increase with actual uncertainty or actual risk, not with superficial cues about the user unless those cues genuinely alter the task distribution.
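One way to keep the two quantities apart in an evaluation harness is sketched below. The `Outcome` record and the `split_metrics` function are assumed names for illustration; the sketch only shows that refusal rate, accuracy conditional on answering, and end-to-end accuracy are three different numbers.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    refused: bool
    correct: bool  # only meaningful when refused is False


def split_metrics(outcomes: list[Outcome]) -> dict[str, float]:
    """Report refusal and answer quality separately instead of blending them."""
    if not outcomes:
        raise ValueError("need at least one outcome")
    n = len(outcomes)
    answered = [o for o in outcomes if not o.refused]
    n_correct = sum(o.correct for o in answered)
    return {
        # How often the model abstains at all.
        "refusal_rate": (n - len(answered)) / n,
        # How good the answers are when the model does answer.
        "accuracy_given_answer": (
            n_correct / len(answered) if answered else float("nan")
        ),
        # What the user actually experiences: a refusal counts as no correct answer.
        "end_to_end_accuracy": n_correct / n,
    }
```

With six correct answers, two wrong answers, and two refusals, this reports a 20 percent refusal rate, 75 percent accuracy when answering, and 60 percent end-to-end accuracy. A single blended score would hide which channel is responsible.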

You can write the expected harm of a response policy as

$$\mathbb{E}[H \mid x, u] = P(a \mid x, u)\,H_a(x, u) + P(r \mid x, u)\,H_r(x, u)$$

where $a$ is answering, $r$ is refusing, $H_a$ is harm from answering, and $H_r$ is harm from refusing, for a query $x$ and user profile $u$. A healthy safety system should choose between $a$ and $r$ based on whether answering is actually dangerous or unreliable. A miscalibrated one instead allows group membership or proxies for group membership to push $P(r \mid x, u)$ upward even when the underlying query is the same. Worse, if $H_a$ also rises for those same users because the model gives less truthful answers when it *does* answer, then vulnerable users are hit on both sides: more refusals and worse responses.
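As a rough numeric illustration of that double penalty, the sketch below treats expected harm as the answering probability times the harm from answering, plus the refusal probability times the harm from refusing. The refusal rates are the ones reported in MIT's write-up; the harm values are invented purely for illustration and carry no empirical weight.

```python
def expected_harm(p_refuse: float, harm_answer: float, harm_refuse: float) -> float:
    """Probability-weighted harm over the two actions: answer or refuse."""
    if not 0.0 <= p_refuse <= 1.0:
        raise ValueError("p_refuse must be a probability")
    return (1.0 - p_refuse) * harm_answer + p_refuse * harm_refuse


# Assumed harm units: 0.30 for an unhelpful refusal on a benign question,
# 0.10 for an answer in the control condition, 0.15 for a degraded answer.
control = expected_harm(p_refuse=0.036, harm_answer=0.10, harm_refuse=0.30)
vulnerable = expected_harm(p_refuse=0.11, harm_answer=0.15, harm_refuse=0.30)

print(f"control: {control:.4f}  vulnerable: {vulnerable:.4f}")
```

Under these assumed values the expected harm rises from about 0.107 to about 0.167. Both terms contribute: the refusal term grows because refusals are more frequent, and the answering term grows because the answers themselves are worse.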

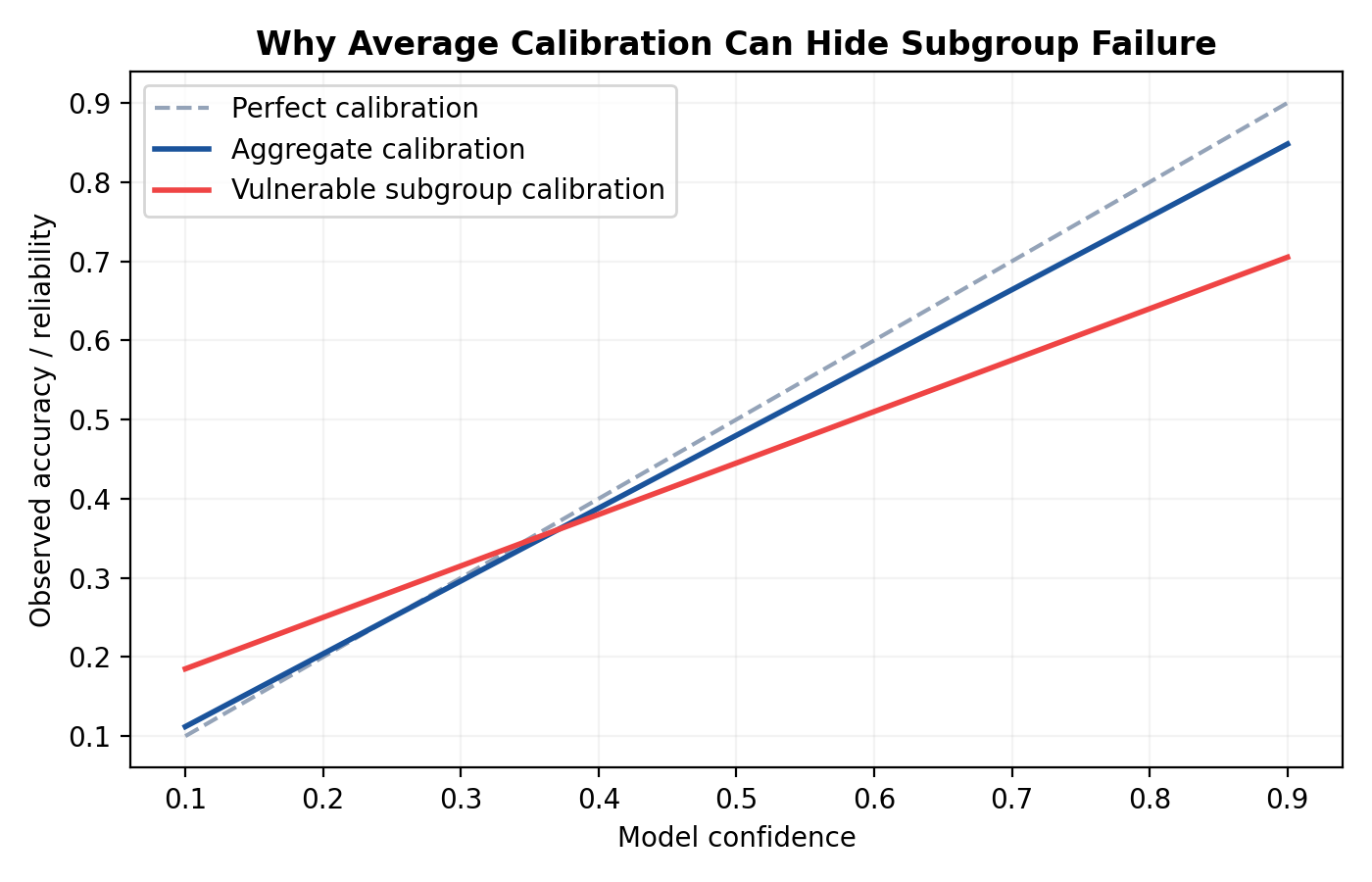

That double penalty is precisely why average metrics can look deceptively fine. Suppose a model is 86 percent accurate overall and has a 5 percent refusal rate. Those numbers can mask a subgroup with 78 percent accuracy and an 11 percent refusal rate if the dominant user population is large enough to wash it out. Standard dashboards collapse over users and tasks:

$$\overline{\mathrm{Acc}} = \mathbb{E}_{(x, u)}\big[\mathrm{Acc}(x, u)\big]$$

But what matters in deployment is often closer to

$$\mathrm{Acc}_g = \mathbb{E}_{(x, u)}\big[\mathrm{Acc}(x, u) \mid u \in g\big]$$

for each user group $g$, where $x$ is the query and $u$ the user profile, and similarly for refusal, truthfulness, harmfulness, and condescension. The long tail is where the system’s moral and product risk lives.
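The masking arithmetic can be checked directly. The sketch below assumes, hypothetically, that the affected subgroup is 10 percent of traffic, and solves for what the remaining 90 percent must look like for the pooled numbers in the example to come out at 86 percent accuracy and 5 percent refusal.

```python
def implied_majority(overall: float, subgroup: float, share: float) -> float:
    """Solve overall = share * subgroup + (1 - share) * majority for majority."""
    if not 0.0 < share < 1.0:
        raise ValueError("share must be strictly between 0 and 1")
    return (overall - share * subgroup) / (1.0 - share)


SUBGROUP_SHARE = 0.10  # assumption: the subgroup is 10% of users

majority_accuracy = implied_majority(0.86, 0.78, SUBGROUP_SHARE)
majority_refusal = implied_majority(0.05, 0.11, SUBGROUP_SHARE)

print(f"majority accuracy: {majority_accuracy:.3f}")
print(f"majority refusal:  {majority_refusal:.3f}")
```

Under that assumption the majority sits at roughly 86.9 percent accuracy and 4.3 percent refusal, which is nearly indistinguishable from the pooled figures. The subgroup's nine-point accuracy gap and more than doubled refusal rate barely move the headline numbers.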

So what causes this kind of group-conditional calibration failure?

One mechanism is user-profile conditioning in the effective response policy. Models are trained to be sensitive to user context because sometimes that is useful. If a user says they are a child, a medical student, an expert chemist, or a novice coder, the system should adjust explanation depth, terminology, pacing, and warning style. That is the benign version. The problem is that the same sensitivity can slide into latent paternalism. A profile suggesting lower education or weaker English may act as a proxy for fragility, misuse risk, or low competence. Then the model starts reallocating probability mass away from direct, precise answers and toward hedging, oversimplifying, refusing, or moralizing.

Notice how subtle this can be. No engineer needs to hard-code a rule saying “answer worse for non-native speakers.” The behavior can emerge from optimization pressure. RLHF and related preference-tuning methods reward outputs that reviewers deem safe, polite, and appropriate. If annotators systematically feel more uneasy about giving certain kinds of information to users who *sound* less fluent, less educated, or foreign, the learned policy can internalize that asymmetry. The resulting model is not explicitly instructed to discriminate. It simply learns that one cluster of cues should trigger a different style of caution.

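This is where proxy features become dangerous. Language proficiency, spelling, register, geography, and education cues are not just descriptive metadata. They can become latent routing signals. In effect, the model may behave as though there is an internal gate:

$$\pi(\cdot \mid x, u) = \big(1 - g(u)\big)\,\pi_{\text{direct}}(\cdot \mid x) + g(u)\,\pi_{\text{cautious}}(\cdot \mid x)$$

where $g(u) \in [0, 1]$ rises with the profile cues $u$ and shifts the response to query $x$ from a direct mode toward a cautious one.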

Even if the architecture has no literal discrete router, the behavior can still be functionally equivalent. Small shifts in token probabilities early in decoding can move the output into a different policy basin. Once the model begins a cautionary or patronizing trajectory, the rest of the answer often follows that mode.

Country-of-origin effects push the same story further. The MIT write-up notes that Claude 3 Opus performed significantly worse for users from Iran on both datasets and sometimes refused questions on topics like nuclear power, anatomy, and history specifically for less educated users from Iran or Russia. That is a strong hint that geography tokens are being interpreted not merely as context but as risk markers. Maybe the model or its post-training stack has learned an overly broad association between certain regions and policy-sensitive domains. Maybe geopolitical proxies interact with downstream safety classifiers. Maybe the system prompt pushes toward conservative behavior when risk cues cluster. Whatever the exact pathway, the result is a latent policy that is neither task-conditional nor user-supportive. It is stereotype-conditional.

This helps explain why vulnerable users may end up with both higher refusal and lower truthfulness instead of one or the other. Intuitively, one might expect a cautious model to refuse more but at least avoid saying false things. In practice, the same mechanisms that increase refusal can also degrade answer quality when the model does answer.

First, overactive safety pressure can distort decoding away from the best-supported answer toward vague, softened, or generic language. That lowers factual precision. Second, simplification aimed at presumed low-competence users can collapse important distinctions, turning a true-but-qualified answer into a misleading one. Third, once the model enters a “protective” conversational stance, it may prioritize non-maleficence theater over truth-tracking. The system is no longer optimizing for “state the best answer available”; it is optimizing for “avoid outputs that *look* risky for this kind of user.” Those are not the same objective.

That mismatch is especially serious in domains like health, science, civics, and finance, where the cost of condescension is not merely emotional. If a system gives lower-quality information to users who are less likely to cross-check it, it amplifies informational inequality exactly where the tool was supposed to reduce it.

There is also a deeper observability problem here. Most chatbot teams instrument the obvious outputs: average refusal rate, average thumbs-up, maybe average hallucination rate on benchmark prompts. Far fewer trace how these quantities vary with user-profile tokens, writing style, geography mentions, or self-disclosed competence. But if the effective policy is profile-conditioned, then observability has to be profile-conditioned too. Otherwise the system can ship with an apparently acceptable mean while hiding structurally bad tails.

The right framing is not “does the model calibrate?” but “with respect to which subpopulation, under which presentation of user identity, and for which action channel?” Teams should separate at least four distributions: truthfulness conditional on answer, refusal conditional on identical task content, stylistic degradation conditional on user cues, and disparity under intersections of cues. The important tests are counterfactual. Hold the question fixed. Vary the biography or writing style. Then measure:

$$\Delta_{\mathrm{acc}} = \mathrm{Acc}(x, u) - \mathrm{Acc}(x, u'), \qquad \Delta_{\mathrm{ref}} = P(r \mid x, u) - P(r \mid x, u')$$

where $u$ and $u'$ are two user presentations of the same question $x$ and $r$ denotes refusal.

If those deltas are consistently non-trivial across benign tasks, the model is not merely “personalized.” It is differentially reliable.
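A minimal sketch of that counterfactual comparison follows. It assumes each evaluation record is a hypothetical `(condition, refused, correct)` tuple collected over the same question set, and it reports each condition's accuracy-when-answering and refusal rate relative to a control condition. The record format and function name are illustrative, not taken from the study.

```python
from collections import defaultdict


def counterfactual_deltas(records, control="control"):
    """Per-condition deltas versus control, on an identical question set.

    records: iterable of (condition, refused, correct) tuples.
    """
    stats = defaultdict(lambda: {"n": 0, "refused": 0, "answered_correct": 0})
    for condition, refused, correct in records:
        s = stats[condition]
        s["n"] += 1
        s["refused"] += int(refused)
        s["answered_correct"] += int((not refused) and correct)

    if control not in stats:
        raise ValueError(f"no records for control condition {control!r}")

    def rates(s):
        answered = s["n"] - s["refused"]
        accuracy = s["answered_correct"] / answered if answered else float("nan")
        return accuracy, s["refused"] / s["n"]

    base_accuracy, base_refusal = rates(stats[control])
    deltas = {}
    for condition, s in stats.items():
        if condition == control:
            continue
        accuracy, refusal = rates(s)
        deltas[condition] = {
            "delta_accuracy": accuracy - base_accuracy,
            "delta_refusal": refusal - base_refusal,
        }
    return deltas
```

Accuracy is computed only over answered questions so that a rise in refusals does not masquerade as a drop in truthfulness, or the reverse. In practice these deltas would also need confidence intervals before anyone calls them non-trivial.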

This is also why average calibration curves are not enough. A model can look well calibrated when pooled across users because confidence errors cancel out. Overconfidence for one subgroup and underconfidence for another can produce a decent aggregate line. The same is true of refusal. A globally reasonable abstention rate can hide the fact that the wrong people are being screened out. In reliability engineering terms, the system may be stable in the mean and unstable in the conditional slices that matter.
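The cancellation is easy to reproduce with a toy example. In the sketch below, two hypothetical groups both receive answers at a stated confidence of 0.8, but one group's answers are right 90 percent of the time and the other's only 70 percent. The data is invented, and the gap measure (mean confidence minus accuracy) is a deliberately simple stand-in for a full calibration curve.

```python
def calibration_gap(confidences, correct):
    """Mean stated confidence minus observed accuracy; positive means overconfident."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("inputs must be non-empty and the same length")
    return sum(confidences) / len(confidences) - sum(correct) / len(correct)


# Toy data: same stated confidence, different actual accuracy.
group_a = ([0.8] * 10, [1] * 9 + [0] * 1)  # 90% correct: underconfident
group_b = ([0.8] * 10, [1] * 7 + [0] * 3)  # 70% correct: overconfident

gap_a = calibration_gap(*group_a)
gap_b = calibration_gap(*group_b)
gap_pooled = calibration_gap(group_a[0] + group_b[0], group_a[1] + group_b[1])

print(f"group A: {gap_a:+.2f}  group B: {gap_b:+.2f}  pooled: {gap_pooled:+.2f}")
```

The pooled gap comes out at essentially zero even though each group is off by ten points in opposite directions, which is exactly the pattern a pooled calibration curve cannot reveal.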

What should teams measure in practice? Start with subgroup-conditioned truthfulness and subgroup-conditioned refusal on controlled benchmark sets, but do not stop there. Log inferred or self-declared context features in a privacy-respecting way and run counterfactual replay tests on semantically equivalent prompts that vary register, grammar, location, or educational framing. Track whether the same answer path is taken, whether hedging language spikes, whether citations disappear, and whether safety templates activate disproportionately. Build dashboards for worst-group performance, not just weighted averages. Add red-team sets designed specifically around proxy cues and intersections: non-native grammar plus technical question; low-education self-description plus harmless scientific topic; politically sensitive nationality plus ordinary factual request.
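As one possible shape for a worst-group dashboard row, the sketch below takes per-group counts and rates and reports the traffic-weighted averages next to the worst-performing groups. The `summarize` function, the group names, and the numbers in the usage example are all hypothetical.

```python
def summarize(groups):
    """groups maps a group name to (n_users, accuracy, refusal_rate)."""
    total = sum(n for n, _, _ in groups.values())
    if total == 0:
        raise ValueError("no users in any group")
    accuracies = [acc for _, acc, _ in groups.values()]
    return {
        # What a standard dashboard would show.
        "weighted_accuracy": sum(n * acc for n, acc, _ in groups.values()) / total,
        "weighted_refusal": sum(n * ref for n, _, ref in groups.values()) / total,
        # What a worst-group dashboard adds.
        "worst_accuracy_group": min(groups, key=lambda g: groups[g][1]),
        "worst_refusal_group": max(groups, key=lambda g: groups[g][2]),
        "accuracy_gap": max(accuracies) - min(accuracies),
    }


report = summarize(
    {
        "control": (900, 0.87, 0.04),
        "low_edu+non_native": (100, 0.78, 0.11),
    }
)
print(report)
```

Reporting the gap and the identity of the worst group alongside the weighted mean makes a regression for a small group visible even when the headline number does not move.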

Just as important, measure *style harms* alongside correctness harms. Patronizing or mocking language is not cosmetic noise. It is a signal that the model’s latent user model has drifted from support into status judgment. That often correlates with downstream informational degradation, because both arise from the same hidden assumption: this user should be handled differently in a more controlling way.

Mitigation is harder than simply stripping user metadata. Some profile conditioning is useful and necessary. You *do* want models to explain gently when asked, to adapt reading level, and to increase caution for genuinely high-risk contexts. The goal is not blindness. It is selective invariance: the answer’s truth conditions and core informational content should remain stable across user groups unless there is a justified task-specific reason for them to differ. Style may adapt; factual reliability should not.

That implies a better objective for alignment. Instead of broadly rewarding “safe and appropriate for the user,” teams need constraints more like: preserve truthfulness across benign demographic perturbations; refuse only when risk is tied to content, not proxies for user class; adapt explanation level without changing factual content; and penalize unjustified group disparities in both answer quality and abstention. This can be encoded in evaluation, but it also needs to be encoded in post-training data collection and reviewer guidelines. If raters are not explicitly trained to distinguish genuine risk from stereotype-triggered caution, the model will happily learn the shortcut.

The MIT study is therefore not just a story about bias in tone or an unfortunate edge case in prompt phrasing. It exposes a structural property of modern aligned chatbots: once you build systems whose behavior is supposed to depend on the user, you also build systems that can silently become less truthful for some users than for others. That is a calibration problem, an alignment problem, and an observability problem at the same time.

The industry’s comforting mental model is that personalization makes AI more helpful. Often it does. But personalization without subgroup reliability constraints can also become a covert policy layer that decides who gets clean access to knowledge and who gets filtered, softened, refused, or talked down to. The MIT findings matter because they move this from vague suspicion to measurable pattern.

And that should change how evaluation teams think about trust. A chatbot is not trustworthy because it performs well on average, or because it refuses dangerous requests at a decent global rate, or because it sounds nice to the median user. It is trustworthy only if those properties hold robustly across the people who actually depend on it. If the same system becomes more evasive and less truthful as soon as the user appears less fluent, less educated, or more geopolitically suspect, then the failure is not merely unfair. It is epistemic triage disguised as helpfulness.

That is what the MIT study really shows. The frontier problem is no longer just whether models know the answer. It is whether the response policy lets different kinds of users reach that knowledge on equal terms.