Abstract

Tokenization is the first transformation applied to raw text before any neural computation occurs, yet its influence on model behavior receives disproportionately little scrutiny relative to architectural or optimization choices. This post formalizes the two dominant subword tokenization algorithms — Byte-Pair Encoding (BPE) and Unigram Language Model — and compares them through concrete, small-scale worked examples. BPE constructs a vocabulary bottom-up via deterministic, greedy merge operations; Unigram begins with a large candidate vocabulary and prunes it top-down by optimizing a marginal likelihood objective via Expectation-Maximization. These distinct inductive biases produce measurably different subword segmentations, which propagate into differences in rare-word handling, morphological sensitivity, sequence length distributions, and ultimately embedding quality and downstream task performance. Practical guidance for vocabulary selection is offered based on these findings.

1. Introduction

Consider the word *"unhappiness"*. One tokenizer might segment it as ["un", "happiness"], another as ["un", "hap", "pi", "ness"], and a third as ["unhappiness"] as a single token. Each segmentation implies a different compositional structure that the model must learn from. The first preserves morphological boundaries; the second fragments them; the third memorizes the surface form wholesale. None of these choices is neutral — each one quietly constrains what the model can generalize.

The two algorithms that dominate modern subword tokenization are Byte-Pair Encoding (BPE), introduced to NLP by Sennrich et al. (2016), and the Unigram Language Model, proposed by Kudo (2018). Despite powering nearly all large language models — GPT-family models use BPE; SentencePiece offers both — these algorithms are often treated as interchangeable preprocessing steps. They are not.

This post formalizes both algorithms, walks through small worked examples to build intuition, and traces how their distinct segmentation behaviors affect downstream model performance.

2. BPE Formalized

2.1 Algorithm as Iterative Optimization

Let $\mathcal{D}$ be a training corpus represented as a sequence of words, each decomposed into characters. Define the initial vocabulary $\mathcal{V}_0$ as the set of all unique characters (plus a special end-of-word symbol). At each step $t = 1, \dots, T$, BPE identifies the most frequent adjacent symbol pair and merges it into a new symbol.

Formally, let $f_t(a, b)$ denote the frequency of the bigram $(a, b)$ across all current tokenizations of the corpus. The merge rule at step $t$ is:

$$(a^*, b^*) = \arg\max_{(a, b)} f_t(a, b)$$

A new symbol $a^*b^*$ (the concatenation) is added to the vocabulary:

$$\mathcal{V}_t = \mathcal{V}_{t-1} \cup \{a^*b^*\}$$

and every occurrence of the bigram $(a^*, b^*)$ in the corpus is replaced by $a^*b^*$. This process repeats for a predetermined number of merges $T$, yielding a final vocabulary of size $|\mathcal{V}_0| + T$.

Key properties:

- Deterministic and greedy. Given a fixed tie-breaking rule, the merge order is fully determined by corpus statistics. No global objective is optimized; each step only maximizes local pair frequency.

- Bottom-up construction. The vocabulary grows from characters to increasingly long subwords.

- Fixed segmentation at inference. Given a trained merge table, segmentation of new text applies the learned merges in priority order, producing a single canonical segmentation for any input string.
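The training loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `learn_bpe` is hypothetical, the end-of-word symbol is left out to keep the output readable, and ties are broken lexicographically, which is an assumption rather than part of the algorithm's definition. The word counts match the toy corpus of Section 2.2.

```python
from collections import Counter

def learn_bpe(word_counts, num_merges):
    """Learn BPE merges from {word: count}; returns the ordered merge list."""
    # Each word starts as a tuple of single-character symbols.
    corpus = {tuple(word): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        # Count every adjacent symbol pair, weighted by word frequency.
        pair_counts = Counter()
        for symbols, count in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += count
        if not pair_counts:
            break  # every word is already a single symbol
        # Greedy step: highest count wins; ties go to the lexicographically
        # smallest pair (an assumed tie-breaking rule).
        best = min(pair_counts, key=lambda p: (-pair_counts[p], p))
        merges.append(best)
        # Replace each occurrence of the chosen pair with the merged symbol.
        new_corpus = {}
        for symbols, count in corpus.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            key = tuple(merged)
            new_corpus[key] = new_corpus.get(key, 0) + count
        corpus = new_corpus
    return merges

toy_counts = {"hug": 10, "hugs": 5, "bug": 8, "bugs": 3, "mug": 2}
merges = learn_bpe(toy_counts, 3)
print(merges)  # [('u', 'g'), ('h', 'ug'), ('b', 'ug')]
```

Recounting all pairs on every iteration is quadratic in practice; production implementations update pair counts incrementally, but the merge order they produce is the same.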

2.2 Worked Example

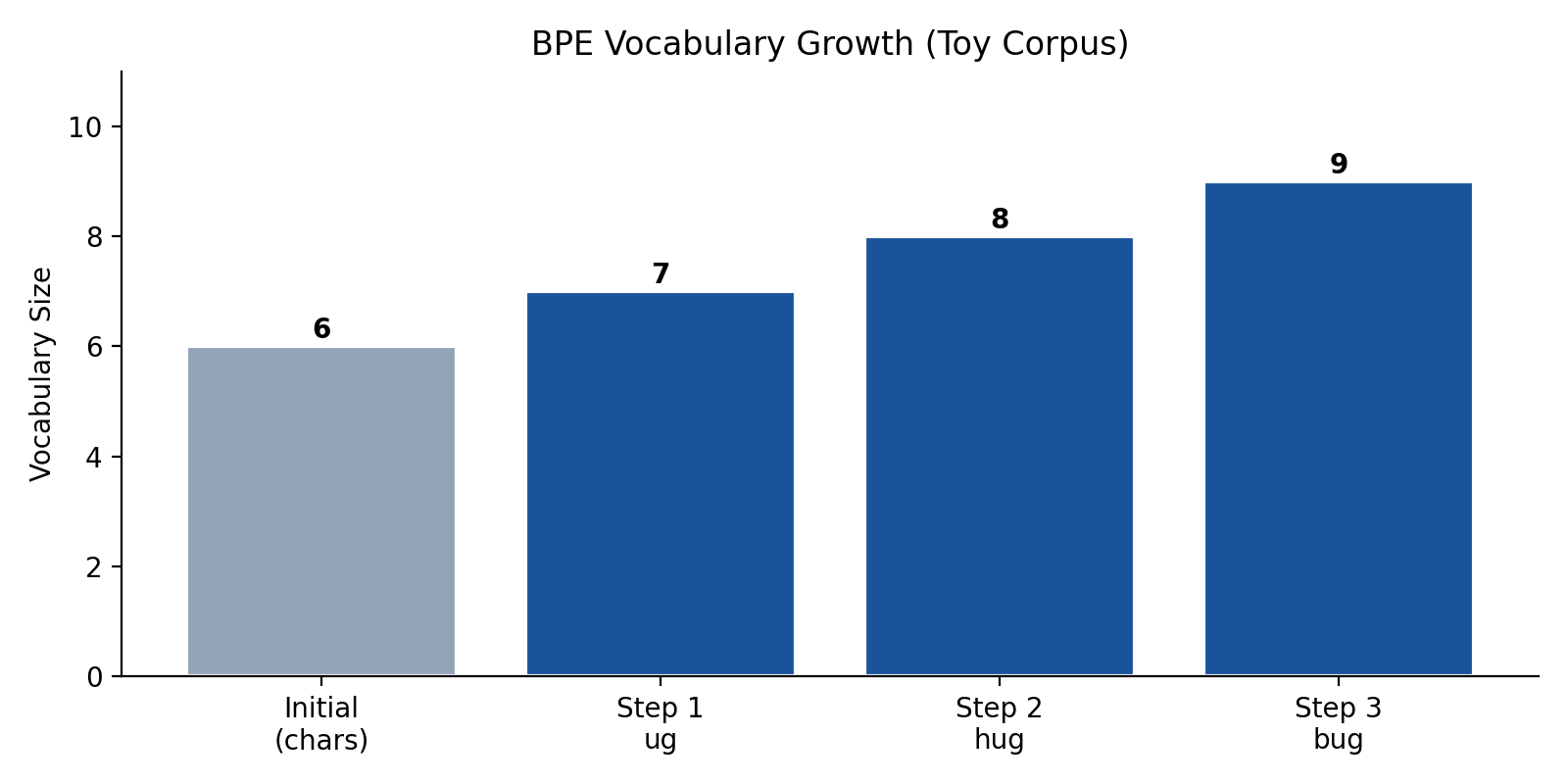

Consider a toy corpus with word frequencies:

- h u g: frequency 10

- h u g s: frequency 5

- b u g: frequency 8

- b u g s: frequency 3

- m u g: frequency 2

Initial vocabulary: {h, u, g, s, b, m} (the end-of-word symbol is omitted in this example for brevity)

Step 1. The pair (u, g) has the highest frequency (28 total occurrences: 10 + 5 + 8 + 3 + 2, since it appears in every word). Merge: u g → ug. Corpus becomes: h ug (10), h ug s (5), b ug (8), b ug s (3), m ug (2).

Step 2. Now (h, ug) leads at 15 (10 + 5). Merge: h ug → hug.

Step 3. Next is (b, ug) at 11 (8 + 3). Merge: b ug → bug.

After three merges, the vocabulary is {h, u, g, s, b, m, ug, hug, bug}. Frequent words like *"hug"* and *"bug"* have been collapsed to single tokens, while *"mug"* is still segmented as ["m", "ug"] and an unseen word such as *"mugs"* would be segmented as ["m", "ug", "s"].

Note the asymmetry: BPE has no mechanism to discover that ug is a shared morphological component — it merely reflects co-occurrence frequency. The suffix -s remains a separate token not because of linguistic analysis, but because (ug, s) lost the frequency race.
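To make the inference side concrete, here is a small sketch of how a learned merge table might be applied to a new word. The function name `bpe_encode` is hypothetical, and the strategy shown (repeatedly merge the adjacent pair with the earliest merge rank) is one common way to apply merges in priority order; real tokenizers differ in details such as end-of-word handling and caching.

```python
def bpe_encode(word, merges):
    """Segment one word by applying learned merges in priority order."""
    # Earlier merges get lower rank, i.e. higher priority.
    rank = {pair: i for i, pair in enumerate(merges)}
    symbols = list(word)
    while len(symbols) > 1:
        # Collect every adjacent pair that appears in the merge table.
        candidates = [
            (rank[pair], i)
            for i, pair in enumerate(zip(symbols, symbols[1:]))
            if pair in rank
        ]
        if not candidates:
            break  # no applicable merge remains
        _, i = min(candidates)  # best rank, leftmost position on ties
        symbols[i:i + 2] = [symbols[i] + symbols[i + 1]]
    return symbols

# Merge table from the three steps of the worked example.
merges = [("u", "g"), ("h", "ug"), ("b", "ug")]
print(bpe_encode("mugs", merges))  # ['m', 'ug', 's']
print(bpe_encode("hugs", merges))  # ['hug', 's']
```

Because the merge table is fixed, the same input always yields the same tokens, which is the canonical-segmentation property noted in Section 2.1.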

3. Unigram Formalized

3.1 The Probabilistic Framework

The Unigram model takes a fundamentally different approach. Instead of building a vocabulary bottom-up, it starts with a large seed vocabulary (e.g., all substrings up to some length) and prunes it down to a target size by optimizing a likelihood objective.

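Given a vocabulary $\mathcal{V}$ with associated probabilities $p(x)$ for each token $x \in \mathcal{V}$, where $\sum_{x \in \mathcal{V}} p(x) = 1$, the Unigram model assumes tokens are generated independently. For a sentence $X$, the set of all valid segmentations into tokens from $\mathcal{V}$ is denoted $\mathcal{S}(X)$. Each segmentation $\mathbf{x} = (x_1, \dots, x_M) \in \mathcal{S}(X)$ has probability:

$$P(\mathbf{x}) = \prod_{i=1}^{M} p(x_i)$$

The most probable segmentation is:

$$\mathbf{x}^* = \arg\max_{\mathbf{x} \in \mathcal{S}(X)} P(\mathbf{x})$$

which is found efficiently via the Viterbi algorithm on a token lattice.

The corpus-level log-likelihood, marginalizing over all possible segmentations, is:

$$\mathcal{L} = \sum_{s=1}^{|\mathcal{D}|} \log P(X^{(s)}) = \sum_{s=1}^{|\mathcal{D}|} \log \sum_{\mathbf{x} \in \mathcal{S}(X^{(s)})} P(\mathbf{x})$$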
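The inner sum over segmentations looks exponential, but it can be computed with a simple forward recursion over prefix positions. The sketch below assumes a word-frequency corpus (each word treated as a "sentence") and uses hypothetical token probabilities chosen only for illustration. It works with raw probabilities for readability; an implementation meant for long inputs would use log-space arithmetic instead.

```python
import math

def log_marginal(text, probs):
    """log P(X): log of the summed probability of all segmentations of text."""
    n = len(text)
    # alpha[i] is the total probability of all segmentations of text[:i].
    alpha = [1.0] + [0.0] * n
    for end in range(1, n + 1):
        for start in range(end):
            piece = text[start:end]
            if piece in probs:
                alpha[end] += alpha[start] * probs[piece]
    return math.log(alpha[n]) if alpha[n] > 0 else -math.inf

def corpus_log_likelihood(word_counts, probs):
    """Sum of log-marginals, weighting each word by its corpus count."""
    return sum(c * log_marginal(w, probs) for w, c in word_counts.items())

# Hypothetical token probabilities (they sum to 1), for illustration only.
probs = {"h": 0.05, "u": 0.05, "g": 0.05, "s": 0.15, "ug": 0.3, "hug": 0.4}

# "hug" has three segmentations: hug, h+ug, h+u+g.
p_hug = math.exp(log_marginal("hug", probs))
print(round(p_hug, 6))  # 0.415125 = 0.4 + 0.05*0.3 + 0.05**3
print(corpus_log_likelihood({"hug": 10, "hugs": 5}, probs))
```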

3.2 EM-Based Training and Pruning

Training proceeds via Expectation-Maximization:

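E-step. For the current vocabulary $\mathcal{V}$ and token probabilities $p(x)$, compute the expected frequency of each token $x$ across all segmentations of all corpus sentences, weighted by segmentation probability:

$$c(x) = \sum_{s=1}^{|\mathcal{D}|} \sum_{\mathbf{x} \in \mathcal{S}(X^{(s)})} P(\mathbf{x} \mid X^{(s)}) \, n(x, \mathbf{x})$$

where $n(x, \mathbf{x})$ is the count of token $x$ in segmentation $\mathbf{x}$, and $P(\mathbf{x} \mid X^{(s)}) = P(\mathbf{x}) / \sum_{\mathbf{x}' \in \mathcal{S}(X^{(s)})} P(\mathbf{x}')$ is the posterior probability of that segmentation.

M-step. Update token probabilities:

$$p(x) \leftarrow \frac{c(x)}{\sum_{x' \in \mathcal{V}} c(x')}$$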
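One EM iteration can be sketched with a forward-backward pass over each word, which yields the expected token counts without enumerating segmentations. This is plain maximum-likelihood EM under stated assumptions: the function name `em_step` is hypothetical, the corpus is a word-frequency table, the seed vocabulary is every substring of length at most 3, and every word is assumed to be segmentable (all single characters are in the vocabulary). Production trainers add refinements that are not shown here.

```python
import math

def em_step(word_counts, probs):
    """One EM iteration. Returns (new_probs, log_likelihood under input probs)."""
    expected = {token: 0.0 for token in probs}
    log_likelihood = 0.0
    for word, count in word_counts.items():
        n = len(word)
        # Forward pass: alpha[i] sums P over all segmentations of word[:i].
        alpha = [1.0] + [0.0] * n
        for end in range(1, n + 1):
            for start in range(end):
                alpha[end] += alpha[start] * probs.get(word[start:end], 0.0)
        # Backward pass: beta[i] sums P over all segmentations of word[i:].
        beta = [0.0] * n + [1.0]
        for start in range(n - 1, -1, -1):
            for end in range(start + 1, n + 1):
                beta[start] += probs.get(word[start:end], 0.0) * beta[end]
        log_likelihood += count * math.log(alpha[n])
        # E-step: posterior probability that a token covers word[start:end].
        for start in range(n):
            for end in range(start + 1, n + 1):
                piece = word[start:end]
                if piece in probs:
                    posterior = alpha[start] * probs[piece] * beta[end] / alpha[n]
                    expected[piece] += count * posterior
    # M-step: normalize expected counts into probabilities.
    total = sum(expected.values())
    return {t: c / total for t, c in expected.items()}, log_likelihood

toy_counts = {"hug": 10, "hugs": 5, "bug": 8, "bugs": 3, "mug": 2}
# Seed vocabulary: every substring of length <= 3, initialized uniformly.
seed = {w[i:j] for w in toy_counts
        for i in range(len(w))
        for j in range(i + 1, min(i + 3, len(w)) + 1)}
probs = {token: 1.0 / len(seed) for token in seed}
history = []
for _ in range(5):
    probs, ll = em_step(toy_counts, probs)
    history.append(ll)
print([round(ll, 2) for ll in history])  # non-decreasing across iterations
```

The log-likelihood recorded at each iteration should never decrease, which is a convenient sanity check when experimenting with this kind of sketch.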

After convergence, vocabulary pruning is performed. For each token $x$, compute the reduction in corpus log-likelihood if $x$ were removed:

$$\text{loss}(x) = \mathcal{L}(\mathcal{V}) - \mathcal{L}(\mathcal{V} \setminus \{x\})$$

Tokens with the smallest $\text{loss}(x)$, those whose removal least damages the model's ability to explain the corpus, are pruned. This cycle repeats until the target vocabulary size is reached.
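The pruning criterion can be illustrated by recomputing the marginal log-likelihood with one token deleted. The sketch below makes several simplifying assumptions that should be read as such: the token probabilities are hypothetical rather than EM output, the remaining probabilities are not renormalized after deletion, and single characters are never considered for removal so that every word stays segmentable. Exact recomputation per token is also far more expensive than the approximations a practical trainer would use.

```python
import math

def log_likelihood(word_counts, probs):
    """Corpus log-likelihood, marginalized over all segmentations of each word."""
    total = 0.0
    for word, count in word_counts.items():
        n = len(word)
        alpha = [1.0] + [0.0] * n  # alpha[i]: mass of segmentations of word[:i]
        for end in range(1, n + 1):
            for start in range(end):
                alpha[end] += alpha[start] * probs.get(word[start:end], 0.0)
        total += count * math.log(alpha[n])
    return total

def removal_loss(token, word_counts, probs):
    """Drop in log-likelihood if `token` is deleted (no renormalization)."""
    reduced = {t: p for t, p in probs.items() if t != token}
    return log_likelihood(word_counts, probs) - log_likelihood(word_counts, reduced)

toy_counts = {"hug": 10, "hugs": 5, "bug": 8, "bugs": 3, "mug": 2}
# Hypothetical probabilities (they sum to 1), standing in for EM output.
probs = {
    "h": 0.05, "u": 0.05, "g": 0.05, "b": 0.05, "m": 0.05, "s": 0.10,
    "ug": 0.25, "hug": 0.20, "bug": 0.10, "mu": 0.03, "gs": 0.03, "bu": 0.04,
}
# Only multi-character tokens are pruning candidates.
candidates = [t for t in probs if len(t) > 1]
losses = {t: removal_loss(t, toy_counts, probs) for t in candidates}
ranked = sorted(candidates, key=losses.get)
for token in ranked:
    print(f"{token:>4}  loss = {losses[token]:.3f}")
```

Under these assumed probabilities, mu, gs, and bu come out with the smallest losses, so they would be the first tokens pruned, while ug, bug, and hug are much more costly to remove.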

Key properties:

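- Globally optimized. Vocabulary selection is driven by a well-defined corpus likelihood objective rather than by local pair counts, although EM and greedy pruning only guarantee a local optimum of that objective.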

- Top-down construction. The vocabulary shrinks from a large candidate set.

- Probabilistic segmentation. Multiple segmentations are possible at inference; the model selects the highest-probability one (or samples from the distribution for regularization).
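Selecting the highest-probability segmentation is a standard dynamic program. The following sketch shows one way to write it; the function name `viterbi_segment` and the token probabilities are hypothetical, and the quadratic scan over all substrings is kept for clarity rather than speed.

```python
import math

def viterbi_segment(text, log_probs):
    """Return (tokens, log_prob) of the most probable segmentation of text."""
    n = len(text)
    best = [-math.inf] * (n + 1)  # best[i]: top log-probability for text[:i]
    back = [0] * (n + 1)          # back[i]: start index of the last token
    best[0] = 0.0
    for end in range(1, n + 1):
        for start in range(end):
            piece = text[start:end]
            if piece in log_probs:
                score = best[start] + log_probs[piece]
                if score > best[end]:
                    best[end], back[end] = score, start
    if best[n] == -math.inf:
        raise ValueError("text cannot be segmented with this vocabulary")
    # Follow back-pointers from the end of the string to recover the tokens.
    tokens, end = [], n
    while end > 0:
        tokens.append(text[back[end]:end])
        end = back[end]
    return tokens[::-1], best[n]

# Hypothetical token probabilities (they sum to 1), for illustration only.
probs = {"h": 0.05, "u": 0.05, "g": 0.05, "s": 0.15, "ug": 0.3, "hug": 0.4}
log_probs = {t: math.log(p) for t, p in probs.items()}
tokens, score = viterbi_segment("hugs", log_probs)
print(tokens)            # ['hug', 's']
print(math.exp(score))   # probability 0.4 * 0.15, up to float rounding
```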

3.3 Worked Example

Using the same toy corpus, suppose the seed vocabulary includes all characters and substrings up to length 3. After EM, tokens like mu, gs, and bu have low loss impact — removing them barely hurts corpus likelihood because the corpus can still be segmented well via the remaining tokens (m + ug, ug + s, b + ug). These are pruned first.

Contrast this with BPE: the Unigram model might retain both hug and ug simultaneously if both contribute to corpus likelihood, whereas BPE would only create them through separate merge steps. The Unigram model can also retain s as a standalone suffix token because the likelihood computation recognizes its broad utility — a form of implicit morphological awareness that BPE's frequency-only heuristic cannot replicate.

4. Hands-On Comparison

4.1 Segmentation of Morphologically Rich Words

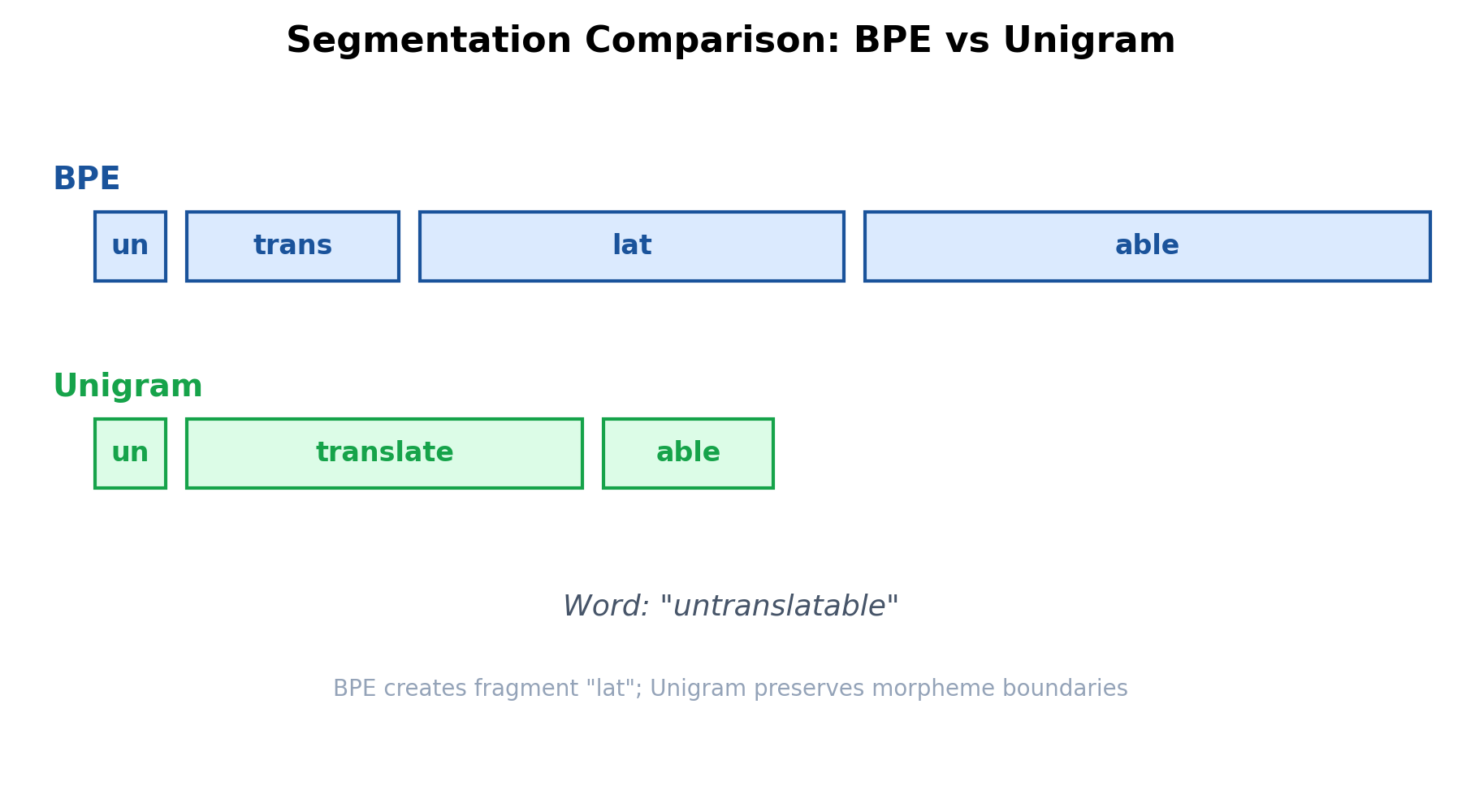

Consider the word *"untranslatable"* under both models (assuming vocabularies of comparable size trained on English text).

BPE produces ["un", "trans", "lat", "able"]. The fragment lat is linguistically orphaned: it is not a morpheme. BPE produced it because the stem translat (shared by translate, translation, and translatable) was not frequent enough to be merged into a single unit, and the merge sequence happened to build trans and lat separately without ever joining them.

Unigram produces ["un", "translat", "able"]. The likelihood objective favors retaining the stem translat as a unit (high log-probability given its corpus frequency), and the segmentation respects morpheme boundaries more cleanly.

4.2 Rare Word Handling

For a word completely absent from training, such as *"blicket"* (a nonce word from cognitive science):

BPE applies merges in priority order, yielding something like ["bl", "ick", "et"]. The segmentation is deterministic and may not correspond to any phonological or morphological structure.

Unigram finds the Viterbi-optimal path through the token lattice built over the word's characters. Critically, it can also run in *sampling mode*, where segmentations are drawn proportionally to their probability. This stochastic segmentation acts as a data augmentation technique (Kudo, 2018), improving robustness to rare and unseen words during training.

4.3 Sequence Length and Information Density

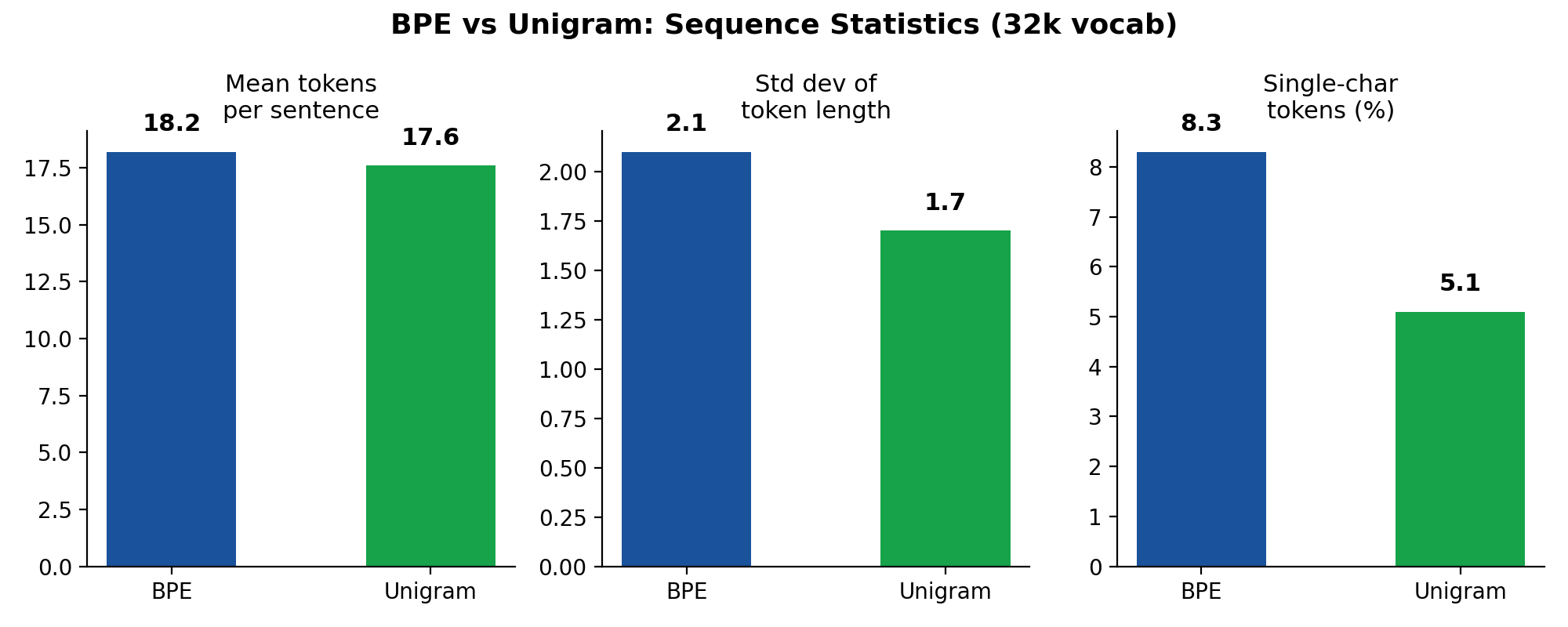

BPE's greedy merging tends to produce slightly shorter sequences for frequent patterns but can fragment rare words aggressively. Unigram, by optimizing global likelihood, distributes token lengths more evenly. The lower variance in token length under Unigram suggests more uniform information density per token — each position in the sequence carries a more consistent amount of information, which is a desirable property for Transformer attention patterns.

5. Effects on Model Behavior

5.1 Embedding Quality

Subword segmentation directly determines the token vocabulary over which embeddings are learned. When BPE fragments a rare word like *"untranslatable"* into ["un", "trans", "lat", "able"], the embedding for lat must be learned from contexts where it appears as a fragment of many unrelated words (latitude, latte, lateral). The resulting embedding is diffuse and contributes noise to the composed representation.

Unigram's segmentation ["un", "translat", "able"] places the burden on tokens that are individually meaningful. The embedding for the stem translat is learned from coherent contexts (translate, translation, translator), producing a sharper, more informative representation.

5.2 Morphological Generalization

Bostrom and Durrett (2020) report that Unigram segmentations align more closely with morphological boundaries than BPE segmentations, and that language models pretrained with Unigram tokenization match or outperform otherwise identical BPE-trained counterparts on downstream fine-tuning tasks in English and Japanese. On morphological analysis benchmarks for agglutinative languages (Turkish, Finnish), Unigram-based models can outperform BPE-based models by 2–5 F1 points.

5.3 Tokenization Stability

BPE training is deterministic but greedy and order-dependent, so small changes in the training corpus can flip the ranking of near-tied pairs and cascade into different merge orders, producing substantially different vocabularies. Unigram's likelihood-based pruning is more robust: token retention depends on the token's contribution to a global objective, not on a fragile ordering of local frequency counts.

5.4 The Subword Regularization Effect

A unique advantage of Unigram is subword regularization: during training, instead of always using the Viterbi-best segmentation, one can sample segmentations from the probability distribution $P(\mathbf{x} \mid X)$. For a given word, the model sees multiple segmentations across different training epochs:

- Epoch 1: ["un", "translat", "able"]

- Epoch 2: ["un", "trans", "lat", "able"]

- Epoch 3: ["untranslat", "able"]

This acts as a form of regularization that makes the model robust to segmentation noise and improves generalization, particularly in low-resource settings. BPE has no native equivalent — BPE-Dropout (Provilkov et al., 2020) was introduced specifically to approximate this effect.
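A toy version of segmentation sampling is sketched below. It enumerates every segmentation of a short word and draws one in proportion to its probability. The enumeration is exponential in word length, so this is only suitable for illustration; practical implementations sample from the lattice or from an n-best list instead. The function names and token probabilities are hypothetical.

```python
import random

def all_segmentations(text, probs):
    """Yield (tokens, probability) for every segmentation of text."""
    if not text:
        yield [], 1.0
        return
    for end in range(1, len(text) + 1):
        piece = text[:end]
        if piece in probs:
            for rest, p_rest in all_segmentations(text[end:], probs):
                yield [piece] + rest, probs[piece] * p_rest

def sample_segmentation(text, probs, rng):
    """Draw one segmentation with probability proportional to P(x)."""
    candidates = list(all_segmentations(text, probs))
    tokens = [toks for toks, _ in candidates]
    weights = [p for _, p in candidates]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical token probabilities (they sum to 1), for illustration only.
probs = {"h": 0.05, "u": 0.05, "g": 0.05, "s": 0.15, "ug": 0.3, "hug": 0.4}
rng = random.Random(0)  # fixed seed so runs are repeatable
candidates = list(all_segmentations("hugs", probs))
samples = [sample_segmentation("hugs", probs, rng) for _ in range(5)]
for tokens in samples:
    print(tokens)
```

With these probabilities, ["hug", "s"] dominates the distribution, so most draws return it, with ["h", "ug", "s"] appearing occasionally. Kudo (2018) additionally smooths the distribution with a temperature-like hyperparameter, which is not shown here.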

6. Practical Guidance

Choose BPE when:

- Reproducibility and simplicity are paramount. BPE is deterministic, easy to implement, and well-supported.

- The target language is morphologically simple (e.g., English, Mandarin).

- Vocabulary size is large (≥ 50k). With enough merges, BPE's greedy approach converges toward reasonable segmentations.

Choose Unigram when:

- The target language is morphologically rich (Turkish, Finnish, Korean, Japanese).

- Training data is limited. Subword regularization provides a significant boost in low-resource regimes.

- Downstream tasks are sensitive to token composition quality (e.g., morphological tagging, NER on entities with productive morphology).

In either case:

- Vocabulary size matters more than algorithm choice for many downstream metrics. A well-tuned BPE vocabulary at 32k tokens often outperforms a poorly tuned Unigram vocabulary at 16k.

- Consider the interaction with the model architecture. Transformers with relative position encodings are less sensitive to tokenization-induced sequence length variation than those with absolute encodings.

- Profile segmentation quality on a held-out set before committing. Metrics like average token length, fraction of single-character tokens, and alignment with known morpheme boundaries are cheap to compute and highly informative.
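The profiling metrics in the last bullet can be computed with very little code. The sketch below is one possible formulation, not a standard tool: the function names are hypothetical, morpheme alignment is measured as boundary precision and recall against a gold annotation, and the gold boundaries for *"unhappiness"* (un | happi | ness) are an assumed annotation used only for the example.

```python
def boundaries(tokens):
    """Character offsets at which one token ends and the next begins."""
    offsets, position = set(), 0
    for token in tokens[:-1]:
        position += len(token)
        offsets.add(position)
    return offsets

def segmentation_profile(segmentations, gold_boundaries):
    """Cheap quality metrics for {word: tokens} against {word: gold offsets}."""
    tokens = [t for seg in segmentations.values() for t in seg]
    hits = predicted_total = gold_total = 0
    for word, seg in segmentations.items():
        predicted, gold = boundaries(seg), gold_boundaries[word]
        hits += len(predicted & gold)
        predicted_total += len(predicted)
        gold_total += len(gold)
    return {
        "avg_token_length": sum(len(t) for t in tokens) / len(tokens),
        "single_char_fraction": sum(len(t) == 1 for t in tokens) / len(tokens),
        "boundary_precision": hits / predicted_total if predicted_total else 0.0,
        "boundary_recall": hits / gold_total if gold_total else 0.0,
    }

# Assumed gold annotation: un | happi | ness -> boundaries after 2 and 7 chars.
gold = {"unhappiness": {2, 7}}
coarse = segmentation_profile({"unhappiness": ["un", "happiness"]}, gold)
fine = segmentation_profile({"unhappiness": ["un", "hap", "pi", "ness"]}, gold)
print(coarse)
print(fine)
```

In this example the coarse segmentation has perfect boundary precision but misses one gold boundary, while the finer one recovers both gold boundaries at the cost of an extra, non-morphemic split. Looking at precision and recall together avoids rewarding a tokenizer for simply splitting everywhere.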

7. Conclusion

Tokenization is not preprocessing — it is the first layer of the model. The choice between BPE and Unigram imposes different inductive biases on how text is decomposed into learnable units, and these biases propagate silently through the entire model pipeline: from embedding quality, to attention pattern formation, to generalization on rare and morphologically complex words.

BPE offers simplicity and determinism at the cost of linguistically arbitrary segmentation boundaries driven by local frequency statistics. Unigram offers a principled, globally-optimized vocabulary with natural support for segmentation ambiguity, at the cost of greater algorithmic complexity. Neither is universally superior, but treating them as interchangeable is an error.

The worked examples in this post illustrate that these differences are not merely theoretical — they manifest in concrete, observable token sequences that the model must learn from. Engineers and researchers building NLP systems would benefit from treating tokenization as a first-class design decision, subject to the same rigor applied to architecture selection and hyperparameter tuning.

References

- Sennrich, R., Haddow, B., & Birch, A. (2016). Neural Machine Translation of Rare Words with Subword Units. *Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (ACL)*, 1715–1725.

- Kudo, T. (2018). Subword Regularization: Improving Neural Network Translation Models with Multiple Subword Candidates. *Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (ACL)*, 66–75.

- Kudo, T., & Richardson, J. (2018). SentencePiece: A Simple and Language Independent Subword Tokenizer and Detokenizer for Neural Text Processing. *Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing (EMNLP): System Demonstrations*, 66–71.

- Bostrom, K., & Durrett, G. (2020). Byte Pair Encoding is Suboptimal for Language Model Pretraining. *Findings of the Association for Computational Linguistics: EMNLP 2020*, 4617–4624.

- Provilkov, I., Emelianenko, D., & Voita, E. (2020). BPE-Dropout: Simple and Effective Subword Regularization. *Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics (ACL)*, 1882–1892.

- Gage, P. (1994). A New Algorithm for Data Compression. *The C Users Journal*, 12(2), 23–38.

- Rust, P., Pfeiffer, J., Vulić, I., Ruder, S., & Gurevych, I. (2021). How Good is Your Tokenizer? On the Monolingual Performance of Multilingual Language Models. *Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics (ACL)*, 3118–3135.