Abstract

Chain-of-thought (CoT) prompting has emerged as a technique that improves the reasoning capabilities of large language models on complex tasks. This post provides a formal analysis of why CoT works from computational and information-theoretic perspectives. We formalize the distinction between direct prediction and chain-of-thought prediction , where represent intermediate reasoning tokens. We present the expressiveness argument showing how CoT effectively increases the serial computation depth available to transformer architectures, derive the role of autoregressive generation in enabling intermediate variable storage, and analyze the conditions under which CoT succeeds or fails. Empirical evidence from arithmetic, symbolic reasoning, and mathematical word problem benchmarks supports the theoretical framework. The analysis suggests that CoT's effectiveness stems from its function as external working memory that circumvents architectural limitations on serial computation depth.

The Inference Problem

Consider a language model tasked with answering a question. Given input , the model must produce output . In the standard paradigm, this requires computing:

For a transformer with layers, each forward pass performs sequential computational steps. The hidden state at layer can be written as:

where represents the computation at layer (self-attention followed by a feed-forward network). The final prediction depends on , which has undergone exactly serial transformations of the input. This creates a fundamental constraint: any computation the model performs must be expressible within sequential steps. While each layer has substantial width (the hidden dimension ) allowing parallel computation, the depth is fixed at . Many reasoning tasks, however, require computations with depth exceeding . Consider multiplying two -digit numbers: the standard algorithm requires sequential operations in the worst case, or with advanced methods. For sufficiently large , this exceeds what a fixed-depth transformer can compute in a single forward pass. The fundamental question becomes: how can a fixed-depth architecture solve problems requiring arbitrary computational depth? Chain-of-thought provides one answer.

Direct Prediction vs. Chain-of-Thought

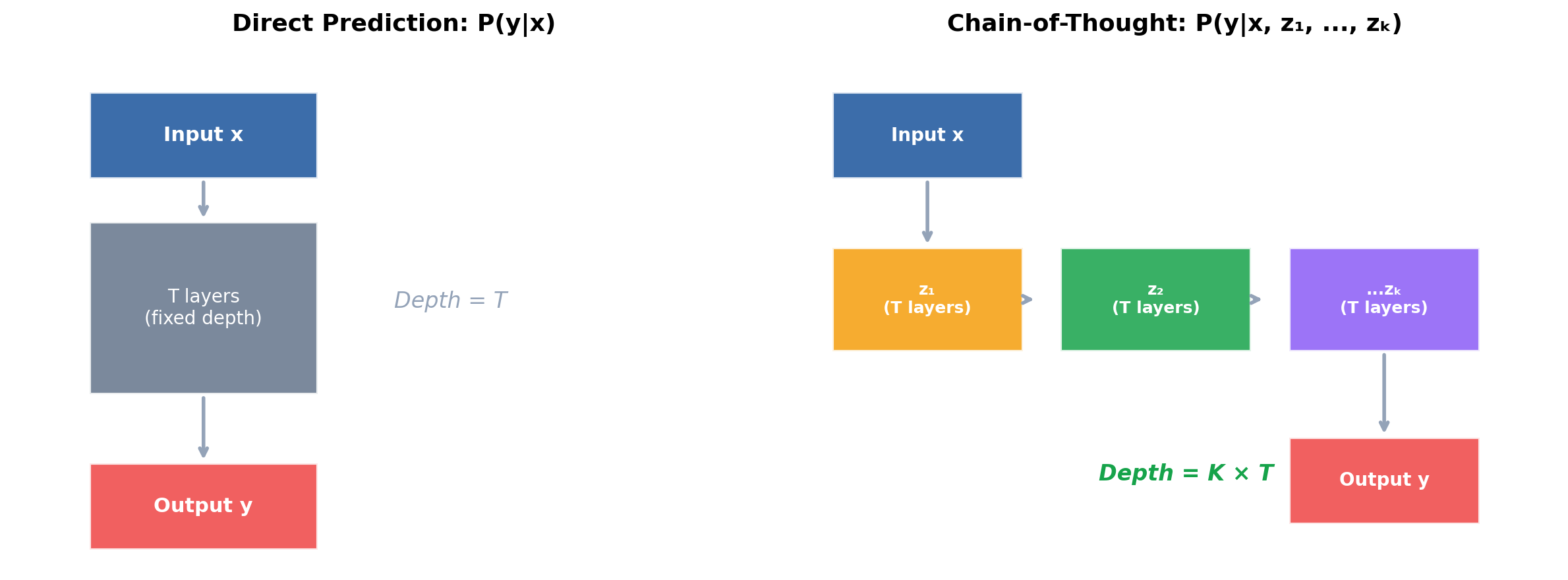

Direct Prediction

In direct prediction, the model maps input directly to output:

Each token of the output is generated conditioned on the input and previously generated output tokens. The key observation is that generating each involves exactly one forward pass through the -layer network. For problems where the answer length is short (e.g., a single number), the model has minimal opportunity to perform iterative computation. The entire reasoning must occur within layers operating in parallel across positions.

Chain-of-Thought Prediction

Chain-of-thought introduces intermediate reasoning tokens before the final answer:

In practice, we don't marginalize over all possible chains—we sample or greedily decode a single chain. The factorization becomes:

Each intermediate token is generated with a full forward pass, and subsequent tokens can attend to it. This means the computation of now has access to the results of forward passes rather than just one.

The Conditioning Effect

A subtle but important point: the chain-of-thought tokens don't just provide more computation—they also condition the final prediction. Consider the conditional probability:

If the reasoning tokens contain the correct intermediate results, the conditional distribution becomes sharply peaked around the correct answer. The model no longer needs to perform complex reasoning—it merely needs to read off the conclusion from its scratchpad. This is analogous to the difference between:

- Solving from scratch

- Computing given that these are the partial products

The second task is computationally simpler, conditioning on intermediate work.

Worked Example: Arithmetic

Consider computing . Direct prediction requires the model to output "1081" after a single forward pass (per token) that can only attend to "23 × 47". Chain-of-thought might produce:

- : "First, 23 × 7 = 161"

- : "Then, 23 × 40 = 920"

- : "Finally, 161 + 920 = 1081"

- : "1081"

The probability decomposes as:

Each factor involves a forward pass where the model can attend to all preceding reasoning steps. The intermediate results (161, 920) are explicitly stored in the context and accessible for subsequent computation.

A Second Example: Multi-Step Word Problems

Consider: "Alice has 3 apples. She buys 5 more, then gives half to Bob. How many does Alice have?" Direct prediction: The model must compute in a single forward pass. Chain-of-thought:

- : "Alice starts with 3 apples"

- : "After buying 5 more: 3 + 5 = 8 apples"

- : "After giving half to Bob: 8 / 2 = 4 apples"

- : "4"

The decomposition isolates each arithmetic operation to a separate forward pass. When generating , the model can attend to "8 apples" from , reducing the problem to a single division.

The Expressiveness Argument

Computational Complexity of Transformers

A transformer with layers, hidden dimension , and context length can be viewed as a computational model. Following the analysis of Merrill and Sabharwal (2023), a single forward pass computes a function in the complexity class —constant-depth threshold circuits with polynomial size. More precisely, for a fixed architecture, the transformer computes a function where the circuit depth is and width is . The class is powerful—it includes addition, multiplication of fixed-precision numbers, and sorting—but it does not include all polynomial-time computations. Crucially, does not contain problems requiring a super-constant number of sequential steps that cannot be parallelized. This includes:

- Evaluating Boolean formulas of unbounded depth

- Computing iterated functions

- Simulating arbitrary circuits with depth

Depth vs. Width Tradeoffs

Circuit complexity theory establishes fundamental tradeoffs between depth and width. A circuit with depth and polynomial width can simulate computations requiring sequential steps. However, increasing width alone cannot always compensate for insufficient depth. The intuition is that depth represents sequential computation time, while width represents parallel resources. Some computations are inherently sequential—they require results from step before step can proceed. For example, iterated composition of functions—computing with applications—requires depth regardless of width, assuming each application of requires at least one computational step. No amount of parallelism can accelerate strictly sequential dependencies.

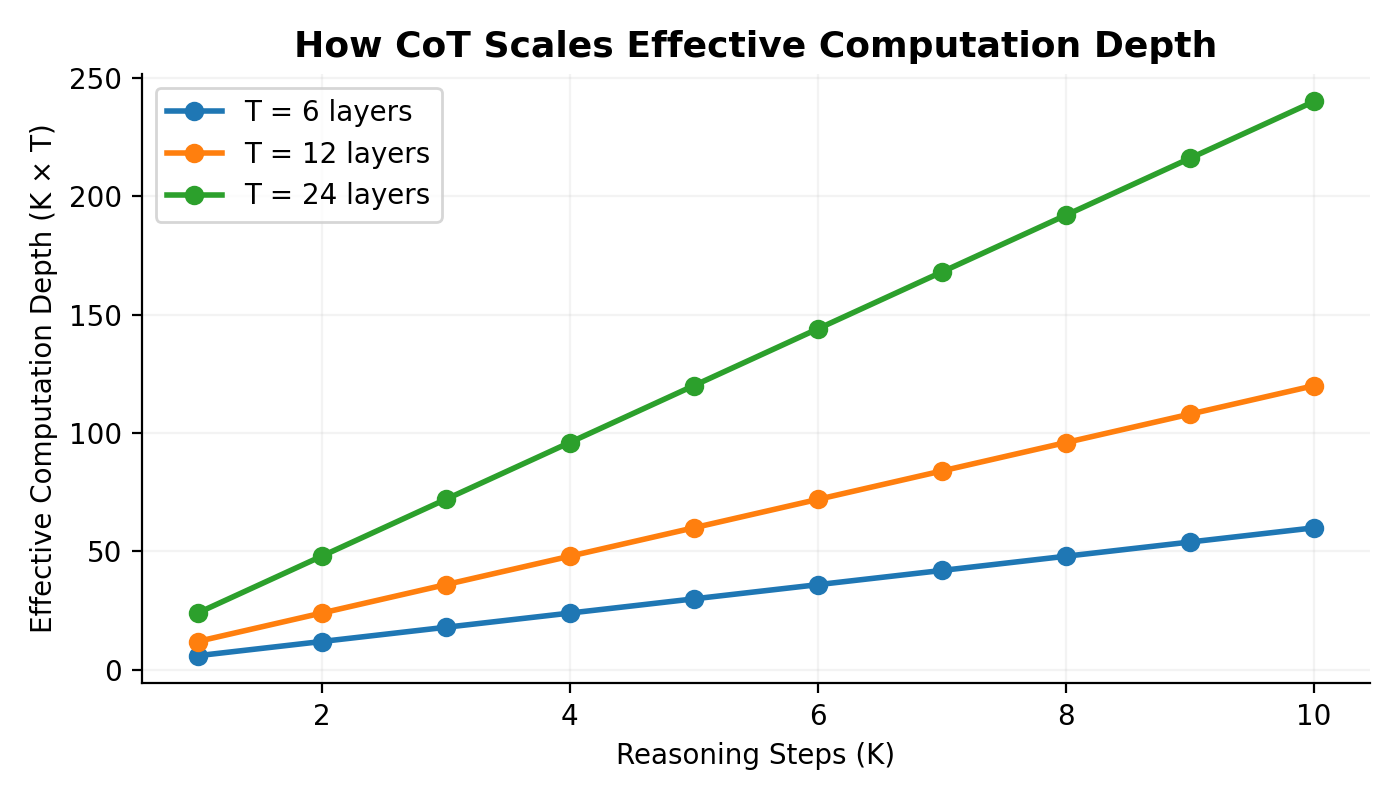

CoT as Depth Extension

Here's the key insight: autoregressive generation with intermediate tokens effectively transforms a depth- computation into a depth- computation. Let denote the function computed by a -layer transformer in one forward pass. When generating tokens autoregressively, the model computes:

The final output is a function of sequential applications of , where each application can use the results of all previous applications. This yields effective computational depth of . Theorem (informal): A transformer with layers generating tokens autoregressively can simulate any computation requiring sequential steps, given sufficient hidden dimension and appropriate training. This explains why CoT helps with tasks requiring deep sequential reasoning: it provides the necessary computational depth that a single forward pass cannot.

Formal Statement

Let denote the class of functions computable by a -layer autoregressive transformer generating tokens. We have:

In fact, with unbounded is Turing complete—it can simulate any computation given enough generation steps. This follows from the ability to simulate a Turing machine's tape using the context window and performing one computational step per token generated. Feng et al. (2023) formalize this precisely: for problems solvable by a Turing machine in steps with tape size , a transformer with CoT can solve them using reasoning tokens and context length .

The Role of Attention in Depth Extension

Attention mechanisms are essential for depth extension to work. When generating token , the model attends to all of . This allows:

- Information retrieval: Earlier computation results stored in can be retrieved

- State tracking: The model can track multiple variables across generation steps

- Conditional branching: Different attention patterns can implement control flow

Without attention (e.g., in a pure recurrent model with bounded state), the model would be limited by its hidden state size. The context window essentially provides unbounded external memory accessible via attention.

Information-Theoretic View

Effective Compute and Serial Bottlenecks

From an information-theoretic perspective, consider the mutual information between input and output. For a direct prediction:

where represents the information-processing capacity of a single forward pass—bounded by the depth-width product of the network. For chain-of-thought:

The intermediate tokens can carry information that exceeds the capacity of a single forward pass, effectively increasing the channel capacity between input and output.

Data Processing Inequality and Its Circumvention

The data processing inequality states that for a Markov chain :

Processing cannot create information. So how does CoT help? The key is that CoT doesn't violate the data processing inequality—it changes the computational structure. Without CoT, information flows as , where is the final hidden state. With CoT, information flows as:

Each has access to all previous , creating a richer information pathway. The bottleneck is no longer a single forward pass but the accumulated computation across passes.

The Scratchpad Hypothesis

Consider the human analogy: when solving a complex problem, we write intermediate results on paper rather than holding everything in working memory. The written notes serve as external storage that offloads cognitive burden. For transformers, the context window functions analogously. Each generated token becomes part of the model's "working memory" accessible via attention in subsequent forward passes. The chain-of-thought tokens form an external scratchpad. Formally, let denote the model's internal state capacity (bounded by hidden dimension ) and denote the scratchpad size (number of reasoning tokens times vocabulary embedding dimension). The effective memory available for computation is:

For tasks requiring intermediate storage exceeding , the scratchpad is necessary for correct computation. Consider a task requiring tracking of variables, each with bits of precision. The total state requirement is bits. If the model's hidden state can only reliably encode bits, direct computation fails. But with a scratchpad, each variable can be explicitly written to context, enabling arbitrarily large .

Hidden State Propagation

When generating token , the model has access to all previous tokens through attention. This means information computed during the generation of can influence in two ways:

- Explicit: The token itself is attended to

- Implicit: The hidden states at each layer can propagate information through attention patterns

The explicit pathway is more robust—the token is discretized and stored in a fixed vocabulary representation. The implicit pathway through attention provides continuous information flow but is more difficult to control and interpret. This dual pathway explains why well-structured chain-of-thought (with clearly stated intermediate results) outperforms vague or disorganized reasoning: explicit storage is more reliable and less prone to degradation across many steps.

When CoT Fails

Chain-of-thought is not universally beneficial. Understanding failure modes clarifies the mechanism.

Pure Recall Tasks

For factual recall—"What is the capital of France?"—CoT provides no benefit and may harm performance. The answer exists in model parameters and requires no sequential computation. Mathematically, if where is directly stored in model weights and computable in one forward pass, then:

The chain-of-thought introduces additional points of failure (incorrect reasoning tokens) without providing computational benefit.

Highly Parallel Problems

Tasks with inherently parallel structure don't benefit from serial decomposition. Consider checking whether each element of a list satisfies some property independently. This requires depth with width—exactly what a transformer provides in one pass. CoT would serialize inherently parallel checks:

- Check element 1: satisfies

- Check element 2: satisfies

- ...

This increases computation time without improving accuracy, since the parallel checks don't benefit from intermediate results. The key diagnostic: if a problem decomposes into independent subproblems with no information flow between them, CoT adds latency without benefit.

Insufficient Model Capability

CoT requires the model to:

- Identify the appropriate reasoning steps

- Execute each step correctly

- Integrate results across steps

If the model cannot perform (1)—decomposing the problem—no amount of computation helps. This explains why CoT shows larger benefits for larger models: they have better problem decomposition abilities. Formally, let denote the "reasoning plan" for input . The model must learn . If this distribution is poorly learned (small models, out-of-distribution problems), CoT fails at the planning stage before computation even begins.

Reasoning Token Errors Propagate

A critical failure mode: errors in intermediate tokens propagate to the final answer. If is incorrect:

For chains of length where each step has accuracy , the overall accuracy degrades as approximately in the worst case (when errors are independent and fatal). This explains why self-consistency (sampling multiple chains and voting) helps: it provides robustness against intermediate errors by effectively ensembling over reasoning paths.

Length Generalization Failures

CoT can fail when problems require longer chains than seen during training. The model may have learned to reason for steps but fails at steps. This is a form of distributional shift in the "reasoning length" dimension.

Empirical Evidence

Arithmetic and Symbolic Reasoning

Nye et al. (2021) demonstrated that "scratchpad" prompting dramatically improves arithmetic performance. On 8-digit addition:

- Direct: ~0% accuracy

- With scratchpad: >95% accuracy

The scratchpad allows the model to perform carry operations sequentially, storing intermediate sums in the context. Without the scratchpad, the model must somehow compute all carries in parallel within a single forward pass—a task exceeding its architectural capacity. Similar patterns emerge in symbolic reasoning tasks:

- Parity of a bit string: CoT allows iterative XOR

- Last letter concatenation: CoT enables sequential character extraction

- Coin flip tracking: CoT maintains explicit state

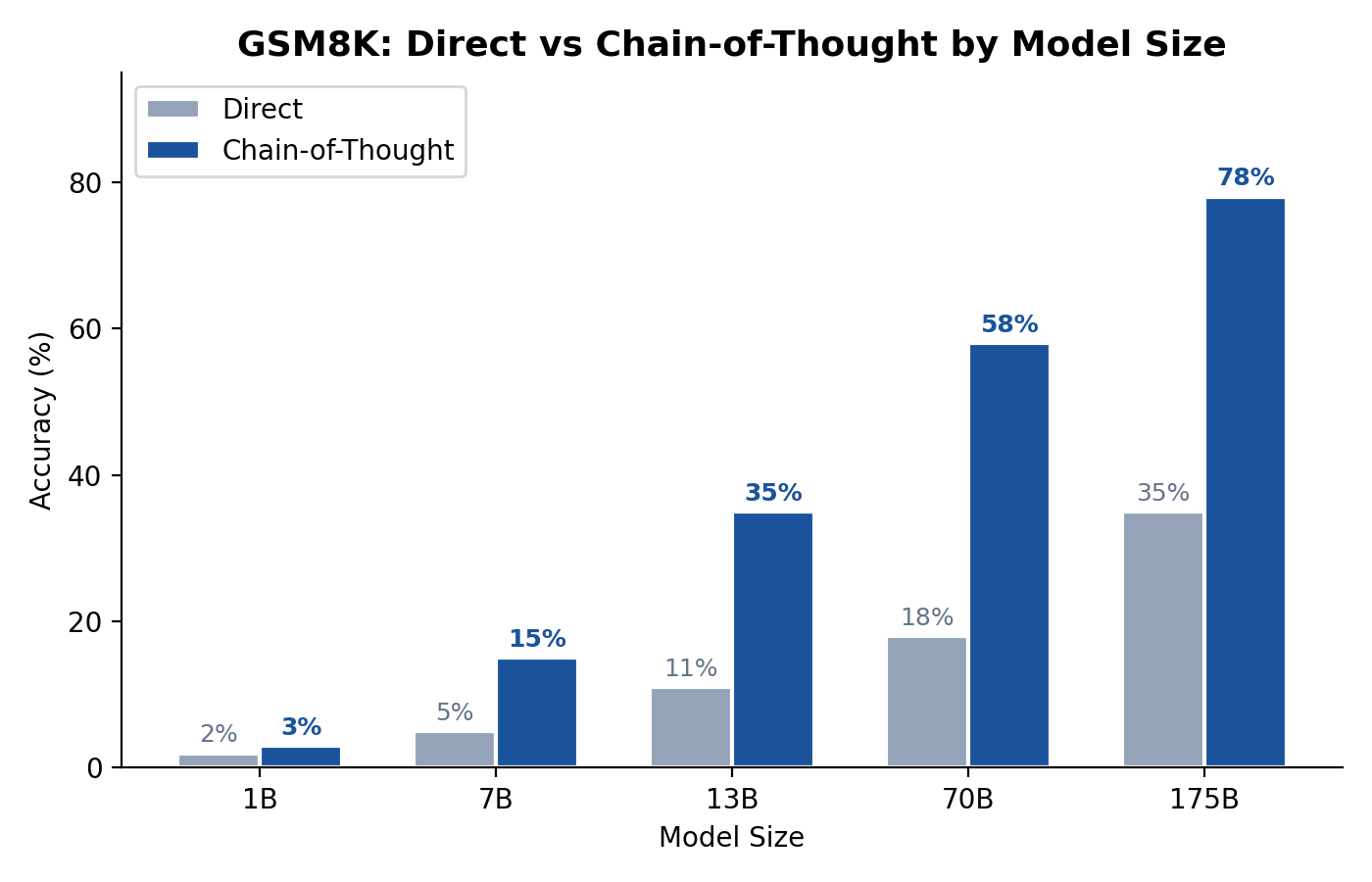

GSM8K

The GSM8K benchmark (Cobbe et al., 2021) contains grade-school math word problems requiring multi-step reasoning. Results show clear CoT benefits:

| Method | Accuracy |

|---|---|

| Direct answer (175B) | 18% |

| Chain-of-thought (175B) | 57% |

| CoT + self-consistency | 74% |

| CoT + verifier (trained) | 78% |

The problems require multi-step arithmetic wrapped in natural language—exactly the setting where CoT provides computational depth benefits. The jump from 18% to 57% cannot be explained by prompt engineering alone; it reflects a fundamental computational capability gained through intermediate generation.

BIG-Bench Hard

Suzgun et al. (2022) evaluated CoT on challenging BIG-Bench tasks:

- Average improvement with CoT: +13.5 percentage points

- Largest gains on: multi-step arithmetic, logical deduction, tracking shuffled objects

- Smallest gains on: sarcasm detection, movie recommendations (no sequential computation needed)

The pattern matches theoretical predictions: CoT helps tasks requiring serial computation depth. The correlation between "number of reasoning steps required" and "CoT improvement" is strong across the benchmark.

Scaling Analysis

Wei et al. (2022) showed that CoT benefits emerge with scale:

- Models <10B parameters: minimal CoT benefit

- Models 10-100B parameters: moderate CoT benefit

- Models >100B parameters: substantial CoT benefit

This supports the interpretation that CoT requires baseline capabilities in problem decomposition that emerge at scale. Small models cannot identify the correct reasoning steps; thus, additional computation from CoT cannot help. The emergence pattern suggests a capability threshold: below some model size, CoT is useless; above it, CoT provides increasing returns.

Ablation Studies

Several ablation studies isolate the mechanisms: Token content matters: Replacing reasoning tokens with "...." (same length, no content) eliminates benefits. This confirms that explicit information storage, not just additional forward passes, drives improvement. Structure matters: Unstructured reasoning ("hmm, let me think...") underperforms structured step-by-step decomposition. This supports the explicit storage hypothesis. Relevance matters: Irrelevant reasoning tokens can hurt performance, consistent with the error propagation analysis.

Practical Implications

When to Use CoT

Based on the analysis, CoT is most beneficial when:

- The task requires multi-step reasoning with dependencies between steps

- Intermediate results need to be stored and referenced

- The model has sufficient capability to decompose the problem

- The reasoning chain is relatively short (limiting error propagation)

- The task is not purely parallel or purely recall-based

Prompt Engineering

Effective CoT prompts should:

- Explicitly state intermediate results (leverage the explicit storage pathway)

- Use consistent notation for values being tracked

- Decompose into steps that are individually tractable for the model

- Demonstrate the target reasoning structure via few-shot examples

The format of reasoning matters. "Let x = 5. Then x + 3 = 8." is more reliable than "thinking about numbers... probably 8."

Architectural Implications

The analysis suggests architectural research directions:

- Adaptive computation: Allow models to allocate more compute to harder problems dynamically

- External memory: Provide explicit scratchpad mechanisms beyond context windows

- Error correction: Build in mechanisms to detect and recover from reasoning errors (verification heads, backtracking)

- Latent reasoning: Enable "thinking" in continuous space rather than discrete tokens to reduce error propagation

Computational Cost

CoT increases inference cost linearly with chain length. For a chain of tokens, cost scales as where is context length and is model depth. This is acceptable when improved accuracy justifies additional compute, but not for latency-sensitive applications requiring simple computations. A practical heuristic: estimate the "reasoning depth" required and use CoT only when it exceeds the model's layer count divided by some constant factor.

Conclusion

Chain-of-thought prompting improves model inference by addressing a fundamental architectural limitation: fixed computational depth in transformer forward passes. By generating intermediate reasoning tokens, CoT effectively extends the serial computation available from layers to effective steps, where is the number of reasoning tokens. The mechanism operates through two pathways: explicit storage of intermediate results in generated tokens, and implicit information flow through attention patterns. The explicit pathway is more robust and benefits from well-structured reasoning chains. CoT is not universally beneficial—it fails for pure recall tasks, highly parallel problems, and when models lack decomposition capabilities. Error propagation through reasoning chains remains a practical limitation, motivating techniques like self-consistency and learned verifiers. The theoretical framework—viewing CoT as computation depth extension and external working memory—explains empirical patterns across arithmetic, symbolic reasoning, and mathematical word problem benchmarks. It also suggests that future architectures with adaptive computation or explicit memory mechanisms may reduce reliance on lengthy reasoning chains while preserving their benefits. Understanding why CoT works enables more principled application: use it for tasks requiring serial depth, structure chains for explicit intermediate storage, and consider self-consistency for robustness against reasoning errors.

References

Cobbe, K., Kosaraju, V., Bavarian, M., Chen, M., Jun, H., Kaiser, L., Plappert, M., Tworek, J., Hilton, J., Nakano, R., Hesse, C., & Schulman, J. (2021). Training verifiers to solve math word problems. *arXiv preprint arXiv:2110.14168*. Feng, G., Zhang, B., Gu, Y., Ye, H., He, D., & Wang, L. (2023). Towards revealing the mystery behind chain of thought: A theoretical perspective. *Advances in Neural Information Processing Systems*, 36. Merrill, W., & Sabharwal, A. (2023). The parallelism tradeoff: Limitations of log-precision transformers. *Transactions of the Association for Computational Linguistics*, 11, 531-545. Nye, M., Andreassen, A. J., Gur-Ari, G., Michalewski, H., Austin, J., Biber, D., Dohan, D., Lewkowycz, A., Bosma, M., Luan, D., Sutton, C., & Odena, A. (2021). Show your work: Scratchpads for intermediate computation with language models. *arXiv preprint arXiv:2112.00114*. Suzgun, M., Scales, N., Schärli, N., Gehrmann, S., Tay, Y., Chung, H. W., Chowdhery, A., Le, Q. V., Chi, E. H., Zhou, D., & Wei, J. (2022). Challenging BIG-bench tasks and whether chain-of-thought can solve them. *arXiv preprint arXiv:2210.09261*. Wang, X., Wei, J., Schuurmans, D., Le, Q., Chi, E., Narang, S., Chowdhery, A., & Zhou, D. (2023). Self-consistency improves chain of thought reasoning in language models. *International Conference on Learning Representations*. Wei, J., Wang, X., Schuurmans, D., Bosma, M., Ichter, B., Xia, F., Chi, E., Le, Q., & Zhou, D. (2022). Chain-of-thought prompting elicits reasoning in large language models. *Advances in Neural Information Processing Systems*, 35, 24824-24837.