Category: News & Briefs

What happened this week

OpenAI launched GPT-5.4 across ChatGPT, the API, and Codex, framing it as its most capable and token-efficient frontier model for professional work. The release is not just about benchmark gains: it adds practical changes for builders, including native computer use in a general-purpose model, a 1M-token context option in Codex and the API, and a new tool-search approach that reduces prompt bloat in large tool ecosystems.

At the same time, Anthropic signaled a dual track of product expansion and governance posture. On the product side, Opus 4.6 introduced “agent teams,” a 1M-token context window, and deeper productivity integration (including a PowerPoint side panel), according to TechCrunch’s reporting. On the policy side, Anthropic launched The Anthropic Institute and expanded its public policy operations, while cloud partners including Google (and, as reported, Microsoft and Amazon) publicly stated that Anthropic products remain available for non-defense use following U.S. defense restrictions.

For engineers, this is less a “who won” week and more a “stack architecture changed” week.

What GPT-5.4 changed for engineers (practical view)

1) Agent architecture got more viable out of the box

The biggest implementation-level shift is that GPT-5.4 combines three things teams often had to stitch together manually:

- strong coding performance,

- stronger tool orchestration,

- and native computer-use capability.

OpenAI explicitly positions GPT-5.4 as the first general-purpose model in its lineup with native state-of-the-art computer use, and reports improved outcomes on computer-use benchmarks like OSWorld-Verified. That matters because a lot of real enterprise automation still lives behind browser UIs and legacy desktop flows, not clean APIs.

In plain terms: if your workflow touches brittle web consoles, internal portals, or mixed app environments, GPT-5.4 appears designed for that class of work rather than only “clean API chatbot” scenarios.

2) Tool-heavy systems can get materially cheaper and cleaner

The new tool search behavior in the API is probably the most underrated launch detail.

OpenAI’s framing is straightforward: instead of injecting every tool definition into every prompt, the model can look up tool definitions when needed. Their reported result on 250 MCP Atlas tasks with 36 servers enabled: 47% lower token usage at the same accuracy.

That has direct architectural implications:

- less context pollution,

- better cache preservation,

- lower per-task prompt overhead,

- and fewer painful tradeoffs between capability and latency/cost.

If your agent stack has grown into dozens of tools and connectors, this is a concrete lever, not a vague “model feels smarter” upgrade.
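To make the difference concrete, here is a minimal sketch that compares injecting every tool definition up front with looking definitions up on demand. It is not OpenAI's implementation: the registry, the `search_tools` keyword match, and the character count used as a stand-in for tokens are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolDef:
    name: str
    description: str
    schema: str  # JSON schema text that would be pasted into the prompt


def build_registry(n_tools: int) -> list[ToolDef]:
    """Create a hypothetical catalog of n_tools connectors."""
    topics = ["billing", "calendar", "tickets", "deploy", "search"]
    return [
        ToolDef(
            name=f"{topics[i % len(topics)]}_tool_{i}",
            description=f"Performs {topics[i % len(topics)]} operation number {i}",
            schema='{"type": "object", "properties": {"id": {"type": "string"}}}',
        )
        for i in range(n_tools)
    ]


def prompt_cost(tools: list[ToolDef]) -> int:
    """Crude size proxy: characters of tool text included in the prompt."""
    return sum(len(t.name) + len(t.description) + len(t.schema) for t in tools)


def search_tools(registry: list[ToolDef], query: str, limit: int = 3) -> list[ToolDef]:
    """Return up to `limit` tools whose name or description mentions the query."""
    q = query.lower()
    hits = [t for t in registry if q in t.name.lower() or q in t.description.lower()]
    return hits[:limit]


registry = build_registry(40)
eager = prompt_cost(registry)                            # every definition, every call
lazy = prompt_cost(search_tools(registry, "billing"))    # only what the task needs
print(f"eager={eager} chars, lazy={lazy} chars, saved={1 - lazy / eager:.0%}")
```

The saving in this toy comes entirely from how many definitions are left out, so it says nothing about the 47% figure OpenAI reports. It only shows why the overhead of the eager approach grows with catalog size while the lazy approach stays roughly flat.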

3) Longer-horizon work is becoming first-class, not edge-case

OpenAI says GPT-5.4 supports up to 1M tokens of context (with specific product limits and billing caveats), and frames it as useful for planning, execution, and verification across longer task horizons.

Whether you use full 1M windows regularly is a separate question. The practical shift is that the platform direction is clearly toward fewer handoffs between retrieval, summarization, and action loops for complex workflows.

For teams building coding agents, enterprise copilots, or document-heavy automation, this reduces how often you need brittle “chunk and pray” orchestration.
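As a rough illustration of why a larger window reduces orchestration, the sketch below packs documents into as few model calls as a given window allows. The 4-characters-per-token estimate is a coarse heuristic, and the 128,000-token comparison window and reserve sizes are hypothetical values, not limits published for any model.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; a real tokenizer will differ."""
    return int(len(text) / chars_per_token) + 1


def plan_batches(docs: list[str], window_tokens: int, reserve_tokens: int) -> list[list[str]]:
    """Greedily pack docs into batches that fit the window minus a reserve.

    The reserve leaves room for instructions and the model's output.
    A single document larger than the budget still gets its own batch;
    splitting it further is left to the caller.
    """
    budget = window_tokens - reserve_tokens
    batches: list[list[str]] = []
    current: list[str] = []
    used = 0
    for doc in docs:
        need = estimate_tokens(doc)
        if current and used + need > budget:
            batches.append(current)
            current, used = [], 0
        current.append(doc)
        used += need
    if current:
        batches.append(current)
    return batches


docs = ["x" * 400_000 for _ in range(5)]  # five documents of ~100k tokens each
small = plan_batches(docs, window_tokens=128_000, reserve_tokens=8_000)
large = plan_batches(docs, window_tokens=1_000_000, reserve_tokens=50_000)
print(len(small), "calls with the small window;", len(large), "with the large one")
```

Every extra batch is a handoff where intermediate summaries can lose detail, which is the failure mode the larger window is meant to avoid.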

4) The “professional work” target is now explicit

GPT-5.4’s launch narrative emphasizes business outputs: spreadsheets, presentations, documents, coding, and web research. OpenAI also attached concrete eval claims (for example, GDPval, SWE-Bench Pro, Toolathlon, BrowseComp, and internal spreadsheet/presentation quality evaluations).

Even if you treat vendor benchmarks skeptically (you should), the product signal is clear: model competition is moving from isolated IQ-style tasks to complete deliverable workflows.

If you’re deciding where to invest engineering effort, orchestration and UX around deliverable generation now matter more than incremental prompt tweaks.

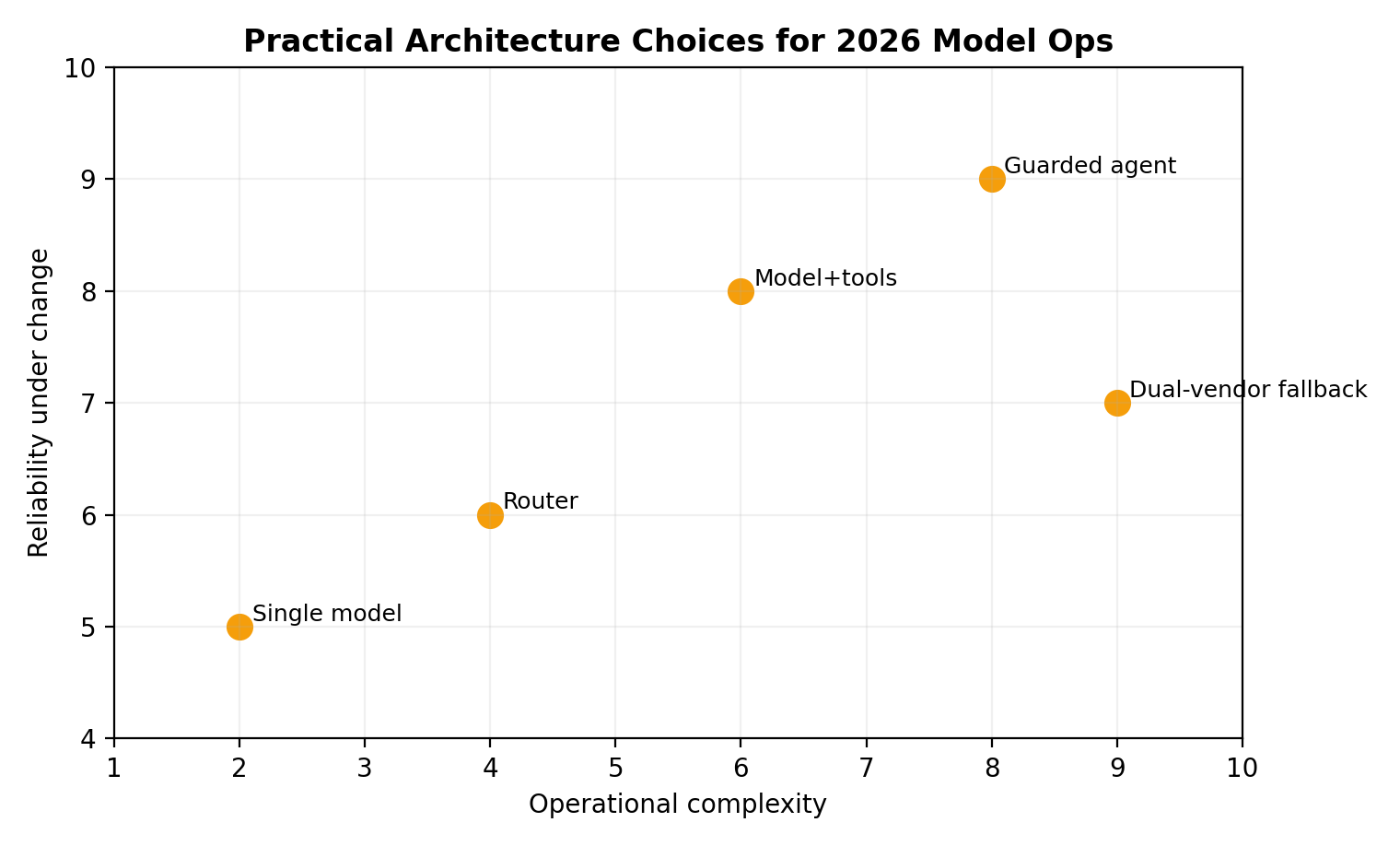

How this changes stack decisions in practice

Decision 1: Do you centralize on one “do-it-all” model tier or keep specialized routing?

GPT-5.4 is being pitched as a consolidated frontier option for reasoning + coding + tool use + computer use. That can simplify routing logic and reduce operational complexity.

But specialized routing still makes sense when:

- you need strict latency/cost bands,

- you have clear task segmentation,

- or you’re balancing multiple vendors for availability/risk.

A practical near-term pattern: use GPT-5.4 (or Pro) for high-value, multi-step workflows, while keeping lighter models for narrow, deterministic tasks.
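That pattern can be sketched as a small routing function. The tier names, the `Task` fields, and the thresholds below are hypothetical placeholders chosen for illustration, not real model identifiers or recommended values.

```python
from dataclasses import dataclass

FRONTIER_TIER = "frontier-model"  # placeholder for a GPT-5.4-class model
LIGHT_TIER = "light-model"        # placeholder for a cheaper, faster model


@dataclass
class Task:
    description: str
    estimated_steps: int              # expected tool or action steps
    needs_computer_use: bool = False  # must drive a browser or desktop UI
    latency_budget_ms: int = 10_000   # how long the caller can wait


def route(task: Task) -> str:
    """Pick a model tier from coarse task attributes."""
    # A strict latency band wins over everything else.
    if task.latency_budget_ms < 1_000:
        return LIGHT_TIER
    # Multi-step or UI-driving work goes to the frontier tier.
    if task.needs_computer_use or task.estimated_steps > 3:
        return FRONTIER_TIER
    # Narrow, deterministic tasks stay on the light tier.
    return LIGHT_TIER


print(route(Task("classify a support ticket", estimated_steps=1)))
print(route(Task("reconcile invoices in the vendor portal", estimated_steps=12,
                 needs_computer_use=True)))
```

Keeping the rules this explicit makes the routing easy to audit and to revise once end-to-end cost data for each tier is available.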

Decision 2: Do you redesign your tool registry now?

If your current setup pushes all tool schemas into every call, GPT-5.4’s tool-search approach justifies revisiting that design.

Priority migration candidates:

- MCP-heavy environments,

- broad connector catalogs,

- internal “tool sprawl” platforms.

Expected gain: lower token overhead and less context crowding without shrinking capability surface area.

Decision 3: Is browser/desktop automation now in scope?

Many teams previously avoided UI-level automation because it was flaky, expensive, or hard to steer safely. GPT-5.4’s computer-use positioning suggests this category is becoming mainstream.

Still, production readiness requires:

- strict policy gating,

- confirmation checkpoints for risky actions,

- robust logging and replay,

- and clear fallback paths.

OpenAI’s own safety language around cyber risk and request-level protections is a reminder: capability and control have to ship together.
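The four requirements above can be sketched as a thin gate that sits between the model's proposed action and its execution. Everything here is an assumption for illustration: the action names, the `ActionGate` class, and the confirmation callback are hypothetical and do not correspond to any vendor API.

```python
from dataclasses import dataclass, field
from typing import Callable

BLOCKED_ACTIONS = {"change_password"}                     # policy: never allowed
RISKY_ACTIONS = {"delete", "submit_payment", "send_email"}  # need confirmation


@dataclass
class ActionGate:
    confirm: Callable[[str, str], bool]   # asks a human (or policy engine) to approve
    log: list[dict] = field(default_factory=list)

    def execute(self, action: str, target: str, run: Callable[[], str]) -> str:
        """Apply policy, ask for confirmation if needed, then run and record."""
        if action in BLOCKED_ACTIONS:
            outcome = "blocked"
        elif action in RISKY_ACTIONS and not self.confirm(action, target):
            outcome = "declined"
        else:
            outcome = run()
        # Every decision is recorded, including refusals, so a run can be audited.
        self.log.append({"action": action, "target": target, "outcome": outcome})
        return outcome


# A confirmation callback that rejects everything, standing in for a human reviewer.
gate = ActionGate(confirm=lambda action, target: False)
results = [
    gate.execute("click", "#refresh", lambda: "ok"),
    gate.execute("delete", "row 7", lambda: "ok"),
    gate.execute("change_password", "admin", lambda: "ok"),
]
print(results)
```

Returning "blocked" or "declined" instead of raising gives the caller a clear fallback path: it can hand the step to a person or choose a safer action, and the log provides the record needed to reconstruct what happened.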

Decision 4: How do you budget for capability vs efficiency?

OpenAI states GPT-5.4 is priced higher per token than GPT-5.2, while also claiming higher token efficiency. Translation: your total cost curve depends on workflow design, not sticker price alone.

Teams should benchmark end-to-end cost and time per completed task, not just per-token input and output prices.
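A small worked example shows how a pricier model can still come out cheaper per completed task. All prices, token counts, and success rates below are invented for illustration and are not OpenAI's figures. The calculation also assumes that a failed attempt is simply retried and that attempts succeed independently.

```python
def cost_per_success(input_tokens: int, output_tokens: int,
                     input_price_per_m: float, output_price_per_m: float,
                     success_rate: float) -> float:
    """Expected spend per successfully completed task.

    One attempt costs tokens times price; with independent retries the
    expected number of attempts per success is 1 / success_rate.
    """
    attempt = (input_tokens / 1_000_000 * input_price_per_m
               + output_tokens / 1_000_000 * output_price_per_m)
    return attempt / success_rate


# Hypothetical older model: cheaper per token, more tokens, lower success rate.
older = cost_per_success(40_000, 4_000, input_price_per_m=2.00,
                         output_price_per_m=10.00, success_rate=0.70)

# Hypothetical newer model: pricier per token, fewer tokens, higher success rate.
newer = cost_per_success(25_000, 3_000, input_price_per_m=2.50,
                         output_price_per_m=15.00, success_rate=0.85)

print(f"older: ${older:.4f} per completed task")
print(f"newer: ${newer:.4f} per completed task")
```

With these made-up inputs the newer model wins despite the higher sticker price. Different token counts or success rates could reverse the result, which is why the comparison has to be measured on your own workloads.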

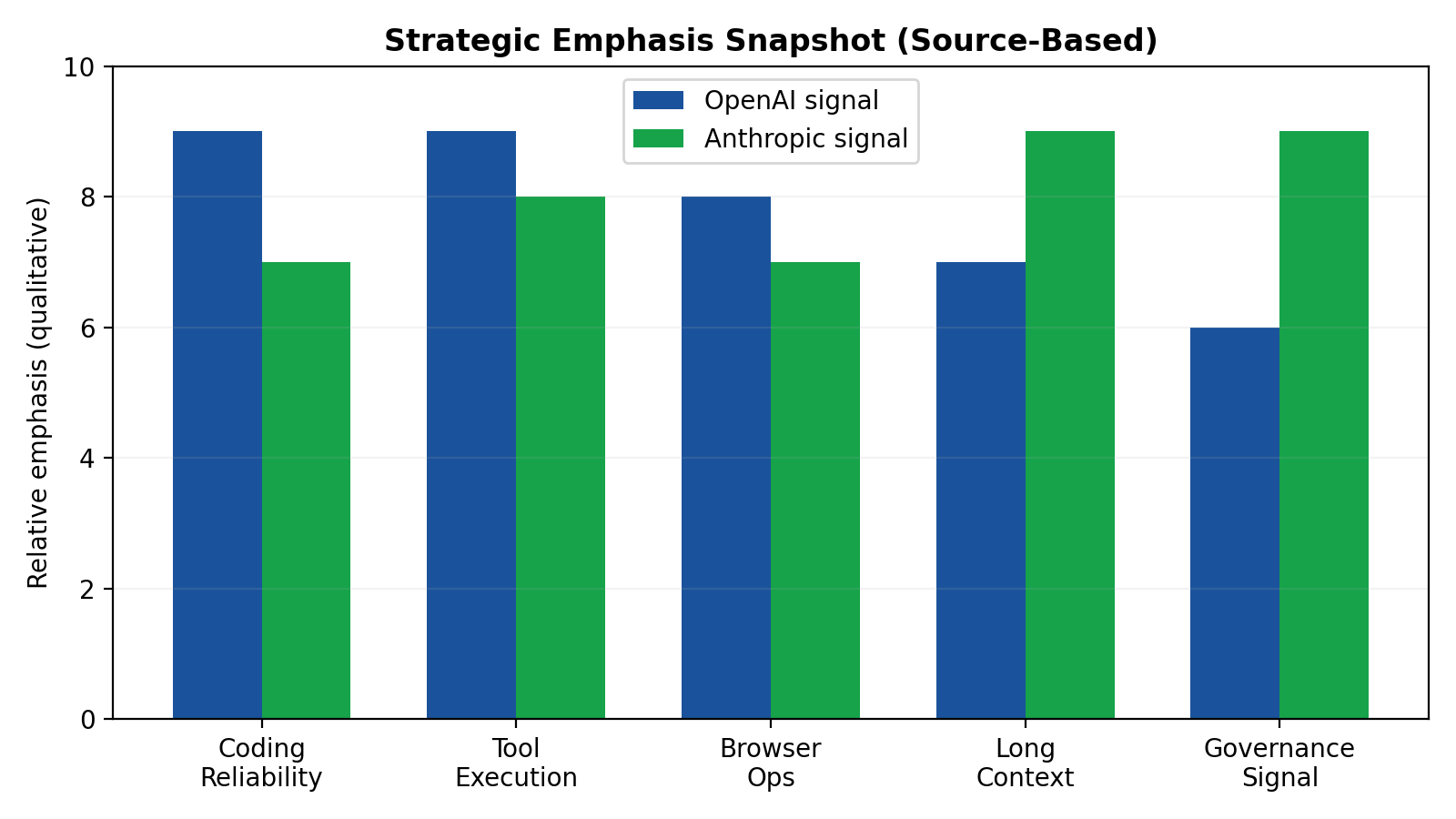

OpenAI vs Anthropic: strategic signals (product + policy)

OpenAI signal: integrated capability stack for builders

From the GPT-5.4 release, OpenAI’s direction is operationally clear:

- merge top-tier coding and reasoning into a mainline model,

- improve agent reliability across large tool ecosystems,

- and expand computer-use workflows for real task execution.

This is a product strategy optimized for developer throughput and enterprise task completion, with explicit attention to token efficiency and workflow latency.

Anthropic signal: broadened product surface plus explicit governance posture

Across the three Anthropic-related sources, the signal is two-layered:

- Product expansion (TechCrunch reporting): Opus 4.6 adds agent teams, 1M context, and broader knowledge-worker positioning beyond software engineering.

- Public-interest/policy institutionalization (Anthropic announcement + CNBC context): Anthropic launched The Anthropic Institute (bringing together red teaming, societal impacts, and economic research) and expanded its public policy operations. It is engaging with governance questions while also managing ongoing policy friction around defense-related availability.

What this means for builders right now

Without speculating beyond the cited sources, the contrast looks like this:

- OpenAI: emphasizes integrated execution performance for professional workflows.

- Anthropic: advances product capability while simultaneously formalizing policy/public-benefit infrastructure and navigating high-visibility policy constraints.

For engineering orgs, this reinforces a familiar conclusion: vendor choice is no longer purely a model-quality decision. It is now a capability + governance + availability-context decision.

Sources

- https://openai.com/index/introducing-gpt-5-4/

- https://www.anthropic.com/news/the-anthropic-institute

- https://www.cnbc.com/2026/03/06/google-says-anthropic-remains-available-outside-of-defense-projects.html

- https://techcrunch.com/2026/02/05/anthropic-releases-opus-4-6-with-new-agent-teams/