Category: Fine-tuning & Alignment

Abstract

Low-Rank Adaptation (LoRA) constrains parameter updates to a low-dimensional subspace by representing each layer update as a rank-\(r\) factorization. This reduces trainable parameters and optimizer state while preserving much of the performance of full fine-tuning in many practical settings. The central assumption is that task-relevant weight changes are approximately low-rank, or at least concentrated in a few dominant directions. This article formalizes LoRA as \(\Delta W = BA\), relates it to unconstrained full fine-tuning, and analyzes the approximation from a truncated singular value decomposition perspective. We derive parameter, memory, and compute scaling laws, then connect LoRA behavior to spectral properties of gradients and local curvature. We also identify regimes where the low-rank assumption becomes fragile: large distribution shift, strongly compositional transformations, multimodal fusion demands, and layers that are under-ranked relative to their intrinsic adaptation dimension. Practical diagnostics are presented, including singular value decay of update surrogates, layerwise sensitivity probing, and validation loss under rank sweeps. A numeric example based on 7B-scale transformer dimensions illustrates concrete trade-offs for \(r \in \{4, 8, 16, 64\}\). The conclusion is operational: LoRA is best viewed as a rank budget allocation problem over layers and tasks, not a universally valid replacement for full fine-tuning.

LoRA Formulation

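Consider a pretrained parameter matrix \(W_0 \in \mathbb{R}^{d_{\text{out}} \times d_{\text{in}}}\). Full fine-tuning learns an unconstrained update \(\Delta W\) so that

\[
W = W_0 + \Delta W.
\]

For a linear transform \(h = W_0 x\), full adaptation modifies the output by

\[
h' = (W_0 + \Delta W)\,x = h + \Delta W\,x.
\]

LoRA constrains \(\Delta W\) to rank at most \(r\):

\[
\Delta W = BA
\]

with

\[
B \in \mathbb{R}^{d_{\text{out}} \times r}, \qquad A \in \mathbb{R}^{r \times d_{\text{in}}}, \qquad r \ll \min(d_{\text{in}}, d_{\text{out}}).
\]

A common parameterization scales the update by \(\alpha / r\):

\[
W = W_0 + \frac{\alpha}{r}\,BA,
\]

where \(\alpha\) controls effective update magnitude. Typical initialization sets \(B\) to zero and \(A\) random (or vice versa), ensuring the initial forward pass matches the base model.

For a transformer layer with projections \(W_Q, W_K, W_V, W_O\), LoRA is often applied to a subset, frequently \(W_Q\) and \(W_V\), sometimes all attention and MLP projections.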
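The formulation above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the sizes, the seed, and the helper name `lora_forward` are assumptions chosen for the example. It shows that with the up-projection initialized to zero, the adapted layer reproduces the base output exactly.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, r, alpha = 64, 48, 4, 8.0   # illustrative sizes (assumed, not from the article)

W0 = rng.standard_normal((d_out, d_in))      # frozen pretrained weight
A = rng.standard_normal((r, d_in)) * 0.01    # random down-projection (trainable)
B = np.zeros((d_out, r))                     # zero up-projection, so B @ A = 0 at init

def lora_forward(x, W0, A, B, alpha, r):
    """Compute W0 x + (alpha / r) * B (A x) without forming the full d_out x d_in update."""
    return W0 @ x + (alpha / r) * (B @ (A @ x))

x = rng.standard_normal(d_in)
h_base = W0 @ x
h_init = lora_forward(x, W0, A, B, alpha, r)

n_trainable = A.size + B.size                # equals r * (d_in + d_out)
```

Computing `B @ (A @ x)` rather than `(B @ A) @ x` keeps the adapter cost proportional to the rank instead of to the full matrix size.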

Relation to Full Fine-Tuning as a Constrained Optimization

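Let \(\mathcal{L}(W)\) be the downstream loss. Full fine-tuning solves

\[
\min_{\Delta W \in \mathbb{R}^{d_{\text{out}} \times d_{\text{in}}}} \mathcal{L}(W_0 + \Delta W).
\]

LoRA solves the constrained problem

\[
\min_{A,\,B} \ \mathcal{L}\!\left(W_0 + \frac{\alpha}{r}\,BA\right),
\]

which is equivalent to minimizing over \(\Delta W\) in the nonconvex set

\[
\mathcal{M}_r = \{\Delta W \in \mathbb{R}^{d_{\text{out}} \times d_{\text{in}}} : \operatorname{rank}(\Delta W) \le r\}.
\]

Hence LoRA can be interpreted as projecting adaptation onto a low-dimensional matrix manifold. The optimization is nonconvex in \((A, B)\), but the effective degrees of freedom are drastically reduced.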

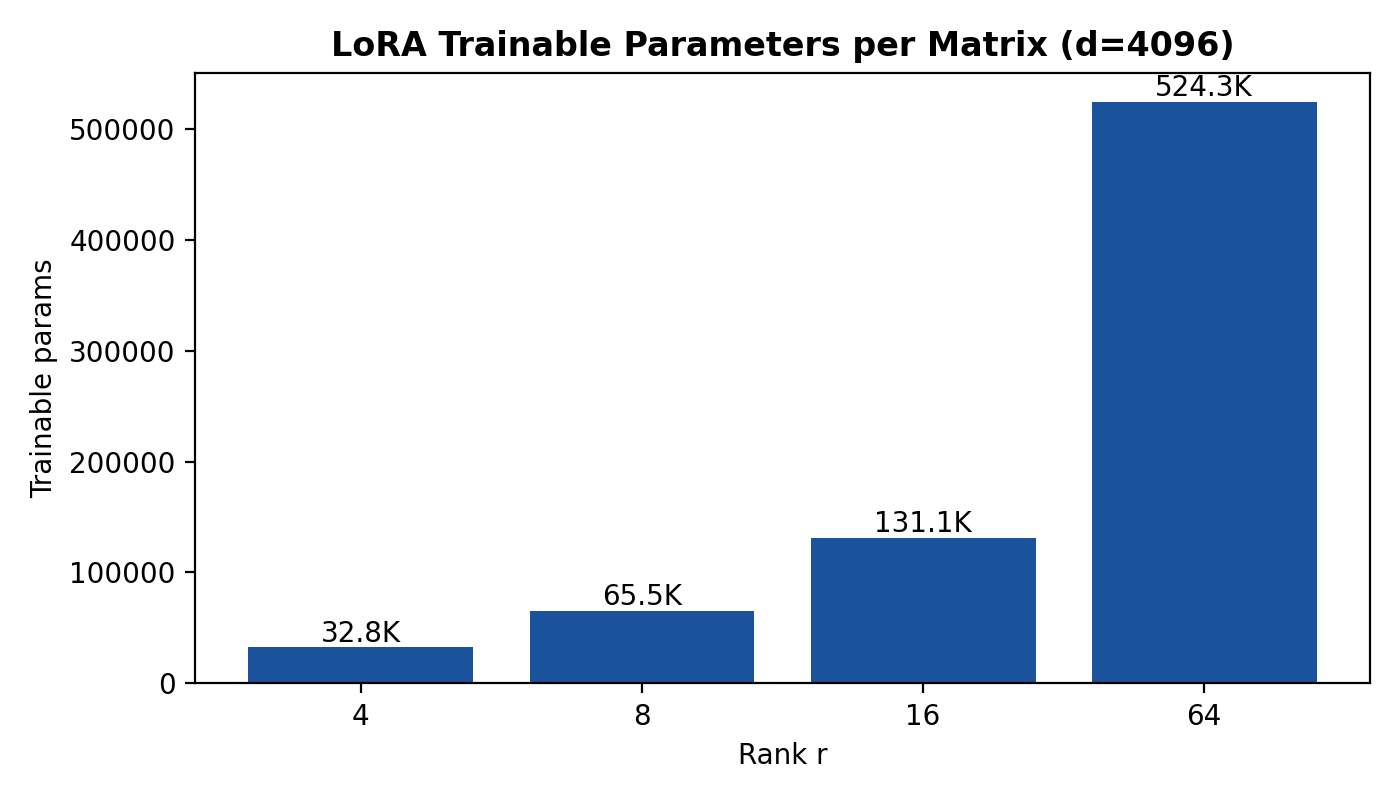

Parameter Count and State Complexity

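For one matrix \(W \in \mathbb{R}^{d_{\text{out}} \times d_{\text{in}}}\):

- Full fine-tuning trainable parameters: \(d_{\text{in}}\, d_{\text{out}}\).

- LoRA trainable parameters: \(r\,(d_{\text{in}} + d_{\text{out}})\).

Reduction factor (LoRA trainables as a fraction of full fine-tuning):

\[
\rho = \frac{r\,(d_{\text{in}} + d_{\text{out}})}{d_{\text{in}}\, d_{\text{out}}}.
\]

When \(d_{\text{in}} = d_{\text{out}} = d\),

\[
\rho = \frac{2r}{d}.
\]

For \(d = 4096\), this is about \(0.20\%\) at \(r=4\), \(0.39\%\) at \(r=8\), \(0.78\%\) at \(r=16\), and \(3.1\%\) at \(r=64\).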
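The counts above are easy to check numerically. The following is a small sketch; the function names are hypothetical helpers introduced only for this illustration, and the rank set is the one used in the article's numeric example.

```python
def full_params(d_in, d_out):
    """Trainable parameters when the whole matrix is fine-tuned."""
    return d_in * d_out

def lora_params(d_in, d_out, r):
    """Trainable parameters of a rank-r adapter: A is r x d_in, B is d_out x r."""
    return r * (d_in + d_out)

def lora_fraction(d_in, d_out, r):
    """LoRA trainables as a fraction of full fine-tuning trainables."""
    return lora_params(d_in, d_out, r) / full_params(d_in, d_out)

for r in (4, 8, 16, 64):
    # Square 4096 x 4096 projection: the fraction reduces to 2r / d.
    print(f"r={r:>2}: {lora_params(4096, 4096, r):>7} params, "
          f"{lora_fraction(4096, 4096, r):.3%} of full")
```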

Absolute memory savings are larger than the parameter count alone suggests, because full fine-tuning with Adam-like optimizers stores a gradient and two moment estimates for every trainable parameter in addition to the parameter itself. Ignoring activation memory and checkpointing details, and assuming bf16 weights with fp32 moments, trainable-state memory scales roughly linearly with trainable parameter count. LoRA therefore yields approximately the same multiplicative reduction in gradient and optimizer-state memory as in trainable parameters; the frozen base weights must still be held in memory.
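A rough byte count makes the scaling concrete. This sketch assumes bf16 weights and fp32 Adam moments as stated above; storing gradients in bf16 is an additional assumption of the example (some setups keep fp32 gradients or fp32 master weights, which changes the constant but not the linear scaling). The function name is hypothetical.

```python
BYTES_BF16, BYTES_FP32 = 2, 4

def trainable_state_bytes(n_trainable, grad_bytes=BYTES_BF16):
    """Bytes for weights + gradients + two Adam moments of the trainable parameters only."""
    weights = n_trainable * BYTES_BF16
    grads = n_trainable * grad_bytes          # assumption: bf16 gradients by default
    moments = 2 * n_trainable * BYTES_FP32    # first and second moment in fp32
    return weights + grads + moments

# One 4096 x 4096 projection: full fine-tuning vs. a rank-16 adapter.
full_bytes = trainable_state_bytes(4096 * 4096)
lora_bytes = trainable_state_bytes(16 * (4096 + 4096))
ratio = lora_bytes / full_bytes               # same as the parameter fraction 2r / d
```

Activation memory and the frozen base weights are excluded here, so the ratio describes only the trainable state.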

Forward/Backward Compute Overhead

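At inference, if LoRA is merged into \(W_0\), no additional matrix multiplications are needed. Without merge, per token a linear layer computes

\[
h = W_0 x + \frac{\alpha}{r}\,B(Ax).
\]

Extra cost is approximately

\[
r\,(d_{\text{in}} + d_{\text{out}})
\]

multiply-add units, versus \(d_{\text{in}}\, d_{\text{out}}\) for the base projection. Relative overhead:

\[
\frac{r\,(d_{\text{in}} + d_{\text{out}})}{d_{\text{in}}\, d_{\text{out}}}.
\]

For square \(W\) with \(d_{\text{in}} = d_{\text{out}} = d\), the overhead is \(2r/d\), usually small for \(r \ll d\).

Training compute is subtler: forward/backward through frozen \(W_0\) remains, but gradient updates are computed only for \(A\) and \(B\). This removes full-weight optimizer updates and moment maintenance, reducing step cost and significantly reducing memory bandwidth pressure.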
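The merged and unmerged inference paths can be compared directly. The sketch below uses small, arbitrary sizes (an assumption for illustration) and checks that folding the scaled adapter into the base weight gives the same output as the two-step adapter path, then computes the multiply-add overhead of the unmerged path.

```python
import numpy as np

rng = np.random.default_rng(1)
d_in, d_out, r, alpha = 32, 24, 4, 8.0       # illustrative sizes (assumed)
W0 = rng.standard_normal((d_out, d_in))
A = rng.standard_normal((r, d_in))
B = rng.standard_normal((d_out, r))
x = rng.standard_normal(d_in)
scale = alpha / r

# Unmerged: base projection plus a rank-r side path.
h_unmerged = W0 @ x + scale * (B @ (A @ x))

# Merged: fold the adapter into the weight once, then use a single matmul.
W_merged = W0 + scale * (B @ A)
h_merged = W_merged @ x

# Per-token multiply-adds of the side path relative to the base projection.
extra_macs = r * (d_in + d_out)
base_macs = d_in * d_out
relative_overhead = extra_macs / base_macs
```

Merging removes the overhead entirely but fixes one adapter per weight copy; keeping adapters unmerged is the usual choice when several adapters share one base model.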

Why Low-Rank Can Work

LoRA’s success depends on an empirical rank-deficiency hypothesis: the task-induced update that full fine-tuning would learn has most of its energy in a low-dimensional subspace.

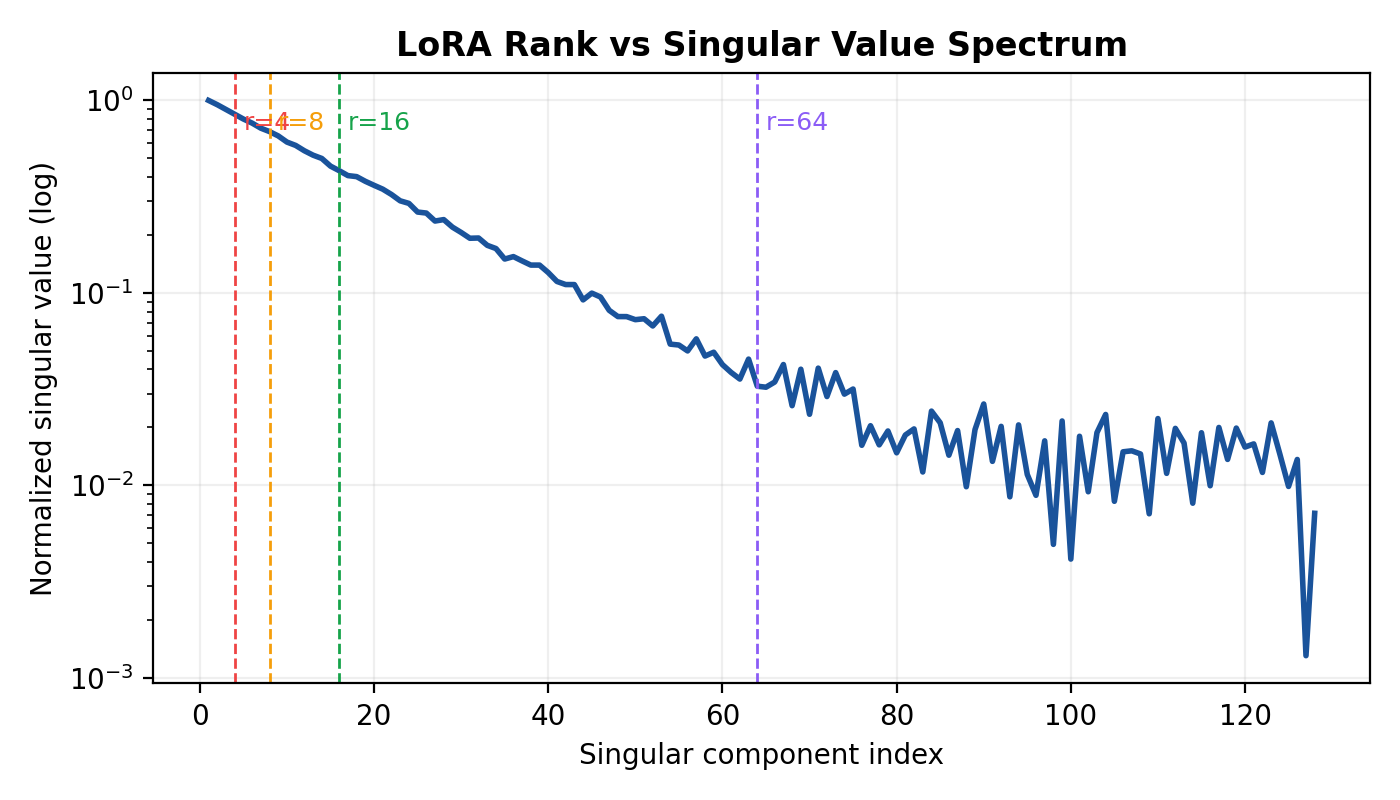

Spectral View of Updates

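Suppose the full optimum update for a layer has SVD

\[
\Delta W^* = U \Sigma V^\top = \sum_{i} \sigma_i\, u_i v_i^\top, \qquad \sigma_1 \ge \sigma_2 \ge \dots \ge 0.
\]

If \(\sigma_i\) decays rapidly, rank-\(r\) truncation

\[
\Delta W_r = \sum_{i=1}^{r} \sigma_i\, u_i v_i^\top
\]

captures most Frobenius energy:

\[
\frac{\|\Delta W_r\|_F^2}{\|\Delta W^*\|_F^2} = \frac{\sum_{i \le r} \sigma_i^2}{\sum_{i} \sigma_i^2} \approx 1.
\]

Then constraining \(\Delta W\) to rank at most \(r\) imposes limited bias.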
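As an illustration of the spectral view, the sketch below builds a synthetic update whose singular values decay geometrically (a made-up spectrum, not measured from any model) and reports how much Frobenius energy a rank-5 truncation retains.

```python
import numpy as np

rng = np.random.default_rng(2)
d_out, d_in, r = 40, 30, 5                      # illustrative sizes (assumed)

# Synthetic update with prescribed singular values sigma_i = 0.5 ** i.
U, _ = np.linalg.qr(rng.standard_normal((d_out, d_in)))   # orthonormal columns, d_out x d_in
V, _ = np.linalg.qr(rng.standard_normal((d_in, d_in)))    # orthogonal, d_in x d_in
sigma = 0.5 ** np.arange(d_in)
delta_w = (U * sigma) @ V.T                     # U diag(sigma) V^T

# Rank-r truncation via SVD.
u, s, vt = np.linalg.svd(delta_w, full_matrices=False)
delta_w_r = (u[:, :r] * s[:r]) @ vt[:r]

# Fraction of squared Frobenius norm kept by the top-r singular directions.
energy = np.sum(s[:r] ** 2) / np.sum(s ** 2)
residual_sq = np.linalg.norm(delta_w - delta_w_r, "fro") ** 2
```

With this fast decay the top five directions hold over 99% of the energy; a flat spectrum would give only about `r / d_in`.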

First-Order Gradient Subspace Argument

Locally, a gradient step gives

\[
\Delta W \approx -\eta\, \nabla_W \mathcal{L}(W_0).
\]

If \(\nabla_W \mathcal{L}(W_0)\) is approximately low-rank, then a low-rank adapter can represent the dominant descent directions. Empirically, gradients in overparameterized transformers often exhibit anisotropy: a few singular directions carry much of the norm, especially for task-specific heads and middle/upper attention blocks.
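One structural reason a weight gradient can be low-rank is worth seeing in code: for a linear layer, the gradient is a sum of one outer product per token, so its rank cannot exceed the number of tokens in the batch. The sketch below uses a squared-error loss and random data purely as an assumption for illustration; it demonstrates this exact rank bound, not the empirical anisotropy claim above.

```python
import numpy as np

rng = np.random.default_rng(3)
n_tokens, d_in, d_out = 6, 50, 40               # illustrative sizes (assumed)
X = rng.standard_normal((n_tokens, d_in))       # inputs, one row per token
Y = rng.standard_normal((n_tokens, d_out))      # regression targets (hypothetical task)
W = rng.standard_normal((d_out, d_in))

# Loss: 0.5 * sum_i ||W x_i - y_i||^2, so dL/dh_i = W x_i - y_i.
residual = X @ W.T - Y                          # n_tokens x d_out

# dL/dW = sum_i (dL/dh_i) x_i^T, written as a single matrix product.
G = residual.T @ X                              # d_out x d_in

grad_rank = np.linalg.matrix_rank(G)            # bounded by n_tokens
```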

Curvature and Effective Dimension

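Under second-order expansion around \(W_0\):

\[
\mathcal{L}(W_0 + \Delta W) \approx \mathcal{L}(W_0) + \langle G, \Delta W \rangle + \tfrac{1}{2}\, \operatorname{vec}(\Delta W)^\top H \operatorname{vec}(\Delta W),
\]

where \(G = \nabla_W \mathcal{L}(W_0)\) and \(H\) is a Hessian (or block Hessian approximation). If large curvature directions align with a low-dimensional subspace that also overlaps with task gradient directions, adaptation is effectively low-dimensional. In that regime, adding parameters outside this subspace yields diminishing returns.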

Task Similarity and Localized Adaptation

LoRA tends to work when:

- Downstream task is near pretraining manifold. Required representation shifts are incremental.

- Adaptation is localized. Only certain layers/projections need modification.

- Loss landscape is smooth near \(W_0\). Small, structured updates suffice.

- Prompt format and token statistics are familiar. Distribution support overlap reduces need for broad reconfiguration.

In these settings, full fine-tuning may spend many parameters modeling weak corrections that contribute little to validation error relative to a compact low-rank update.

Error Analysis

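Best Rank-\(r\) Approximation Bound

By Eckart–Young–Mirsky, the best rank-\(r\) approximation of the full update \(\Delta W^* = \sum_i \sigma_i\, u_i v_i^\top\) in Frobenius norm is its truncated SVD:

\[
\Delta W_r = \arg\min_{\operatorname{rank}(X) \le r} \|\Delta W^* - X\|_F = \sum_{i=1}^{r} \sigma_i\, u_i v_i^\top
\]

with error

\[
\|\Delta W^* - \Delta W_r\|_F^2 = \sum_{i > r} \sigma_i^2.
\]

In spectral norm:

\[
\|\Delta W^* - \Delta W_r\|_2 = \sigma_{r+1}.
\]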

This quantifies the approximation bias induced by rank restriction.
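Both error identities can be verified numerically on any matrix. The sketch below uses a random matrix as a stand-in for a full update (an assumption for illustration) and compares the truncation error against the singular tail.

```python
import numpy as np

rng = np.random.default_rng(4)
M = rng.standard_normal((20, 15))               # stand-in for a full update (assumed)
r = 4

u, s, vt = np.linalg.svd(M, full_matrices=False)
M_r = (u[:, :r] * s[:r]) @ vt[:r]               # truncated SVD, rank r

fro_err = np.linalg.norm(M - M_r, "fro")        # should equal sqrt(sum_{i>r} sigma_i^2)
spec_err = np.linalg.norm(M - M_r, 2)           # should equal sigma_{r+1}

tail_fro = np.sqrt(np.sum(s[r:] ** 2))
sigma_next = s[r]                               # zero-based index r is sigma_{r+1}
```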

Translating Parameter Error to Loss Gap

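Assume \(\mathcal{L}\) is \(K\)-Lipschitz in \(W\) with respect to the Frobenius norm. Then

\[
\left|\mathcal{L}(W_0 + \Delta W_r) - \mathcal{L}(W_0 + \Delta W^*)\right| \le K\, \|\Delta W^* - \Delta W_r\|_F = K \sqrt{\sum_{i > r} \sigma_i^2}.
\]

This upper bound is loose but operationally useful: if singular tail energy decays rapidly, the loss penalty for low-rank truncation is correspondingly small.

Under a strongly convex local model with curvature \(\mu\) around the optimum \(W_0 + \Delta W^*\), one also gets a lower bound relating excess loss to parameter error:

\[
\mathcal{L}(W_0 + \Delta W_r) - \mathcal{L}(W_0 + \Delta W^*) \ge \frac{\mu}{2}\, \|\Delta W_r - \Delta W^*\|_F^2 = \frac{\mu}{2} \sum_{i > r} \sigma_i^2.
\]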

Hence both curvature and spectral tail jointly determine practical impact.
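A toy quadratic loss shows how curvature and the singular tail combine. The loss, the curvature range, and all names below are assumptions for illustration. The lower bound is the strong-convexity relation discussed above; the upper bound shown is the analogous smoothness bound for this quadratic, not the Lipschitz bound.

```python
import numpy as np

rng = np.random.default_rng(5)
mu, m_max = 0.5, 2.0                              # curvature range (assumed)
target = rng.standard_normal((12, 10))            # plays the role of the optimal update
curv = rng.uniform(mu, m_max, size=target.shape)  # per-entry curvature in [mu, m_max)

def loss(dw):
    """Separable quadratic minimized at dw == target, with curvature between mu and m_max."""
    return 0.5 * np.sum(curv * (dw - target) ** 2)

r = 3
u, s, vt = np.linalg.svd(target, full_matrices=False)
dw_r = (u[:, :r] * s[:r]) @ vt[:r]                # rank-r truncation of the optimum

tail = np.sum(s[r:] ** 2)                         # squared Frobenius truncation error
excess = loss(dw_r) - loss(target)                # loss gap caused by truncation
lower = 0.5 * mu * tail
upper = 0.5 * m_max * tail
```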

Multi-Layer Allocation

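For layer \(\ell\), let singular values of full update be \(\sigma_{\ell,1} \ge \sigma_{\ell,2} \ge \dots\). With per-layer rank \(r_\ell\), total truncation error is approximately

\[
E(r_1, r_2, \dots) = \sum_{\ell} \sum_{i > r_\ell} \sigma_{\ell,i}^2.
\]

Given rank budget \(\sum_\ell r_\ell \le R\), optimal allocation places rank where marginal tail reduction is largest:

\[
\ell^{\star} = \arg\max_{\ell}\ \sigma_{\ell,\, r_\ell + 1}^2.
\]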

This formalizes why uniform rank across layers can be suboptimal.
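The allocation rule can be sketched as a greedy loop over per-layer spectra. This is an illustrative sketch under a simplifying assumption: every unit of rank costs the same in every layer (in practice cost scales with each layer's input and output width). The function names and the toy spectra are hypothetical.

```python
import heapq

def greedy_rank_allocation(spectra, budget):
    """Assign `budget` rank units, one at a time, to the layer whose next
    singular value removes the most tail energy.
    spectra: list of per-layer singular values, each sorted in descending order."""
    ranks = [0] * len(spectra)
    heap = [(-s[0] ** 2, layer) for layer, s in enumerate(spectra) if s]
    heapq.heapify(heap)
    for _ in range(budget):
        if not heap:
            break
        _, layer = heapq.heappop(heap)
        ranks[layer] += 1
        nxt = ranks[layer]
        if nxt < len(spectra[layer]):
            heapq.heappush(heap, (-spectra[layer][nxt] ** 2, layer))
    return ranks

def tail_energy(spectra, ranks):
    """Sum over layers of squared singular values beyond each layer's rank."""
    return sum(sum(v * v for v in s[k:]) for s, k in zip(spectra, ranks))

# Three toy layers: one with three strong directions, one with a single one, one flat.
spectra = [[5.0, 4.0, 3.0, 0.1], [2.0, 0.2, 0.1, 0.05], [1.0, 0.9, 0.8, 0.7]]
greedy = greedy_rank_allocation(spectra, budget=6)
uniform = [2, 2, 2]
```

On these toy spectra the greedy allocation concentrates rank in the first layer and leaves far less tail energy than the uniform split with the same total rank.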

Numeric Example: 7B-Scale Dimensions

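Use representative dimensions \(d = 4096\), MLP expansion \(d_{\text{ff}} = 11008\), 32 layers.

Per matrix trainable counts:

- Attention projection \(4096 \times 4096\): full \(16{,}777{,}216\).

- MLP up projection \(11008 \times 4096\): full \(45{,}088{,}768\).

- MLP down projection \(4096 \times 11008\): full \(45{,}088{,}768\).

LoRA counts:

\[
P_{\text{LoRA}}(r) = r\,(d_{\text{in}} + d_{\text{out}}).
\]

So for attention projections: \(r\,(4096 + 4096) = 8192\,r\). For MLP projections: \(r\,(4096 + 11008) = 15104\,r\).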

Concrete values:

| Matrix | Full FT | \(r=4\) | \(r=8\) | \(r=16\) | \(r=64\) |
|---|---|---|---|---|---|
| Attn | 16,777,216 | 32,768 | 65,536 | 131,072 | 524,288 |
| MLP | 45,088,768 | 60,416 | 120,832 | 241,664 | 966,656 |

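Relative to full FT (for \(r = 4, 8, 16, 64\)):

- Attention matrix: \(0.195\%\), \(0.391\%\), \(0.781\%\), \(3.125\%\).

- MLP matrix: \(0.134\%\), \(0.268\%\), \(0.536\%\), \(2.144\%\).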

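If LoRA is attached to \(W_Q\) and \(W_V\) only (two matrices per layer):

\[
P_{\text{layer}}(r) = 2 \cdot 8192\,r = 16{,}384\,r.
\]

Across 32 layers:

\[
P_{\text{total}}(r) = 32 \cdot 16{,}384\,r = 524{,}288\,r.
\]

Thus total trainables are:

- \(r=4\): 2,097,152

- \(r=8\): 4,194,304

- \(r=16\): 8,388,608

- \(r=64\): 33,554,432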

These counts are far below full-model fine-tuning scales, explaining why LoRA enables adaptation on limited hardware.
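The table and totals in this section can be reproduced with a short script. The constants mirror the dimensions used in this example; the names are hypothetical, and the two-adapters-per-layer setup is the one described above.

```python
D_MODEL, D_FF, N_LAYERS = 4096, 11008, 32
RANKS = (4, 8, 16, 64)

def lora_params(d_in, d_out, r):
    """Trainable parameters of one rank-r adapter."""
    return r * (d_in + d_out)

full_attn = D_MODEL * D_MODEL
full_mlp = D_MODEL * D_FF

attn = {r: lora_params(D_MODEL, D_MODEL, r) for r in RANKS}
mlp = {r: lora_params(D_MODEL, D_FF, r) for r in RANKS}

# Two attention adapters per layer, across all layers.
total_two_attn = {r: N_LAYERS * 2 * attn[r] for r in RANKS}

for r in RANKS:
    print(f"r={r:>2}  attn={attn[r]:>7} ({attn[r] / full_attn:.3%})  "
          f"mlp={mlp[r]:>7} ({mlp[r] / full_mlp:.3%})  total={total_two_attn[r]:>10,}")
```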

Empirical Failure Modes

The low-rank constraint fails when the intrinsic update rank is high or when rank is misallocated.

1. Large Distribution Shift

If the downstream data distribution differs strongly from the pretraining distribution, many features must be reconfigured. In language modeling terms, token co-occurrence, style, syntax, and long-range dependencies may all shift simultaneously. The resulting \(\Delta W^*\) often has slower spectral decay, increasing the tail energy \(\sum_{i > r} \sigma_i^2\).

Observable symptoms:

- Validation loss plateaus early for small \(r\).

- Increasing \(r\) yields monotonic, substantial gains up to high ranks.

- Layerwise gradient spectra become flatter.

2. Compositional or Multi-Skill Tasks

Tasks requiring simultaneous acquisition of several independent transformations (e.g., domain transfer + strict formatting + tool-grounded reasoning + style constraints) can induce near-additive update components. If these components lie in distinct subspaces, required rank grows roughly with number of independent factors.

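Formally, if

\[
\Delta W^* = \sum_{j=1}^{m} \Delta W_j, \qquad \operatorname{rank}(\Delta W_j) = r_j,
\]

with weakly aligned singular spaces, effective rank can approach \(\sum_{j} r_j\). Under-ranked LoRA then forces interference between components.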
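The additivity of rank is easy to observe with random components, which generically occupy unrelated subspaces. The component ranks and sizes below are arbitrary assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(6)
d_out, d_in = 30, 30                            # illustrative sizes (assumed)
component_ranks = [2, 3, 1]                     # one low-rank piece per hypothetical skill

# Each component is a random low-rank matrix; random subspaces are generically unaligned.
components = [
    rng.standard_normal((d_out, k)) @ rng.standard_normal((k, d_in))
    for k in component_ranks
]
total = sum(components)

total_rank = np.linalg.matrix_rank(total)       # adds up when subspaces do not overlap
```

If the components instead shared singular directions, the rank of the sum would be smaller, which is the favorable case for a low-rank adapter.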

3. Multimodal Adapters and Cross-Modal Alignment

In multimodal systems, adaptation may need to couple heterogeneous feature spaces (text, vision, audio). Cross-modal projections can require richer transformations than low-rank perturbations around a language-pretrained prior. If modality alignment errors are distributed across many channels, low \(r\) bottlenecks information routing.

This is often pronounced in projection layers bridging modality encoders to LLM token space, where singular spectra of optimal updates decay slowly.

4. Under-Ranked Critical Attention Layers

Not all layers are equally rank-efficient. Early layers may encode lexical priors, middle layers relational composition, and late layers task decoding behavior. If high-sensitivity layers receive insufficient rank, global performance degrades disproportionately.

A frequent mistake is uniform \(r\) across all target matrices. Under-ranked attention projections or specific mid-depth blocks can become bottlenecks even when total rank budget is high.

5. Long-Context and Retrieval-Heavy Regimes

Tasks requiring robust behavior over long contexts can demand coordinated changes to positional interaction patterns and attention routing across heads. Such changes may not be representable by low rank in only a few projections, especially when context-length extrapolation differs from pretraining conditions.

6. Alignment Under Strict Behavioral Constraints

Preference optimization or safety alignment sometimes imposes many small directional constraints across diverse prompts. If constraints are widespread rather than concentrated, low-rank adapters may satisfy some behaviors while regressing others, indicating insufficient adaptation dimension.

Diagnostics in Practice

Robust LoRA deployment benefits from direct measurement rather than fixed defaults.

1. Singular Value Decay Probing

Approximate update spectrum per layer using one of:

- Short full-FT pilot (few hundred steps), then SVD of \(\Delta W_{\text{pilot}} = W_{\text{pilot}} - W_0\).

- Accumulated gradient covariance surrogate.

- Low-rank Hessian-informed approximations (e.g., top eigenpairs + projected gradients).

Compute cumulative energy curve:

\[
E(k) = \frac{\sum_{i \le k} \sigma_i^2}{\sum_{i} \sigma_i^2}.
\]

Layers where \(E(k)\) saturates quickly are LoRA-friendly; slow saturation indicates higher needed rank.
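A cumulative energy curve and the rank it implies can be computed from any list of singular values. In this sketch the 90% threshold, the function names, and the two example spectra are assumptions for illustration, not values recommended by the article.

```python
def cumulative_energy(singular_values):
    """Fraction of total squared singular value mass captured by the top k values, for each k."""
    squares = [s * s for s in singular_values]
    total = sum(squares)
    curve, running = [], 0.0
    for v in squares:
        running += v
        curve.append(running / total)
    return curve

def rank_for_energy(singular_values, threshold=0.9):
    """Smallest k whose cumulative energy reaches the threshold."""
    for k, energy in enumerate(cumulative_energy(singular_values), start=1):
        if energy >= threshold:
            return k
    return len(singular_values)

fast_decay = [8.0, 4.0, 2.0, 1.0, 0.5, 0.25]   # saturates quickly: LoRA-friendly
flat = [1.0, 1.0, 1.0, 1.0, 1.0, 1.0]          # no decay: needs nearly full rank

rank_fast = rank_for_energy(fast_decay)
rank_flat = rank_for_energy(flat)
```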

2. Layerwise Sensitivity Analysis

Estimate effect of adapting each layer alone at fixed rank:

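- Insert LoRA in one layer \(\ell\), freeze others.

- Train for short budget.

- Measure validation improvement \(\Delta_\ell = \mathcal{L}_{\text{val}}^{\text{base}} - \mathcal{L}_{\text{val}}^{(\ell)}\).

This yields a priority ordering for rank allocation. A stronger variant computes marginal gains when adding rank increments \(\delta\) to each layer:

\[
g_\ell = \mathcal{L}_{\text{val}}(r_1, \dots, r_\ell, \dots) - \mathcal{L}_{\text{val}}(r_1, \dots, r_\ell + \delta, \dots).
\]

Allocate ranks greedily by largest \(g_\ell\).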

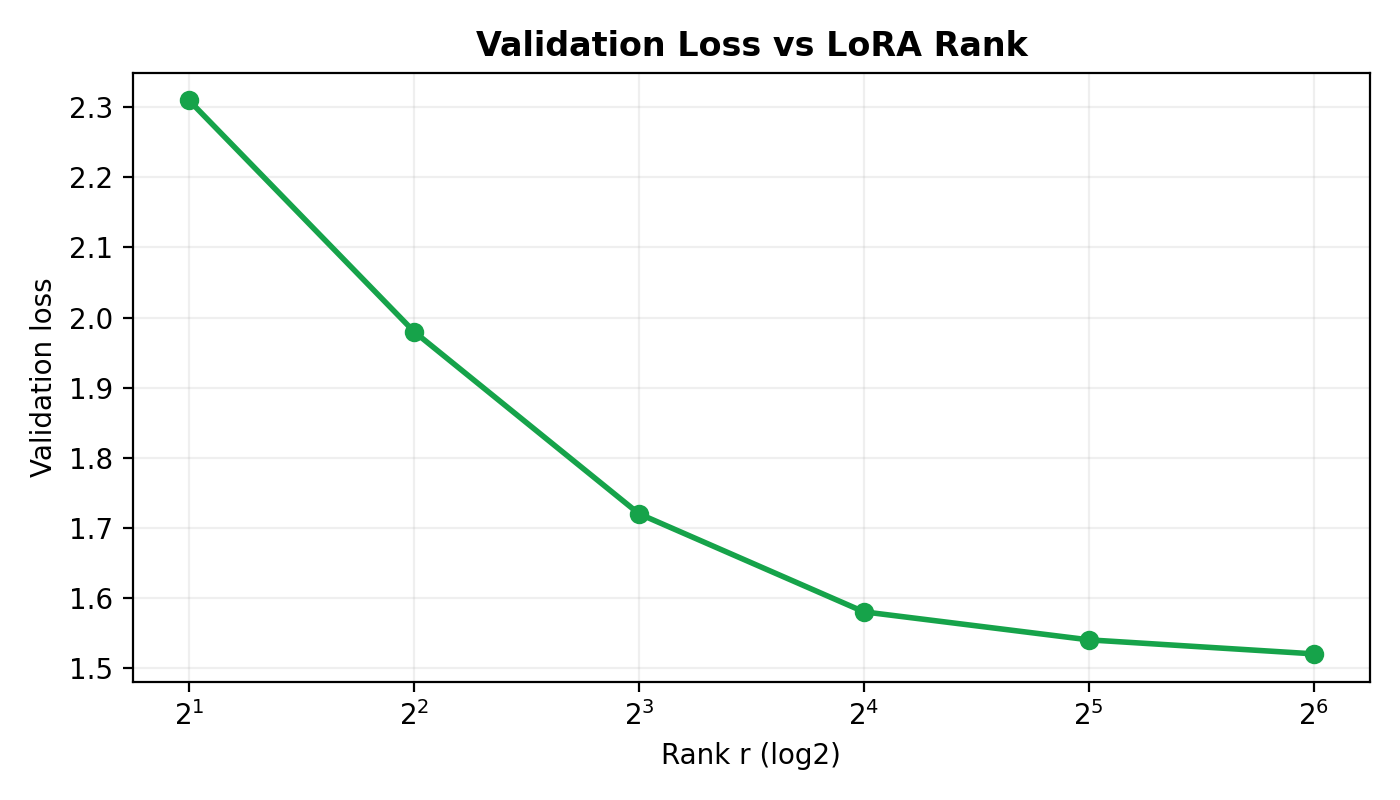

3. Validation Loss Rank Sweep

Run controlled experiments for \(r \in \{4, 8, 16, 64\}\), fixed data order and hyperparameters. Typical patterns:

- Fast saturation: minimal gain beyond \(r=8\) or \(r=16\), indicating low intrinsic rank.

- Gradual decline: gains persist to \(r=64\), indicating moderate/high intrinsic rank.

- Noisy or unstable: optimization issues (learning rate, scaling \(\alpha\), adapter placement).

Track both in-domain and shifted validation sets. A rank that appears sufficient in-domain may underperform under shift.

4. Gradient-Subspace Overlap Metric

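Given top singular vectors \(U_r, V_r\) of pilot update \(\Delta W_{\text{pilot}}\), monitor overlap with ongoing gradients \(G_t\):

\[
\omega_t = \frac{\|U_r^\top G_t V_r\|_F^2}{\|G_t\|_F^2}.
\]

Low \(\omega_t\) suggests adapter subspace is misaligned with current descent directions; rank or placement may need adjustment.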
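One way to compute such an overlap is the fraction of squared gradient norm that survives projection onto the pilot's top left and right singular subspaces. The exact definition, the function name, and the synthetic matrices below are assumptions of this sketch; other overlap measures (for example principal angles) would also fit the description.

```python
import numpy as np

def subspace_overlap(G, U_r, V_r):
    """Fraction of ||G||_F^2 captured after projecting onto span(U_r) and span(V_r).
    U_r and V_r must have orthonormal columns."""
    projected = U_r.T @ G @ V_r
    return float(np.sum(projected ** 2) / np.sum(G ** 2))

rng = np.random.default_rng(7)
pilot = rng.standard_normal((20, 16))           # stand-in for a pilot update (assumed)
u, s, vt = np.linalg.svd(pilot, full_matrices=False)
r = 4
U_r, V_r = u[:, :r], vt[:r].T                   # top-r left and right singular vectors

# A gradient lying entirely inside the pilot subspace, and an unrelated random one.
G_aligned = U_r @ rng.standard_normal((r, r)) @ V_r.T
G_random = rng.standard_normal((20, 16))

overlap_aligned = subspace_overlap(G_aligned, U_r, V_r)
overlap_random = subspace_overlap(G_random, U_r, V_r)
```

For an unrelated random gradient the expected overlap is roughly `r * r / (d_out * d_in)`, which gives a baseline against which measured values can be judged.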

5. Residual Error Audits

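If occasional full-FT checkpoints are feasible, compare LoRA-implied update \(\Delta W_\ell^{\text{LoRA}} = \tfrac{\alpha}{r_\ell} B_\ell A_\ell\) to unconstrained update \(\Delta W_\ell^{\text{FT}}\) via

\[
\epsilon_\ell = \frac{\|\Delta W_\ell^{\text{FT}} - \Delta W_\ell^{\text{LoRA}}\|_F}{\|\Delta W_\ell^{\text{FT}}\|_F}.
\]

Large \(\epsilon_\ell\) pinpoints layers where low-rank bias is dominant.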

Rank Selection Strategy

Rank selection is a constrained optimization problem under memory and latency budgets.

Step 1: Define Budget and Targets

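Let memory budget permit total trainable parameters \(P_{\max}\). For layer set \(\mathcal{S}\):

\[
\sum_{\ell \in \mathcal{S}} r_\ell\,(d_{\text{in},\ell} + d_{\text{out},\ell}) \le P_{\max}.
\]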

Define target metrics (e.g., perplexity, exact match, reward score) and robustness set under distribution shift.

Step 2: Start with Non-Uniform Prior

Instead of uniform \(r\), initialize with heuristic weights:

- Higher rank for middle/upper attention blocks.

- Moderate rank for \(W_O\) and MLP down projections when generation control is important.

- Lower rank in layers with historically low sensitivity.

A simple prior:

\[
r_\ell \approx R \cdot \frac{s_\ell}{\sum_{j} s_j},
\]

where \(R\) is the total rank budget and \(s_\ell\) is sensitivity score from pilot runs.
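A proportional prior of this kind can be sketched as follows. The rounding rule, the `min_rank` floor, and the example scores are assumptions introduced for illustration; because of rounding, the result may not sum exactly to the requested total and should be checked against the parameter budget afterwards.

```python
def ranks_from_sensitivity(scores, total_rank, min_rank=1):
    """Split `total_rank` across layers in proportion to sensitivity scores.
    Each layer gets at least `min_rank` (a hypothetical floor for this sketch)."""
    total_score = sum(scores)
    return [max(min_rank, round(total_rank * s / total_score)) for s in scores]

# Four layers with made-up sensitivity scores from a pilot run.
scores = [0.5, 1.0, 2.0, 0.5]
ranks = ranks_from_sensitivity(scores, total_rank=32)
```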

Step 3: Coarse-to-Fine Sweep

- Global sweep with shared \(r \in \{4, 8, 16, 64\}\).

- Choose smallest \(r\) within tolerance of best validation.

- Redistribute rank layerwise around this budget using marginal-gain diagnostics.
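The "smallest rank within tolerance" rule from the coarse sweep can be written as a one-line selection. In this sketch the loss values are the four listed in the article's worked budget example, paired with the rank set \(\{4, 8, 16, 64\}\), which is an assumption; the tolerance values and the function name are hypothetical.

```python
def smallest_rank_within_tolerance(sweep, tolerance):
    """Return the smallest rank whose validation loss is within `tolerance` of the best loss.
    sweep: dict mapping rank -> validation loss."""
    best = min(sweep.values())
    return min(r for r, loss in sweep.items() if loss - best <= tolerance)

sweep = {4: 2.31, 8: 2.24, 16: 2.20, 64: 2.18}

loose_choice = smallest_rank_within_tolerance(sweep, tolerance=0.03)
strict_choice = smallest_rank_within_tolerance(sweep, tolerance=0.01)
```

The tolerance encodes how much validation loss one is willing to trade for a smaller adapter, so it should be set relative to run-to-run noise.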

Step 4: Regularization and Stability Controls

High rank increases capacity and overfitting risk, especially for small datasets. Apply:

- Adapter dropout.

- Weight decay on \(A\) and \(B\).

- Early stopping on shifted validation.

- Conservative \(\alpha\) scaling to avoid unstable large updates.

Step 5: Decide Escalation Path

If diagnostics indicate persistent high truncation error, escalate in order:

- Increase rank in identified bottleneck layers.

- Expand adapter placement (add \(W_K\), \(W_O\), MLP projections).

- Consider hybrid PEFT (LoRA + selective unfrozen biases/norms).

- Move to partial or full fine-tuning when low-rank bias remains dominant.

Worked End-to-End Budget Example

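Assume 7B model, LoRA on \(W_Q\) and \(W_V\) across 32 layers. Per-layer parameters: \(2 \cdot 8192\,r = 16{,}384\,r\). Total: \(32 \cdot 16{,}384\,r = 524{,}288\,r\).

Suppose budget is 10M trainable parameters. Then

\[
r \le \frac{10{,}000{,}000}{524{,}288} \approx 19.07.
\]

Feasible uniform choices: \(r=16\) (8.39M params) or \(r=19\) (9.96M params) if implementation allows arbitrary rank.

If sweep yields validation losses:

- \(r=4\): 2.31

- \(r=8\): 2.24

- \(r=16\): 2.20

- \(r=64\): 2.18

The gain from \(r=16 \to 64\) is small relative to 4x parameter increase, and \(r=64\) (33.6M params) exceeds the budget in any case. Under 10M budget, \(r=16\) is efficient. Remaining headroom (about 1.6M params) can be assigned non-uniformly. Raising one layer from rank 16 to 32 costs \(16 \cdot 16{,}384 = 262{,}144\) parameters, so the headroom covers six layers (1.57M) but not eight (2.10M). For example, increase rank to 32 in the top-6 sensitive layers while keeping others at 16; this uses 9.96M parameters and can outperform uniform \(r=16\).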
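The budget arithmetic in this example can be checked with a few lines of integer math. The constants follow the setup described in this section (two \(4096 \times 4096\) adapters per layer, 32 layers, 10,000,000-parameter budget); the variable names are hypothetical. The sketch computes the largest uniform rank and how many layers can be raised from rank 16 to rank 32 within the remaining headroom.

```python
PER_LAYER_PER_RANK = 2 * (4096 + 4096)   # two 4096 x 4096 adapters per layer, per unit of rank
N_LAYERS = 32
BUDGET = 10_000_000

def total_params(ranks_per_layer):
    """Total trainable parameters for a list of per-layer ranks."""
    return sum(PER_LAYER_PER_RANK * r for r in ranks_per_layer)

# Largest uniform rank that fits the budget.
max_uniform_rank = BUDGET // (PER_LAYER_PER_RANK * N_LAYERS)

# Uniform rank 16 and what is left over.
uniform_16 = total_params([16] * N_LAYERS)
headroom = BUDGET - uniform_16

# Number of layers that can go from rank 16 to rank 32 within the headroom.
extra_per_layer = PER_LAYER_PER_RANK * (32 - 16)
n_boosted = headroom // extra_per_layer
mixed_total = total_params([32] * n_boosted + [16] * (N_LAYERS - n_boosted))
```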

Conclusion

LoRA is a principled low-rank constraint on fine-tuning updates, not merely a memory trick. Its effectiveness follows from a spectral property: many downstream adaptations concentrate in a low-dimensional subspace around pretrained weights. When singular values of the required update decay quickly, rank-limited adapters recover most of full fine-tuning performance at a small fraction of parameter and optimizer-state cost.

The same framework clarifies failure cases. When adaptation demands are distributed across many independent directions—under strong distribution shift, compositional objectives, multimodal coupling, or mis-ranked critical layers—the singular tail is substantial and low-rank bias becomes performance-limiting. In these regimes, uniform low ranks can produce brittle behavior and incomplete alignment.

Operationally, rank should be selected by measurement: spectral decay probes, layerwise sensitivity, and validation rank sweeps. The practical objective is not maximal rank, but efficient allocation of adaptation dimension under compute and memory constraints. Treating LoRA as a rank-budget optimization problem provides a reliable path to deciding when low-rank adaptation is sufficient and when escalation toward broader fine-tuning is necessary.

References

- Hu, E. J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., & Chen, W. (2021). *LoRA: Low-Rank Adaptation of Large Language Models*. arXiv:2106.09685.

- Aghajanyan, A., Zettlemoyer, L., & Gupta, S. (2020). *Intrinsic Dimensionality Explains the Effectiveness of Language Model Fine-Tuning*. arXiv:2012.13255.

- Li, X. L., & Liang, P. (2021). *Prefix-Tuning: Optimizing Continuous Prompts for Generation*. ACL.

- Lester, B., Al-Rfou, R., & Constant, N. (2021). *The Power of Scale for Parameter-Efficient Prompt Tuning*. EMNLP.

- Dettmers, T., Pagnoni, A., Holtzman, A., & Zettlemoyer, L. (2023). *QLoRA: Efficient Finetuning of Quantized LLMs*. NeurIPS.

- Ben Zaken, E., Ravfogel, S., & Goldberg, Y. (2022). *BitFit: Simple Parameter-efficient Fine-tuning for Transformer-based Masked Language-models*. ACL.

- Grosse, R., & Martens, J. (2016). *A Kronecker-factored Approximate Fisher Matrix for Convolution Layers*. ICML.

- Golub, G. H., & Van Loan, C. F. (2013). *Matrix Computations* (4th ed.). Johns Hopkins University Press.

- Mirsky, L. (1960). *Symmetric gauge functions and unitarily invariant norms*. Quarterly Journal of Mathematics.

- Eckart, C., & Young, G. (1936). *The approximation of one matrix by another of lower rank*. Psychometrika.

- Yao, Z., Gholami, A., Keutzer, K., & Mahoney, M. W. (2021). *PyHessian: Neural Networks Through the Lens of the Hessian*. IEEE BigData.

- Liu, S.-Y., Wang, C.-Y., Yin, H., Molchanov, P., Wang, Y.-C. F., Cheng, K.-T., & Chen, M.-H. (2024). *DoRA: Weight-Decomposed Low-Rank Adaptation*. arXiv:2402.09353.