*Category: Inference & Serving*

In contemporary large language model serving, compute is no longer the sole limiting resource. At moderate context lengths and low concurrency, tensor-core throughput can still dominate latency; however, once long-context decoding and multi-tenant traffic become the norm, memory capacity and bandwidth for the key-value (KV) cache become first-order constraints. This shift changes what “optimization” means in production. The precision format used for KV storage now directly determines how many sessions can be admitted, how stable p99 latency remains during bursts, and how much output quality drift appears at long generation horizons.

KV cache compression is a systems-and-numerics co-design problem. The memory math is only the entry point: precision changes perturb attention logits, allocator policy decides how much saved memory is usable, and workload class determines whether drift is acceptable. The useful framework starts from per-token cache growth, then follows quantization error into attention, paging, admission control, and rollout policy.

KV Cache Scaling as the Core Serving Constraint

For decoder-only transformers, each new token appends one key and one value vector in every attention layer. Neglecting metadata for the moment, the per-token KV footprint is

$$M_{\text{tok}} = 2 \, L \, H_{kv} \, d_h \, b,$$

where $L$ is the number of layers, $H_{kv}$ is the number of KV heads, $d_h$ is head dimension, and $b$ is bytes per stored element. The factor of two reflects both K and V tensors. Aggregate memory for a batch of $B$ sequences with lengths $T_1, \dots, T_B$ is

$$M_{\text{KV}} = M_{\text{tok}} \sum_{i=1}^{B} T_i + M_{\text{meta}} + M_{\text{frag}},$$

where $M_{\text{meta}}$ includes per-block scales, zero-points, page tables, and scheduler bookkeeping, while $M_{\text{frag}}$ captures allocator slack from partially filled pages and policy-induced fragmentation.

This simple model explains why context length and concurrency dominate serving economics. Model weights are effectively fixed after load, but KV grows linearly with active and historical tokens. A deployment that appears compute-bound during short interactive prompts can become memory-bound when users retain long conversations, upload large retrieval contexts, or when multi-session decode overlap increases. In other words, KV pressure is not a corner case; it is the natural operating regime of production LLM systems.

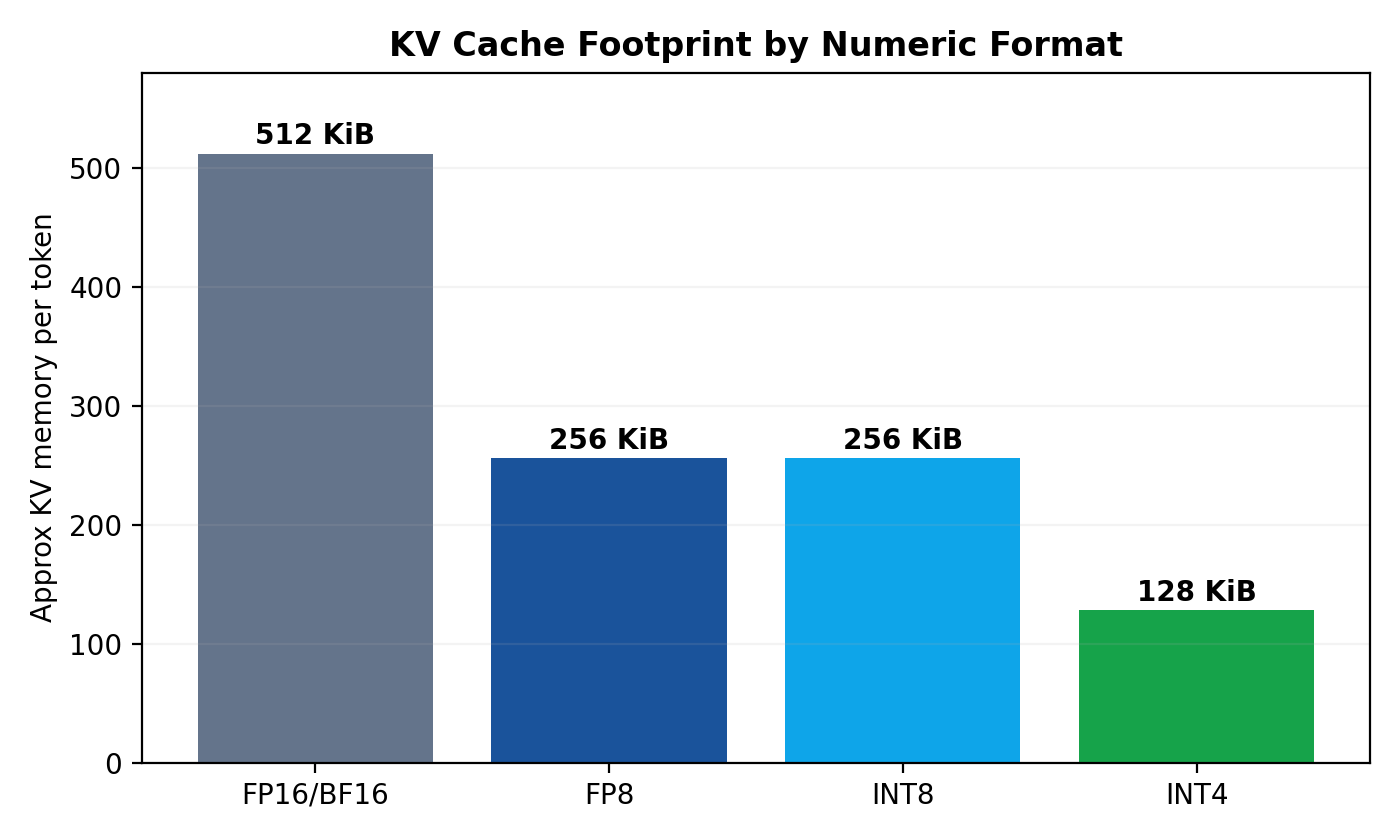

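A concrete 7B-like configuration illustrates scale. Assume $L = 32$ layers, $H_{kv} = 32$ KV heads, and head dimension $d_h = 128$. Then

$$2 \, L \, H_{kv} \, d_h = 2 \cdot 32 \cdot 32 \cdot 128 = 262{,}144 \ \text{elements per token},$$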

which corresponds to roughly 512 KiB/token in FP16, 256 KiB/token in FP8/INT8, and 128 KiB/token in raw INT4 before metadata. At 8,192 tokens, a single sequence consumes about 4.0 GiB (FP16), 2.0 GiB (FP8), or 1.0 GiB (INT4 raw). Even allowing architectural variation, the practical conclusion is stable: one long sequence can consume nontrivial fractions of a GPU’s allocatable KV budget.

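A 40-layer grouped-query attention (GQA) serving example changes the numbers but reinforces the same logic. Let $L = 40$ layers, with the query heads sharing $H_{kv} = 8$ KV heads of dimension $d_h = 128$; the query-head count does not enter the KV footprint. Per-token KV elements become

$$2 \, L \, H_{kv} \, d_h = 2 \cdot 40 \cdot 8 \cdot 128 = 81{,}920,$$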

so FP16 is approximately 160 KiB/token, FP8 about 80 KiB/token, and INT4 roughly 40 KiB/token before metadata. At 16,384 tokens, this maps to approximately 2.5 GiB, 1.25 GiB, and 0.625 GiB respectively. The broader insight is that architecture and precision are multiplicative levers: GQA can reduce KV pressure significantly, and compression compounds that gain.
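The arithmetic in both examples can be reproduced with a few lines of Python. This is a minimal sketch: the `KVConfig` class and function names are illustrative, and the two shapes (32 layers × 32 KV heads × 128, and 40 layers × 8 KV heads × 128) are assumptions chosen because they reproduce the 512 KiB/token and 160 KiB/token FP16 figures quoted above.

```python
from dataclasses import dataclass

KIB = 1024
GIB = 1024 ** 3


@dataclass(frozen=True)
class KVConfig:
    layers: int     # L: number of attention layers
    kv_heads: int   # H_kv: number of key/value heads
    head_dim: int   # d_h: dimension of each head


def kv_elements_per_token(cfg: KVConfig) -> int:
    # Factor of two: one key vector and one value vector per layer and KV head.
    return 2 * cfg.layers * cfg.kv_heads * cfg.head_dim


def kv_bytes_per_token(cfg: KVConfig, bytes_per_element: float) -> float:
    return kv_elements_per_token(cfg) * bytes_per_element


def kv_bytes_for_batch(cfg, bytes_per_element, seq_lens,
                       meta_bytes=0.0, frag_bytes=0.0):
    # Payload grows linearly in total cached tokens; metadata and
    # fragmentation are passed in because they depend on the allocator.
    payload = sum(seq_lens) * kv_bytes_per_token(cfg, bytes_per_element)
    return payload + meta_bytes + frag_bytes


# Assumed shapes, chosen to match the per-token figures quoted in the text.
MHA_7B_LIKE = KVConfig(layers=32, kv_heads=32, head_dim=128)
GQA_40_LAYER = KVConfig(layers=40, kv_heads=8, head_dim=128)

if __name__ == "__main__":
    formats = [("FP16", 2.0), ("FP8/INT8", 1.0), ("INT4 raw", 0.5)]
    cases = [("7B-like MHA", MHA_7B_LIKE, 8192),
             ("40-layer GQA", GQA_40_LAYER, 16384)]
    for name, cfg, tokens in cases:
        for fmt, b in formats:
            per_token = kv_bytes_per_token(cfg, b) / KIB
            total = kv_bytes_for_batch(cfg, b, [tokens]) / GIB
            print(f"{name:13s} {fmt:9s} {per_token:7.1f} KiB/token "
                  f"{total:6.3f} GiB at {tokens} tokens")
```

Passing several entries in `seq_lens` gives the batch total; the metadata and fragmentation terms are left as inputs because they depend on the quantization layout and allocator rather than on model shape.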

Precision Regimes: FP8 and INT4 Beyond Raw Bit Counts

The baseline FP16/BF16 cache remains attractive for numerical stability and implementation simplicity. It minimizes surprises, aligns with many model execution paths, and avoids scale/zero-point metadata complexity. Its cost is straightforward: maximum memory footprint and bandwidth demand, which lowers admission headroom under fixed VRAM.

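FP8 is a common first compressed KV option because it halves footprint relative to FP16 while preserving strong quality on many workloads. In practical implementations, cached values are normalized by a scale then rounded into FP8 format:

$$\hat{x} = s \cdot \operatorname{round}_{\text{FP8}}\!\left(\frac{x}{s}\right),$$

where $s$ is the scale shared by a tensor, channel, or block, and $\hat{x}$ is the value attention sees after dequantization.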

The quality profile depends less on “FP8” as a label and more on scaling granularity. Coarse scaling gives low metadata overhead but can waste representational capacity when outliers dominate dynamic range; finer scaling improves fidelity but increases scale loads and addressing overhead.

INT8 uses affine quantization,

$$q = \operatorname{clip}\!\left(\operatorname{round}\!\left(\frac{x}{s}\right) + z,\; q_{\min},\; q_{\max}\right), \qquad \hat{x} = s \, (q - z),$$

with symmetric variants setting $z = 0$. In principle, INT8 and FP8 have similar raw memory efficiency. In practice, relative performance depends heavily on hardware kernels and fusion quality. Integer pathways can be excellent on some stacks and mediocre on others; therefore, empirical profiling is mandatory.
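To make the affine mapping concrete, here is a minimal pure-Python sketch of per-group quantize and dequantize with scale `s` and zero-point `z`. The function names and the min/max calibration rule are assumptions for illustration; production kernels operate on packed tensors and may calibrate differently.

```python
def int_range(bits):
    # Signed integer code range, e.g. [-128, 127] for 8 bits.
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1


def affine_params(values, bits=8, symmetric=False):
    """Return (scale, zero_point) for one quantization group."""
    qmin, qmax = int_range(bits)
    if symmetric:
        max_abs = max(abs(v) for v in values)
        return (max_abs / qmax) or 1.0, 0          # z = 0 in the symmetric case
    # Include 0.0 in the range so that zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = ((hi - lo) / (qmax - qmin)) or 1.0     # guard against an all-zero group
    zero_point = round(qmin - lo / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, bits=8):
    qmin, qmax = int_range(bits)
    return [min(qmax, max(qmin, round(v / scale) + zero_point)) for v in values]


def dequantize(codes, scale, zero_point):
    return [scale * (q - zero_point) for q in codes]


if __name__ == "__main__":
    group = [-1.0, -0.25, 0.0, 0.5, 3.0]
    s, z = affine_params(group)
    codes = quantize(group, s, z)
    recon = dequantize(codes, s, z)
    worst = max(abs(a - b) for a, b in zip(group, recon))
    print("scale:", s, "zero_point:", z)
    print("codes:", codes)
    print("max abs error:", worst, "half step:", s / 2)
```

For values inside the calibrated range, the reconstruction error stays within half a quantization step, which is why the choice of group (and therefore of `s`) matters more than the integer format itself.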

INT4 is the aggressive capacity option. Raw storage is 4 bits/value, but net savings depend on group-wise metadata and alignment. If group size is $g$ and each group stores one FP16 scale, scale overhead per value is $2/g$ bytes. For $g = 64$, this is 0.03125 bytes/value, raising effective storage from the raw 0.5 bytes/value to 0.53125 bytes/value, or about 3.76× smaller than FP16. Once zero-points and alignment padding are included, net improvement versus FP16 often lands in the ~3.4×–3.8× range rather than a perfect 4×.
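The metadata overhead is easy to tabulate. The sketch below assumes one scale (and optionally one zero-point) per group and ignores alignment padding; the function names are illustrative.

```python
def effective_bytes_per_value(bits, group_size, scale_bytes=2.0, zero_point_bytes=0.0):
    # Payload bits plus per-group metadata amortized over the group.
    return bits / 8 + (scale_bytes + zero_point_bytes) / group_size


def compression_vs_fp16(bits, group_size, scale_bytes=2.0, zero_point_bytes=0.0):
    # FP16 stores 2 bytes per value with no per-group metadata.
    return 2.0 / effective_bytes_per_value(bits, group_size, scale_bytes, zero_point_bytes)


if __name__ == "__main__":
    for g in (32, 64, 128):
        eff = effective_bytes_per_value(4, g)
        print(f"group={g:4d}  {eff:.5f} bytes/value  {compression_vs_fp16(4, g):.2f}x vs FP16")
```

Smaller groups track outliers better but pay more metadata per value, which is the fidelity-versus-overhead trade described above.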

The critical point is that precision choice cannot be reduced to bit width alone. Granularity, outlier handling, metadata layout, and kernel fusion determine whether theoretical memory wins convert into real throughput and acceptable drift. For this reason, many robust deployments adopt mixed policies such as higher precision for keys than values, or high-precision retention for a recent token window.

Attention Error Propagation and Long-Horizon Drift

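KV quantization affects output quality through perturbations in attention logits and weighted value aggregation. At decoding step $t$, head-level logits are

$$\ell_{t,j} = \frac{q_t^\top k_j}{\sqrt{d_h}},$$

where $q_t$ is the current query, $k_j$ is the cached key at position $j$, and $d_h$ is the head dimension. If cached keys are perturbed by quantization, $\hat{k}_j = k_j + \epsilon_j$, then

$$\hat{\ell}_{t,j} = \ell_{t,j} + \frac{q_t^\top \epsilon_j}{\sqrt{d_h}}.$$

Assuming zero-mean error with covariance $\Sigma_j$,

$$\mathbb{E}\!\left[\hat{\ell}_{t,j} - \ell_{t,j}\right] = 0, \qquad \operatorname{Var}\!\left[\hat{\ell}_{t,j}\right] = \frac{q_t^\top \Sigma_j \, q_t}{d_h}.$$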

Thus, drift depends on how query directions align with quantization-error structure. Even when mean bias is small, variance increase can flatten softmax confidence and alter token ranking under close logit margins.

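Value quantization introduces a second channel of error. With

$$o_t = \sum_j a_{t,j} \, v_j, \qquad a_{t,j} = \operatorname{softmax}_j\!\left(\ell_{t,j}\right),$$

a first-order decomposition gives

$$\delta o_t \approx \sum_j \delta a_{t,j} \, v_j + \sum_j a_{t,j} \, \delta v_j,$$

where $\delta a_{t,j} = \hat{a}_{t,j} - a_{t,j}$ and $\delta v_j = \hat{v}_j - v_j$. The first term is weight drift induced by key perturbations; the second is direct value-content distortion. Because softmax is nonlinear, $\delta a_{t,j}$ can amplify seemingly modest logit noise in regions where candidates are close.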
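The two error channels can be separated numerically for a single head. The sketch below is illustrative only: it uses small random keys and values with additive Gaussian noise standing in for quantization error, and all names are hypothetical. It splits the output error into a weight term, a value term, and the second-order cross term that the first-order decomposition drops.

```python
import math
import random


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Single-head attention for one query; returns (weights, output)."""
    scale = math.sqrt(len(query))
    logits = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(logits)
    dim = len(values[0])
    output = [sum(w * v[c] for w, v in zip(weights, values)) for c in range(dim)]
    return weights, output


def decompose_output_error(query, keys, values, keys_hat, values_hat):
    a, o = attend(query, keys, values)
    a_hat, o_hat = attend(query, keys_hat, values_hat)
    dim = len(values[0])
    rows = list(zip(a, a_hat, values, values_hat))
    # Weight drift: changed attention weights applied to the clean values.
    weight = [sum((ah - w) * v[c] for w, ah, v, _ in rows) for c in range(dim)]
    # Value distortion: clean weights applied to the value perturbations.
    value = [sum(w * (vh[c] - v[c]) for w, _, v, vh in rows) for c in range(dim)]
    # Second-order remainder neglected by the first-order approximation.
    cross = [sum((ah - w) * (vh[c] - v[c]) for w, ah, v, vh in rows) for c in range(dim)]
    total = [oh - x for oh, x in zip(o_hat, o)]
    return {"weight": weight, "value": value, "cross": cross, "total": total}


def perturb(rows, sigma, rng):
    # Additive Gaussian noise as a stand-in for quantization error (an assumption).
    return [[x + rng.gauss(0.0, sigma) for x in row] for row in rows]


def norm(vec):
    return math.sqrt(sum(x * x for x in vec))


if __name__ == "__main__":
    rng = random.Random(0)
    dim, tokens = 8, 32
    query = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    keys = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(tokens)]
    values = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(tokens)]
    parts = decompose_output_error(query, keys, values,
                                   perturb(keys, 0.02, rng), perturb(values, 0.02, rng))
    for name, vec in parts.items():
        print(f"{name:6s} norm = {norm(vec):.6f}")
```

Comparing the norms of the `weight` and `value` terms for a given format is one way to decide whether keys or values deserve the extra precision on a particular model.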

Why does long-context behavior degrade more than short-context benchmarks suggest? The autoregressive loop compounds perturbations. A small drift at step $t$ slightly alters hidden state, which changes subsequent queries $q_{t+1}, q_{t+2}, \dots$, which in turn changes how future quantization error projects into logits. Divergence becomes path-dependent and often superlinear in perceived severity once generation runs long enough. This is precisely why cache format decisions that appear harmless on short canaries can fail under long retrieval-heavy sessions.

The classic scalar quantization relation $\sigma^2 \approx \Delta^2 / 12$ (under standard assumptions) still provides useful intuition: lower bit width implies larger effective step size $\Delta$, hence larger variance. Finer scaling reduces local $\Delta$ but increases metadata and runtime overhead. Engineering decisions therefore balance error statistics against systems costs, rather than optimizing either in isolation.
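A small Monte Carlo check ties the two variance statements together. It assumes uniform rounding with step `step`, independent error per key dimension (so the error covariance is `step**2 / 12` times the identity), and logits scaled by the square root of the head dimension. Under those assumptions the logit-error variance should be close to `||q||^2 * step**2 / (12 * d)`. The names and the Gaussian key distribution are illustrative.

```python
import random


def quantize_uniform(x, step):
    # Round-to-nearest on a uniform grid with spacing `step`.
    return step * round(x / step)


def predicted_logit_error_variance(query, step):
    # q^T Sigma q / d with Sigma = (step^2 / 12) * I.
    d = len(query)
    return sum(q * q for q in query) * step ** 2 / 12 / d


def simulated_logit_error_variance(query, step, trials=20000, seed=0):
    rng = random.Random(seed)
    d = len(query)
    scale = d ** 0.5
    errors = []
    for _ in range(trials):
        key = [rng.gauss(0.0, 1.0) for _ in range(d)]
        # Logit error: query dotted with the key's rounding error, scaled by sqrt(d).
        err = sum(q * (quantize_uniform(k, step) - k) for q, k in zip(query, key)) / scale
        errors.append(err)
    mean = sum(errors) / trials
    return sum((e - mean) ** 2 for e in errors) / (trials - 1)


if __name__ == "__main__":
    query = [((i % 5) - 2) * 0.5 for i in range(16)]
    for step in (0.2, 0.1, 0.05):
        pred = predicted_logit_error_variance(query, step)
        sim = simulated_logit_error_variance(query, step)
        print(f"step={step:<5} predicted={pred:.3e} simulated={sim:.3e}")
```

Halving the step cuts the predicted variance by a factor of four, which is the per-bit trade that finer scaling granularity buys at the cost of extra metadata.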

In practical mixed-precision policies, keys often get more conservative treatment than values because key error directly affects attention weights. A common compromise is FP8 keys with more aggressive value quantization, sometimes combined with a high-precision tail for the most recent tokens where local coherence is most sensitive.
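The memory effect of such a mixed policy can be estimated directly. The sketch below assumes separate bytes-per-element for keys and values on older tokens and a fixed-size recent window kept at higher precision; the function name, the 128-token tail, and the 0.53125 bytes/value INT4 figure (group size 64 with FP16 scales) are illustrative assumptions.

```python
def mixed_policy_kv_bytes(seq_len, elements_per_tensor, key_bytes, value_bytes,
                          tail_tokens=0, tail_bytes=2.0):
    """Bytes for one sequence when older tokens are compressed and the newest
    `tail_tokens` keep `tail_bytes` per element for both K and V.

    `elements_per_tensor` is layers * kv_heads * head_dim, i.e. the element
    count of K alone (or V alone) for a single token.
    """
    tail = min(seq_len, tail_tokens)
    history = seq_len - tail
    history_bytes = history * elements_per_tensor * (key_bytes + value_bytes)
    tail_total = tail * elements_per_tensor * 2 * tail_bytes
    return history_bytes + tail_total


if __name__ == "__main__":
    GIB = 1024 ** 3
    elements = 40 * 8 * 128      # assumed GQA shape: layers * kv_heads * head_dim
    seq_len = 16384
    policies = {
        "FP16 everywhere": dict(key_bytes=2.0, value_bytes=2.0),
        "FP8 everywhere": dict(key_bytes=1.0, value_bytes=1.0),
        "FP8 K + INT4 V": dict(key_bytes=1.0, value_bytes=0.53125),
        "FP8 K + INT4 V + FP16 tail": dict(key_bytes=1.0, value_bytes=0.53125,
                                           tail_tokens=128),
    }
    for name, kwargs in policies.items():
        total = mixed_policy_kv_bytes(seq_len, elements, **kwargs)
        print(f"{name:28s} {total / GIB:.3f} GiB")
```

Because the tail is a fixed number of tokens, its cost is amortized as sequences grow, which is why a high-precision recent window is usually cheap relative to the history it protects.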

Paged KV Caches: Where Memory Theory Meets Allocator Reality

Even an ideal precision format can underperform if allocation policy wastes capacity. Contiguous per-sequence KV buffers are simple but fragile under variable-length traffic, frequent churn, and mixed prefill/decode timelines. Paged KV designs solve this by mapping logical token positions to fixed-size blocks, allocated from a global pool and referenced via per-sequence block tables.

This architecture decouples logical growth from physical contiguity and enables stronger admission behavior under fragmentation pressure. It also supports prefix sharing and block recycling strategies that are difficult in contiguous layouts. However, paged designs introduce their own overheads: metadata traffic, lookup indirection, and sensitivity to block-size choice.
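The core mechanism, a global pool of fixed-size blocks plus a per-sequence block table, fits in a few lines. This is a toy sketch with hypothetical names: it omits prefix sharing, reference counting, and GPU memory entirely, and is not the data structure of any particular serving engine.

```python
class PagedKVAllocator:
    """Toy paged KV allocator: logical token positions map to (block, offset)."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        # Reversed so that pop() hands out block 0 first.
        self.free_blocks = list(range(num_blocks - 1, -1, -1))
        self.block_tables = {}   # seq_id -> list of physical block ids
        self.seq_lens = {}       # seq_id -> number of cached tokens

    def append_token(self, seq_id):
        """Reserve a slot for the next token; returns (physical_block, offset)."""
        length = self.seq_lens.get(seq_id, 0)
        table = self.block_tables.get(seq_id, [])
        if length == len(table) * self.block_size:
            # Current tail block is full (or the sequence is new): grab a block.
            if not self.free_blocks:
                raise MemoryError("KV block pool exhausted")
            table = self.block_tables.setdefault(seq_id, [])
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1
        return table[length // self.block_size], length % self.block_size

    def free_sequence(self, seq_id):
        """Return all blocks of a finished sequence to the pool."""
        self.free_blocks.extend(reversed(self.block_tables.pop(seq_id, [])))
        self.seq_lens.pop(seq_id, None)

    def internal_waste_tokens(self):
        """Unused slots in partially filled tail blocks across live sequences."""
        return sum(len(table) * self.block_size - self.seq_lens[seq_id]
                   for seq_id, table in self.block_tables.items())


if __name__ == "__main__":
    pool = PagedKVAllocator(num_blocks=8, block_size=4)
    for _ in range(5):
        pool.append_token("chat-1")
    for _ in range(2):
        pool.append_token("chat-2")
    print("block tables:", pool.block_tables)
    print("wasted slots:", pool.internal_waste_tokens())
    pool.free_sequence("chat-1")
    print("free blocks after release:", len(pool.free_blocks))
```

Physical blocks need not be contiguous or ordered, so a finished sequence's blocks are immediately reusable by any other sequence; the price is the extra table lookup on every KV access.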

Fragmentation appears in two forms. Internal fragmentation is the partially filled tail block per sequence. External fragmentation arises when free blocks exist but are not effectively reusable due to allocator policy, locality constraints, or pool segmentation. If block size is $P$ tokens and residual lengths are approximately uniform modulo $P$, expected internal waste is about $(P - 1)/2$ tokens per live sequence. At high concurrency, this becomes substantial.

Consider an illustrative case with 2,000 live sequences, block size 32 tokens, and an average FP8 KV density of 200 KiB/token (model-dependent). Expected internal waste is roughly 15.5 tokens per sequence, or about 31,000 wasted tokens total. At 200 KiB/token that is 6,200,000 KiB, or approximately 5.9 GiB of stranded memory. In such regimes, allocator inefficiency can erase much of the gain from compression.
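The tail-waste estimate can be checked by enumeration and then scaled to a fleet of live sequences. This sketch assumes sequence lengths are uniformly distributed modulo the block size; the function names are illustrative.

```python
def expected_internal_waste_tokens(block_size):
    # Closed form under a uniform residual-length assumption.
    return (block_size - 1) / 2


def mean_tail_waste_by_enumeration(block_size):
    # Average unused slots in the last block over residual lengths 1..block_size.
    return sum((-n) % block_size for n in range(1, block_size + 1)) / block_size


def stranded_bytes(live_sequences, block_size, bytes_per_token):
    return live_sequences * expected_internal_waste_tokens(block_size) * bytes_per_token


if __name__ == "__main__":
    KIB, GIB = 1024, 1024 ** 3
    for block in (8, 16, 32, 64):
        waste = stranded_bytes(2000, block, 200 * KIB)
        print(f"block={block:3d}  {expected_internal_waste_tokens(block):5.1f} tokens/seq  "
              f"{waste / GIB:6.2f} GiB stranded")
```

Sweeping the block size this way shows the stranded memory scaling roughly linearly with block size, which is the slack side of the block-size trade-off.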

Choosing smaller blocks reduces tail waste but increases page-table overhead, metadata fetches, and pointer-chasing costs. Larger blocks improve metadata efficiency but worsen internal slack. There is no universal optimum; the best point depends on workload length distribution, microbatch shape, and kernel access patterns. In operational terms, allocator tuning should be treated with the same rigor as model-kernel tuning.

Policy choices matter as much as data structure choices. Free-list strategy, reserve pools for decode-critical paths, precision-specific pool partitioning, and safe compaction/recycling policies all influence p99 behavior. A cache format migration that ignores allocator policy can produce misleading results: nominal memory usage may improve while tail latency worsens due to allocation jitter.

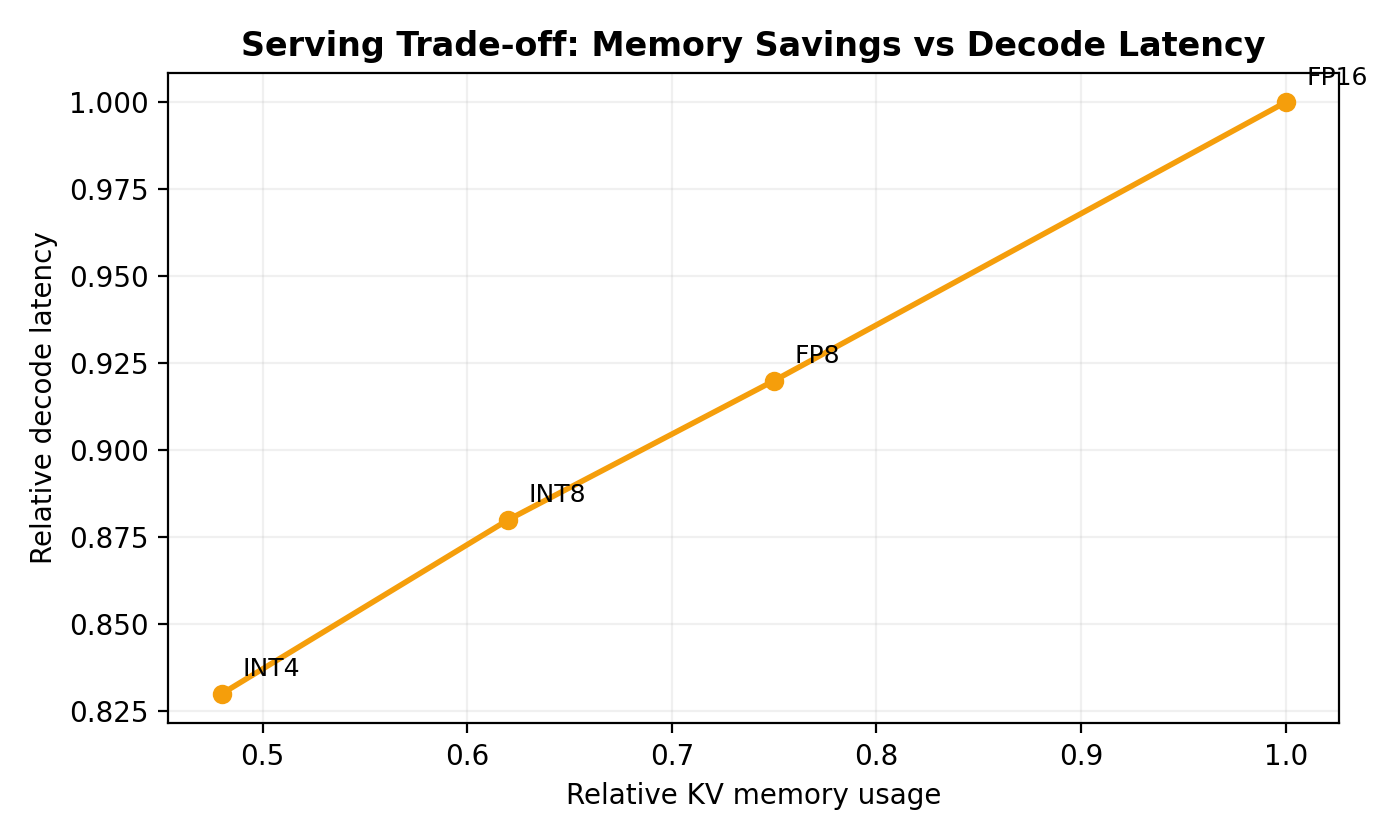

Throughput, Latency, and Admission Under Compression

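Compression is often justified by capacity arithmetic, but realized service impact depends on full critical-path decomposition. A simplified latency model for token generation can be written as

$$T_{\text{token}} \approx T_{\text{compute}} + T_{\text{mem}} + T_{\text{dequant}} + T_{\text{sched}},$$

where $T_{\text{compute}}$ is arithmetic time, $T_{\text{mem}}$ is time spent moving KV state through memory, $T_{\text{dequant}}$ is dequantization and scale-handling overhead, and $T_{\text{sched}}$ covers scheduling and allocation. Lower precision reduces $T_{\text{mem}}$ by shrinking bytes transferred for KV reads, yet can increase $T_{\text{dequant}}$ when kernels are poorly fused. If dequantization and attention are separated into multiple kernels, theoretical bandwidth savings may not translate to higher throughput. Conversely, well-fused kernels can realize substantial wins, especially in decode-heavy traffic where attention repeatedly scans growing KV state.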
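A back-of-the-envelope version of this trade-off is sketched below. It assumes the latency terms simply add (no overlap between compute and memory traffic), that one decode step reads the full KV state of a single sequence, and a hypothetical memory bandwidth of 1e12 bytes/s; none of these numbers come from a measured system.

```python
def decode_step_latency_s(context_tokens, kv_bytes_per_token, bandwidth_bytes_per_s,
                          compute_s=0.0, dequant_s=0.0, other_s=0.0):
    # KV read time: every cached token is scanned once per generated token.
    kv_read_s = context_tokens * kv_bytes_per_token / bandwidth_bytes_per_s
    return compute_s + kv_read_s + dequant_s + other_s


def dequant_budget_s(context_tokens, baseline_bytes_per_token,
                     compressed_bytes_per_token, bandwidth_bytes_per_s):
    """Largest extra dequantization time for which compression still breaks even."""
    saved_bytes = context_tokens * (baseline_bytes_per_token - compressed_bytes_per_token)
    return saved_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    KIB = 1024
    bandwidth = 1e12          # hypothetical bytes/s, for illustration only
    context = 16384
    fp16 = decode_step_latency_s(context, 160 * KIB, bandwidth)
    fp8 = decode_step_latency_s(context, 80 * KIB, bandwidth)
    budget = dequant_budget_s(context, 160 * KIB, 80 * KIB, bandwidth)
    print(f"FP16 KV read: {fp16 * 1e3:.3f} ms, FP8 KV read: {fp8 * 1e3:.3f} ms")
    print(f"dequant overhead must stay under {budget * 1e3:.3f} ms per step to win")
```

The break-even budget makes the fusion argument explicit: any unfused dequantization pass that costs more than the bytes it saves turns the compressed format into a net loss on latency, even though capacity still improves.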

Service-level gains are often more visible at tails than medians. Additional KV headroom reduces emergency eviction/recompute events and dampens allocator contention, improving p95/p99 even when p50 changes modestly. This distinction matters in production because user-perceived reliability is usually governed by tail outcomes.

A concrete capacity scenario clarifies the operational stakes. Suppose an 80 GiB GPU retains about 28 GiB for KV after weights and runtime overheads. For a model at approximately 160 KiB/token in FP16, nominal capacity is about 183k live tokens. FP8 lifts this to about 367k; an INT4 regime with realistic metadata/alignment overhead might reach around 630k. If policy enforces a 70% utilization ceiling for tail safety, safe budgets are roughly 128k, 257k, and 441k tokens respectively. These differences directly affect admission control and concurrency envelopes during long-context traffic spikes.
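The same budget arithmetic, expressed as a helper an admission controller might call. The function name is illustrative, and the inputs are the scenario's own figures (28 GiB of KV budget, 160 KiB/token in FP16, a 70% ceiling).

```python
KIB = 1024
GIB = 1024 ** 3


def token_capacity(kv_budget_bytes, bytes_per_token, utilization_ceiling=1.0):
    # Whole tokens that fit under the (optionally derated) KV budget.
    return int(kv_budget_bytes * utilization_ceiling // bytes_per_token)


if __name__ == "__main__":
    budget = 28 * GIB
    for name, per_token in [("FP16", 160 * KIB), ("FP8", 80 * KIB)]:
        nominal = token_capacity(budget, per_token)
        safe = token_capacity(budget, per_token, utilization_ceiling=0.7)
        print(f"{name:5s} nominal={nominal:,} tokens  safe(70%)={safe:,} tokens")
```

An INT4 row can be added by passing the effective bytes per token including scale metadata and padding, which is what separates a realistic INT4 capacity from the ideal 4× figure.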

Prefill/decode asymmetry further shapes outcomes. Prefill is relatively compute-dense, while decode is memory-dominated because each generated token revisits accumulated KV state. Compression therefore helps chat-style and iterative workflows more than short one-shot generations. Teams should benchmark with realistic traffic mixes; otherwise they risk overestimating benefits from prefill-heavy synthetic tests.

Task-Dependent Accuracy Sensitivity and Practical Policy Design

Quantized KV quality is not uniform across tasks. Copy-sensitive workloads such as exact code transcriptions or strict template generation rely on sharp token discrimination. Small key perturbations can reduce logit margins between near-duplicate candidates, causing punctuation drift, delimiter mistakes, or off-by-one structure errors. Retrieval-heavy long-context QA depends on preserving weak but semantically critical links across distant spans; quantization noise can bury these low-amplitude signals, especially in distractor-rich contexts. Long-chain reasoning introduces cumulative sensitivity: even minor early deviations can alter later latent trajectories.

By contrast, broad semantic generation and summarization are often more tolerant, particularly under FP8. This heterogeneity implies that “average benchmark regression” is a poor single decision metric. Precision should be selected by workload class and risk tolerance, not by one global scalar.

The figure below is an illustrative risk map, not a benchmark result. Treat each bar as a relative sensitivity signal for planning eval coverage, not as a measured quality drop.

A robust production strategy therefore combines systems telemetry with targeted quality probes. On the systems side, operators should monitor KV reserved-versus-live bytes, live token counts, fragmentation ratio

$$\rho_{\text{frag}} = 1 - \frac{\text{live KV bytes}}{\text{reserved KV bytes}},$$

allocation latency percentiles, block churn, and eviction/recompute events. On the quality side, a layered approach is practical: shadow traffic against FP16 baseline, canary log-prob divergence using

$$D_{\mathrm{KL}}\!\left(p_{\text{FP16}} \,\|\, p_{\text{quant}}\right) = \sum_{v} p_{\text{FP16}}(v) \, \log \frac{p_{\text{FP16}}(v)}{p_{\text{quant}}(v)},$$

top-k overlap drift, and lightweight task-specific checks (for example exact-match templates for copy tasks and retrieval hit-rate for long-context QA). Crucially, all quality and latency metrics should be stratified by context-length bins. Many compression failures are invisible below 2k tokens and only manifest beyond 8k or 32k.
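The three monitoring signals named above are simple to compute from data most serving stacks already expose. The sketch below assumes per-position log-probability vectors over the same vocabulary from a baseline and a quantized run; the function names and the exact definition of the fragmentation ratio (one minus live over reserved bytes) are assumptions for illustration.

```python
import math


def fragmentation_ratio(reserved_bytes, live_bytes):
    # Share of reserved KV memory that holds no live tokens.
    if reserved_bytes <= 0:
        return 0.0
    return 1.0 - live_bytes / reserved_bytes


def kl_divergence(ref_logprobs, test_logprobs):
    """KL(ref || test) for one token position, from natural-log probabilities."""
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(ref_logprobs, test_logprobs))


def top_k_indices(logprobs, k):
    order = sorted(range(len(logprobs)), key=lambda i: logprobs[i], reverse=True)
    return set(order[:k])


def top_k_overlap(ref_logprobs, test_logprobs, k):
    # Fraction of the baseline's top-k candidates that survive quantization.
    return len(top_k_indices(ref_logprobs, k) & top_k_indices(test_logprobs, k)) / k


if __name__ == "__main__":
    ref = [math.log(p) for p in (0.50, 0.30, 0.15, 0.05)]
    quant = [math.log(p) for p in (0.45, 0.10, 0.35, 0.10)]
    print("fragmentation:", fragmentation_ratio(reserved_bytes=100, live_bytes=80))
    print("KL(ref || quant):", kl_divergence(ref, quant))
    print("top-2 overlap:", top_k_overlap(ref, quant, k=2))
```

In practice each metric would be aggregated per context-length bin, so that a regression confined to long sequences is not averaged away by short ones.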

Rollout discipline is equally important. Precision changes should follow progressive traffic ramps with automatic rollback triggered by composite SLO breaches spanning both quality and latency. Per-model and per-workload overrides are essential; a single global precision flag is convenient operationally but often counterproductive for heterogeneous traffic.

In practice, decision frameworks that work well begin with capacity quantification (including metadata and fragmentation), then segment workloads by correctness sensitivity, run offline and shadow-online evaluations, and finally deploy hybrid policies. Conservative enterprise settings often settle on FP8 as default with selective FP16 fallback for high-stakes tasks. Capacity-constrained environments may adopt mixed schemes such as FP8 keys plus INT4 values, potentially with a high-precision recent-token tail. Adaptive controllers can downshift precision under memory pressure and upshift when drift monitors trigger.
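A hybrid policy of this kind can be expressed as a small state-transition function. Everything in the sketch is hypothetical: the workload class names, the per-class precision floors, and the 0.9 utilization threshold are placeholders for values a team would derive from its own evaluations.

```python
LADDER = ("int4", "fp8", "fp16")   # ordered from least to most precise

# Hypothetical workload classes mapped to the lowest precision each may use.
PRECISION_FLOOR = {
    "high_stakes": "fp16",
    "default": "fp8",
    "tolerant": "int4",
}


def next_precision(current, workload_class, memory_utilization, drift_alarm,
                   pressure_threshold=0.9):
    """Pick the KV precision for the next control interval."""
    floor = LADDER.index(PRECISION_FLOOR.get(workload_class, "fp8"))
    level = max(LADDER.index(current), floor)
    if drift_alarm:
        # Quality signal wins over capacity: step up one level.
        level = min(level + 1, len(LADDER) - 1)
    elif memory_utilization > pressure_threshold:
        # Under memory pressure, step down but never below the class floor.
        level = max(level - 1, floor)
    return LADDER[level]


if __name__ == "__main__":
    print(next_precision("fp8", "tolerant", memory_utilization=0.95, drift_alarm=False))
    print(next_precision("int4", "tolerant", memory_utilization=0.95, drift_alarm=True))
    print(next_precision("fp8", "high_stakes", memory_utilization=0.95, drift_alarm=False))
```

Giving the drift alarm priority over memory pressure is a deliberate choice in this sketch: it keeps a capacity crunch from overriding a quality regression, at the cost of relying on admission control to absorb the extra memory demand.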

Conclusion

KV cache compression has moved from optional optimization to central serving architecture. The memory arithmetic is linear and compelling, but production outcomes are shaped by nonlinear interactions among quantization error, attention dynamics, allocator behavior, and workload semantics. FP8 is frequently the strongest default operating point because it offers substantial capacity improvement with manageable quality risk. INT4 can unlock significantly higher admission and lower OOM pressure, but usually requires mixed-precision safeguards, stronger observability, and stricter workload qualification.

The most reliable pattern in modern deployments is policy-based precision rather than static format selection. Keys and values need not share identical precision; recent tokens need not share the same precision as distant history; and high-risk workloads need not share policy with tolerant ones. Teams that treat KV precision as a runtime control surface—integrated with allocator telemetry, quality canaries, and automatic rollback—typically achieve both higher utilization and more stable user experience under long-context load.
