Abstract

Self-attention has become the core primitive for modern sequence models, yet much of the intuition around queries, keys, and values (Q/K/V) remains abstract. This post provides a view of attention that makes the geometry explicit and connects scaling behavior to head count. We derive the standard scaled dot-product attention, inspect its softmax temperature, analyze variance under random projections, and show how the number of heads changes the distribution of similarity scores and the effective rank of the attention map. We then connect these effects to practical phenomena—token mixing, feature specialization, gradient stability, and compute–quality tradeoffs. The goal is to provide a working mental model: attention is a learned kernel machine whose statistics shift with head scaling, and those shifts alter both what patterns are found and how confidently they are used.

Intuition

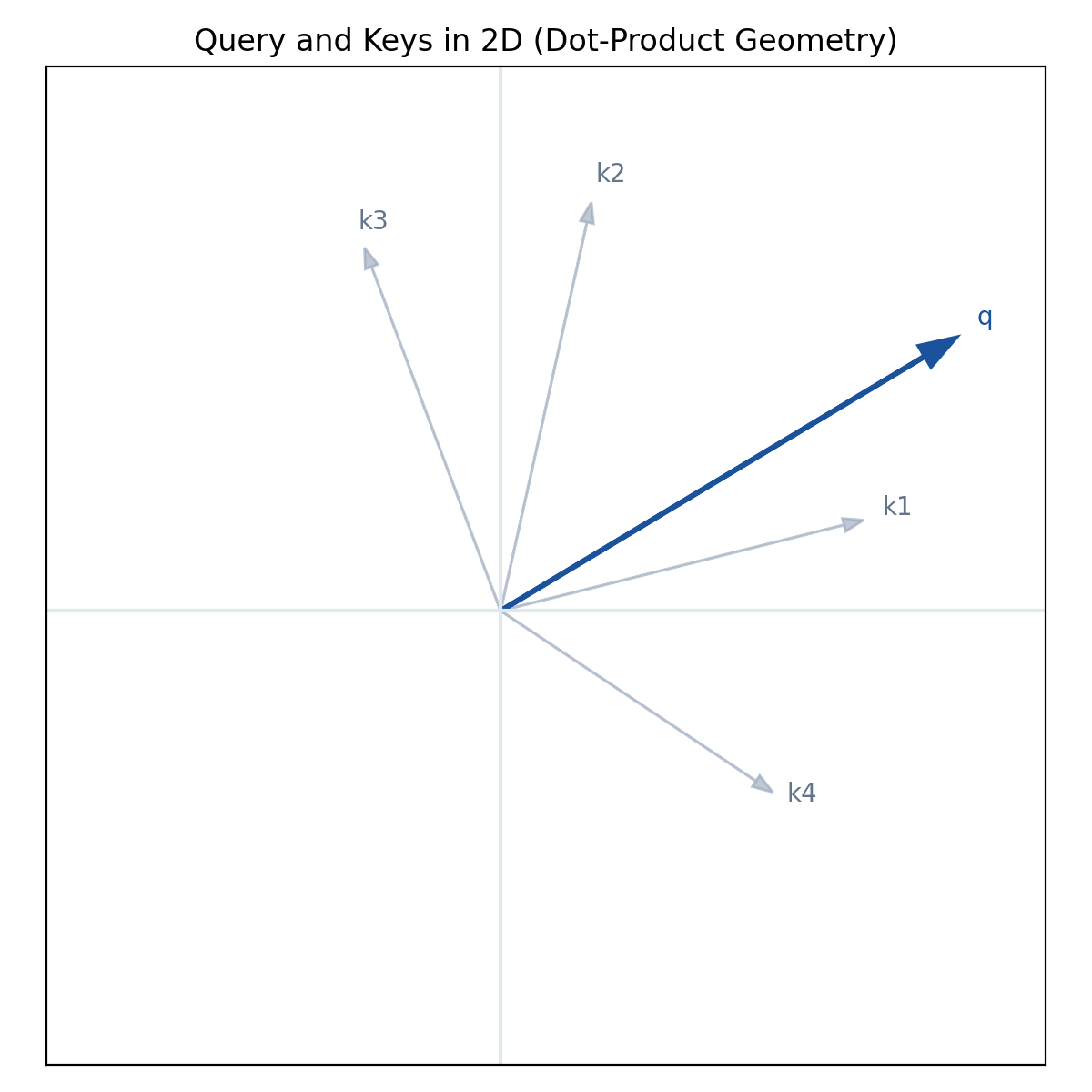

Attention can be seen as learned nearest-neighbor search in a content-based embedding space. For each token position \(i\), the model produces:

- a query \(q_i\) representing “what I’m looking for,”

- a key \(k_i\) representing “what I contain,”

- a value \(v_i\) representing “what I’ll provide.”

The attention output at position \(i\) is a weighted average of the values \(v_j\), where the weights are determined by the similarity between \(q_i\) and \(k_j\). In practice, the similarity is a dot product or a scaled dot product. Because the softmax is exponential, small changes in dot products can cause large shifts in weight allocation. Thus, the statistical distribution of dot products is not an implementation detail: it shapes attention behavior.

Multi-head attention is often introduced as “multiple subspaces.” But a more precise mental model is: each head imposes its own kernel, with its own statistics, on the sequence. As we scale the number of heads, we change not only the subspaces but also the per-head dimensionality, which changes the variance and concentration of dot-product scores. This shifts the sharpness of the attention distribution and alters how “confident” attention becomes.

In short: head scaling changes behavior because it changes the geometry and variance of similarity scores.

Mathematical Formulation

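Consider an input sequence \(X \in \mathbb{R}^{n \times d_{\text{model}}}\) with \(n\) tokens. A single attention head uses linear projections:

\[
Q = X W_Q, \quad K = X W_K, \quad V = X W_V
\]

where \(W_Q, W_K, W_V \in \mathbb{R}^{d_{\text{model}} \times d_k}\). Here \(d_k\) is the per-head dimensionality. The unnormalized attention scores are:

\[
S = Q K^\top
\]

and scaled dot-product attention is:

\[
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^\top}{\sqrt{d_k}}\right) V
\]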
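As a minimal sketch of a single head, the NumPy snippet below computes scaled dot-product attention from random inputs. The sizes, the random projection matrices, and the helper names `softmax` and `scaled_dot_product_attention` are illustrative assumptions, not taken from any particular library.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Single-head attention. Q, K: (n, d_k); V: (n, d_v)."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)      # (n, n) scaled similarity scores
    weights = softmax(scores, axis=-1)   # each row is a distribution over keys
    return weights @ V, weights

rng = np.random.default_rng(0)
n, d_model, d_k = 6, 32, 8  # illustrative sizes (assumption)
X = rng.standard_normal((n, d_model))
# Random projections stand in for learned weights.
W_Q, W_K, W_V = (rng.standard_normal((d_model, d_k)) / np.sqrt(d_model)
                 for _ in range(3))
out, A = scaled_dot_product_attention(X @ W_Q, X @ W_K, X @ W_V)
print(out.shape, A.sum(axis=-1))
```

Each row of `A` sums to 1, so every output row is a convex combination of the value vectors.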

Why the scale?

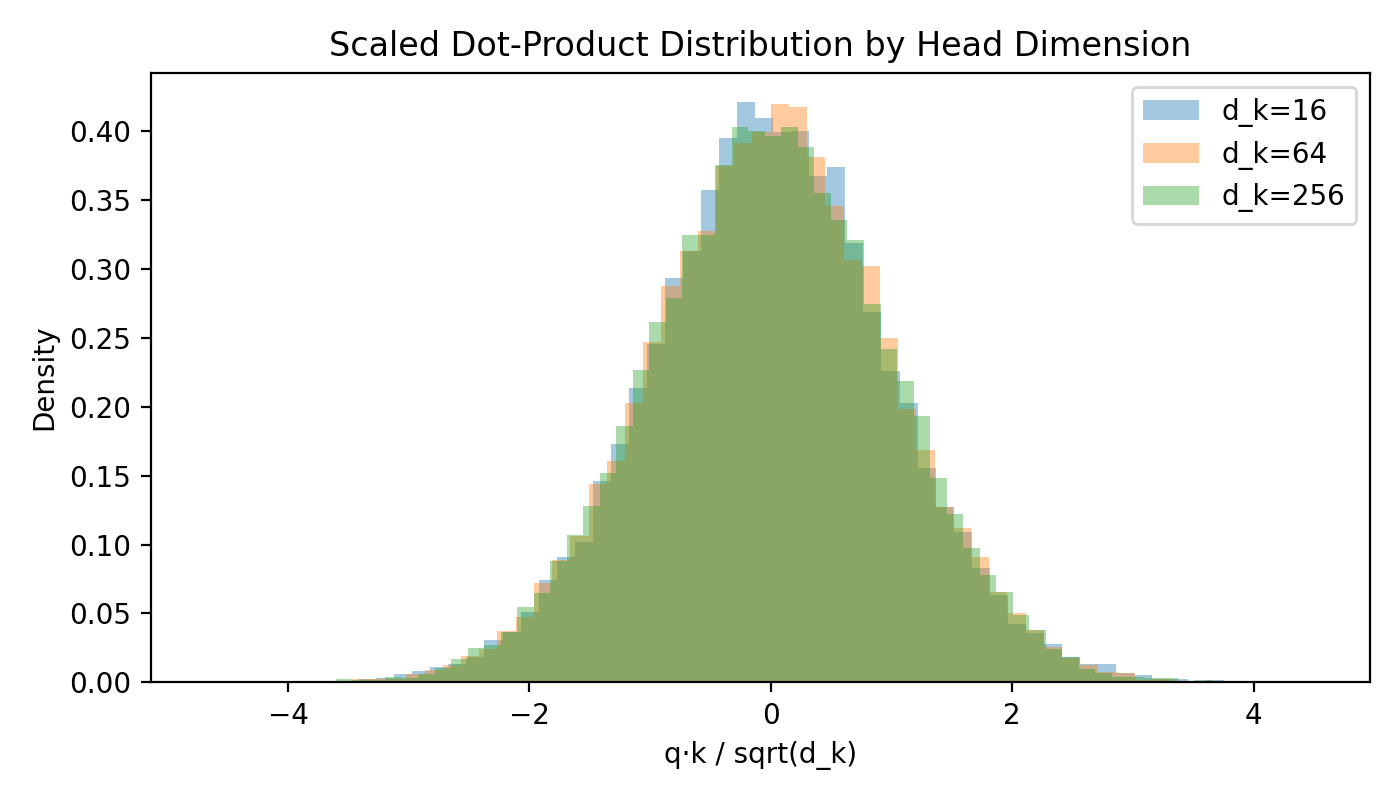

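Assume entries of \(q\) and \(k\) are approximately i.i.d. with zero mean and unit variance. Then for a single pair of tokens:

\[
q \cdot k = \sum_{\ell=1}^{d_k} q_\ell k_\ell
\]

If \(q_\ell\) and \(k_\ell\) are independent with variance 1, then:

\[
\mathbb{E}[q \cdot k] = 0, \qquad \mathrm{Var}(q \cdot k) = d_k
\]

Thus the dot products scale with \(\sqrt{d_k}\). Without scaling, larger \(d_k\) yields larger magnitude scores and a sharper softmax, potentially saturating and hurting gradient flow. Scaling by \(1/\sqrt{d_k}\) normalizes the variance:

\[
\mathrm{Var}\!\left(\frac{q \cdot k}{\sqrt{d_k}}\right) = 1
\]

So the “temperature” of attention depends on \(d_k\).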
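The variance argument can be checked numerically. The sketch below assumes independent standard Gaussian components (one way to satisfy the zero-mean, unit-variance assumption) and estimates the variance of raw and scaled dot products; the function name and sample sizes are illustrative.

```python
import numpy as np

def dot_product_variance(d_k, num_pairs=20000, seed=0):
    """Empirical variance of q.k and of q.k / sqrt(d_k) for random q, k."""
    rng = np.random.default_rng(seed)
    q = rng.standard_normal((num_pairs, d_k))
    k = rng.standard_normal((num_pairs, d_k))
    dots = np.sum(q * k, axis=1)  # one dot product per sampled pair
    return dots.var(), (dots / np.sqrt(d_k)).var()

for d_k in (16, 64, 256):
    raw, scaled = dot_product_variance(d_k)
    print(f"d_k={d_k:4d}  Var(q.k)={raw:8.2f}  Var(q.k/sqrt(d_k))={scaled:.3f}")
```

The raw variance should track \(d_k\), while the scaled variance should stay close to 1 for every \(d_k\).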

Multi-head attention

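Multi-head attention splits the model dimension into \(h\) heads:

\[
d_k = \frac{d_{\text{model}}}{h}
\]

Each head produces:

\[
\mathrm{head}_m = \mathrm{Attention}\!\left(X W_Q^{(m)},\; X W_K^{(m)},\; X W_V^{(m)}\right), \quad m = 1, \dots, h
\]

and the outputs are concatenated and projected:

\[
\mathrm{MultiHead}(X) = \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W_O
\]

Scaling the number of heads implies shrinking per-head dimension \(d_k\) if \(d_{\text{model}}\) is fixed. That is the core lever that changes behavior.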
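A hedged sketch of the split, attend, concatenate, project pipeline follows. It assumes the common convention of one \(d_{\text{model}} \times d_{\text{model}}\) projection per role that is then reshaped into \(h\) heads; the weights are random stand-ins and all names are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def multi_head_attention(X, W_Q, W_K, W_V, W_O, h):
    """X: (n, d_model); every W_*: (d_model, d_model); h must divide d_model."""
    n, d_model = X.shape
    assert d_model % h == 0, "h must divide d_model"
    d_k = d_model // h

    def split_heads(M):
        # (n, d_model) -> (h, n, d_k)
        return M.reshape(n, h, d_k).transpose(1, 0, 2)

    Q, K, V = (split_heads(X @ W) for W in (W_Q, W_K, W_V))
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_k)  # (h, n, n)
    A = softmax(scores, axis=-1)
    heads = A @ V                                      # (h, n, d_k)
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)
    return concat @ W_O, A

rng = np.random.default_rng(0)
n, d_model = 5, 16  # illustrative sizes (assumption)
X = rng.standard_normal((n, d_model))
W_Q, W_K, W_V, W_O = (rng.standard_normal((d_model, d_model)) / np.sqrt(d_model)
                      for _ in range(4))
for h in (1, 4, 8):
    out, A = multi_head_attention(X, W_Q, W_K, W_V, W_O, h)
    print(f"h={h}  d_k={d_model // h}  out={out.shape}  attention maps={A.shape}")
```

The output shape is the same for every `h`; only the number of attention maps and the per-head width `d_k` change.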

Head Scaling Effects

Head scaling typically means increasing \(h\) while keeping \(d_{\text{model}}\) fixed. This reduces \(d_k = d_{\text{model}} / h\), which has several consequences.

1) Variance of dot products and softmax sharpness

If \(d_k\) shrinks, the variance of unscaled dot products shrinks:

\[
\mathrm{Var}(q \cdot k) = d_k = \frac{d_{\text{model}}}{h}
\]

After scaling by \(1/\sqrt{d_k}\), the variance is normalized to 1, but the distributional shape still changes. For small \(d_k\), dot products are sums of fewer terms and thus exhibit higher kurtosis and more variability in tail events (non-Gaussianity). Empirically, this leads to either:

- more peaky distributions if a few dimensions align strongly, or

- more uniform attention if projections are weak.

These two effects appear contradictory, but both can happen depending on the learned projections. In practice, smaller \(d_k\) yields higher variance across heads and more “specialized” behavior.
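The non-Gaussianity claim can be illustrated with a small simulation. Under the assumption of independent standard Gaussian components, each product \(q_\ell k_\ell\) has excess kurtosis 6, so the scaled dot product has excess kurtosis \(6 / d_k\). The sketch below estimates it empirically; the helper names and sample size are illustrative, and trained projections need not follow this idealized model.

```python
import numpy as np

def excess_kurtosis(x):
    """Sample excess kurtosis (0 for a Gaussian)."""
    z = (x - x.mean()) / x.std()
    return float(np.mean(z ** 4) - 3.0)

def scaled_dot_products(d_k, num_pairs=100_000, seed=0):
    """Samples of q.k / sqrt(d_k) for independent standard Gaussian q, k."""
    rng = np.random.default_rng(seed)
    q = rng.standard_normal((num_pairs, d_k))
    k = rng.standard_normal((num_pairs, d_k))
    return np.sum(q * k, axis=1) / np.sqrt(d_k)

for d_k in (2, 8, 32):
    est = excess_kurtosis(scaled_dot_products(d_k))
    print(f"d_k={d_k:2d}  excess kurtosis: estimated={est:.2f}  theory={6 / d_k:.2f}")
```

All three settings have unit variance after scaling, yet the tails are visibly heavier at small `d_k`.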

2) Effective rank and expressivity

The attention matrix for a head is:

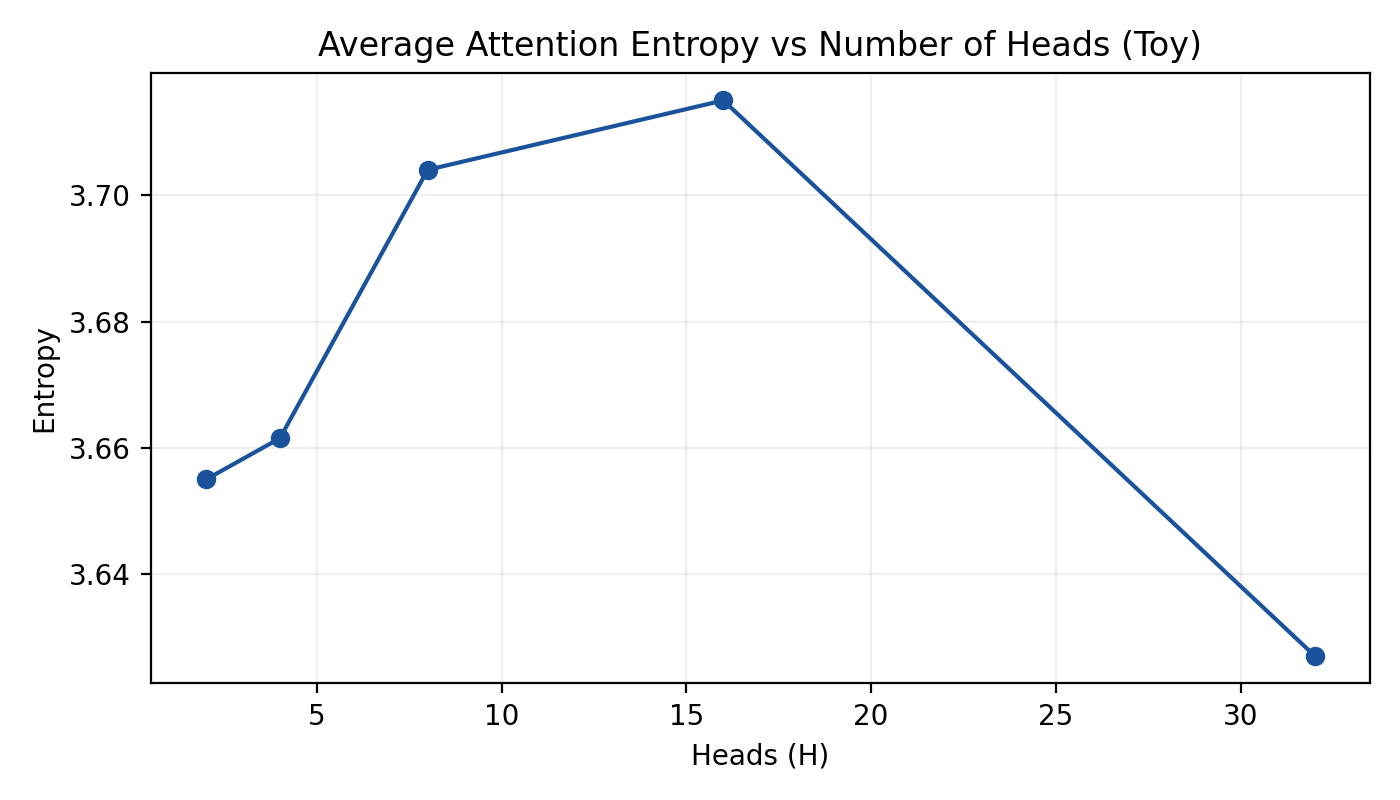

Even if is full rank, the softmax tends to produce low-rank-like structure because it concentrates mass. As increases, each head produces a smaller projection but there are more attention matrices. The combined output can be seen as a mixture of low-rank kernels. Increasing heads increases the mixture count while decreasing each kernel’s embedding dimension.
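One way to make “low-rank-like” measurable is an entropy-based effective rank: the exponential of the Shannon entropy of the normalized singular values. This particular definition is an assumption chosen for illustration, not one the post specifies. The sketch applies it to attention maps built from random projections at several head counts.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def effective_rank(M):
    """exp(entropy) of the normalized singular values of M."""
    s = np.linalg.svd(M, compute_uv=False)
    p = s / s.sum()
    p = p[p > 0]  # drop exact zeros so the log is defined
    return float(np.exp(-np.sum(p * np.log(p))))

rng = np.random.default_rng(0)
n, d_model = 64, 64  # illustrative sizes (assumption)
X = rng.standard_normal((n, d_model))
for h in (1, 4, 16):
    d_k = d_model // h
    # Random projections for one representative head of width d_k.
    W_Q = rng.standard_normal((d_model, d_k)) / np.sqrt(d_model)
    W_K = rng.standard_normal((d_model, d_k)) / np.sqrt(d_model)
    S = (X @ W_Q) @ (X @ W_K).T
    A = softmax(S / np.sqrt(d_k))
    print(f"h={h:2d}  d_k={d_k:2d}  rank(S)={np.linalg.matrix_rank(S):2d}  "
          f"effective rank(A)={effective_rank(A):.1f}")
```

As sanity checks, a uniform attention matrix has effective rank 1 and an identity matrix of size \(n\) has effective rank \(n\). Values for trained models will differ from this random-weight toy.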

This mirrors the tradeoff in mixture models: more components, less capacity per component.

3) Geometric interpretation

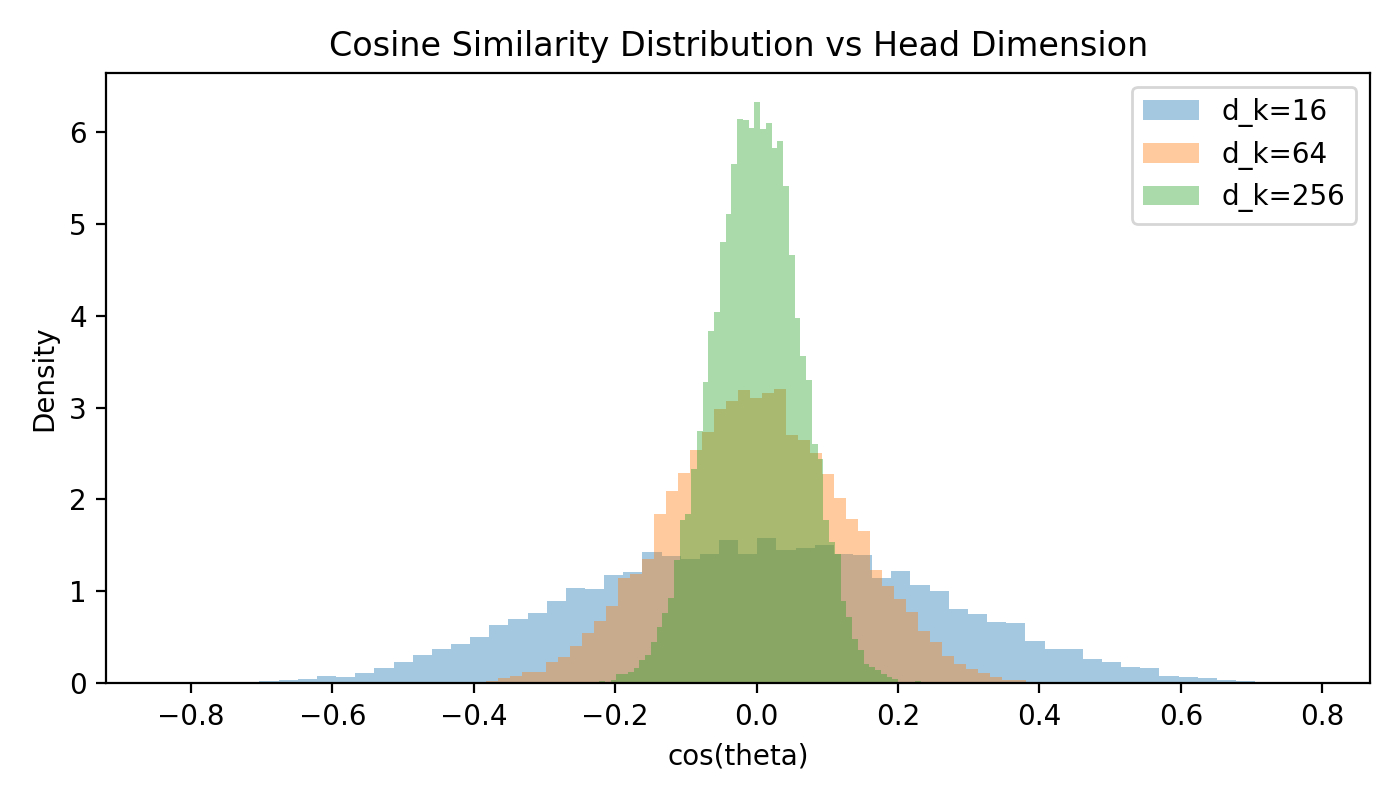

Consider the normalized similarity:

\[
\cos \theta_{ij} = \frac{q_i \cdot k_j}{\lVert q_i \rVert \, \lVert k_j \rVert}
\]

When \(d_k\) is large, random vectors concentrate around orthogonality, and cosine similarities are tightly distributed around 0. When \(d_k\) is small, cosine similarities are more spread, making it easier for random alignments to produce high similarity. This affects the baseline probability of attention to unrelated tokens.
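The concentration effect is easy to see in simulation. For independent isotropic Gaussian vectors (an assumption standing in for unrelated tokens), the cosine similarity has standard deviation \(1/\sqrt{d_k}\). The sketch below also reports how often a random pair exceeds an arbitrary threshold of 0.5.

```python
import numpy as np

def random_cosines(d_k, num_pairs=20000, seed=0):
    """Cosine similarities between independent Gaussian vectors in R^{d_k}."""
    rng = np.random.default_rng(seed)
    q = rng.standard_normal((num_pairs, d_k))
    k = rng.standard_normal((num_pairs, d_k))
    norms = np.linalg.norm(q, axis=1) * np.linalg.norm(k, axis=1)
    return np.sum(q * k, axis=1) / norms

for d_k in (4, 16, 64, 256):
    c = random_cosines(d_k)
    print(f"d_k={d_k:3d}  std={c.std():.3f}  1/sqrt(d_k)={d_k ** -0.5:.3f}  "
          f"P(|cos| > 0.5)={np.mean(np.abs(c) > 0.5):.4f}")
```

At small `d_k` a sizable fraction of unrelated pairs look strongly aligned by chance, which is the noise floor a head has to overcome.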

Thus, head scaling changes the signal-to-noise ratio of similarity measures.

4) Gradient and optimization dynamics

Attention output is:

\[
O = A V = \mathrm{softmax}\!\left(\frac{Q K^\top}{\sqrt{d_k}}\right) V
\]

The gradient depends strongly on softmax sharpness. If attention is too sharp, gradients vanish for non-selected tokens; if too flat, gradients are diffuse and weak. Smaller \(d_k\) (with more heads) can shift heads into either of these regimes depending on learned scale. In practice, models often learn per-head scaling or use techniques like RMSNorm/LayerNorm to stabilize.
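The saturation effect can be made concrete through the softmax Jacobian, \(\partial p_i / \partial s_j = p_i(\delta_{ij} - p_j)\), for one row of scores \(s\). The sketch below multiplies a fixed, made-up score vector by an inverse temperature `beta` (a hypothetical knob standing in for the learned scale) and reports the Jacobian norm.

```python
import numpy as np

def softmax(x):
    x = x - np.max(x)
    e = np.exp(x)
    return e / e.sum()

def softmax_jacobian(p):
    """Jacobian of softmax w.r.t. its input scores, given the output p."""
    return np.diag(p) - np.outer(p, p)

logits = np.array([2.0, 1.0, 0.5, 0.0, -1.0])  # illustrative scores (assumption)
for beta in (0.0, 1.0, 5.0, 50.0):
    p = softmax(beta * logits)
    J = softmax_jacobian(p)
    print(f"beta={beta:5.1f}  max p={p.max():.3f}  ||J||_F={np.linalg.norm(J):.2e}")
```

As the distribution approaches one-hot, the Jacobian collapses toward zero, so almost no gradient reaches the scores through that row.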

5) Why “scaling heads” changes behavior

Combining the above:

- More heads → smaller \(d_k\) → more variance in similarity and specialization

- Fewer heads → larger \(d_k\) → more stable, averaged similarity distributions

Hence, scaling head count changes what attention “looks like” (more diverse patterns) and how it behaves during training (different gradient regimes).

Practical Implications

1) Specialization vs. generalization

Empirically, increasing head count tends to produce more specialized heads: some attend to syntax, some to positional patterns, others to semantic matching. But this comes at the cost of reduced per-head capacity. If \(d_k\) becomes too small, each head may be underpowered for capturing complex patterns. This explains why simply increasing heads does not always improve performance.

2) Head dimension as temperature control

Although the \(1/\sqrt{d_k}\) scaling is fixed, the learned projections can amplify or suppress the effective temperature. Yet, per-head dimension still matters because it sets the range of achievable dot products. Smaller heads can lead to brittle or noisy attention maps. If you observe overly diffuse or overly spiky attention, consider adjusting \(h\) or \(d_k\).
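A simple diagnostic for “diffuse” versus “spiky” attention is the mean row entropy of the attention matrix. In the sketch below, a hypothetical multiplier `gain` stands in for the norm of the learned projections; the data are random, so the numbers are only illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def mean_row_entropy(A):
    """Average Shannon entropy (nats) of the rows of an attention matrix."""
    return float(-np.sum(A * np.log(A + 1e-12), axis=-1).mean())

rng = np.random.default_rng(0)
n, d_k = 32, 16  # illustrative sizes (assumption)
Q = rng.standard_normal((n, d_k))
K = rng.standard_normal((n, d_k))
gains = (0.5, 1.0, 2.0, 4.0)  # hypothetical effective-temperature multipliers
entropies = [mean_row_entropy(softmax(g * (Q @ K.T) / np.sqrt(d_k)))
             for g in gains]
for g, H in zip(gains, entropies):
    print(f"gain={g:3.1f}  mean entropy={H:.3f}  (uniform = {np.log(n):.3f})")
```

Entropy near \(\log n\) indicates near-uniform attention and entropy near 0 indicates near one-hot attention. Tracking this per head is a cheap way to spot heads in either extreme.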

3) Distributional effects in large models

In large-scale models, attention maps are often analyzed in aggregate. But head scaling alters the distribution of attention entropy across heads. For instance, with many heads, entropy variance increases: some heads become extremely sparse while others are diffuse. This can be good (diversity) or bad (instability), depending on the task and training regime.

4) Compute tradeoffs

Computational cost of attention is:

\[
O(n^2 \cdot d_{\text{model}})
\]

But per-head attention uses matrices of size \(n \times d_k\). Increasing head count increases the number of matrix multiplications but keeps total dimensions constant. In practice, kernel fusion and GPU efficiency often favor a moderate number of heads rather than extreme head counts.
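A back-of-the-envelope count makes the tradeoff explicit. The sketch below counts only the two per-head matrix products (\(Q K^\top\) and \(A V\)), at 2 floating-point operations per multiply-add, and ignores the input and output projections. The function name and the sizes are illustrative assumptions.

```python
def attention_cost(n, d_model, h):
    """Rough cost of the attention core for h heads of width d_model // h."""
    assert d_model % h == 0, "h must divide d_model"
    d_k = d_model // h
    score_flops = h * 2 * n * n * d_k  # Q K^T for every head
    mix_flops = h * 2 * n * n * d_k    # A V for every head
    return {
        "d_k": d_k,
        "matmul_flops": score_flops + mix_flops,
        "softmax_entries": h * n * n,  # one n x n attention map per head
    }

for h in (1, 8, 64):
    print(h, attention_cost(n=128, d_model=512, h=h))
```

The matrix-multiply FLOPs are independent of `h`, but the number of softmax entries (and of separate small matrix products) grows linearly with `h`, which is one reason very high head counts can be less efficient in practice.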

5) Interpreting attention maps

If you visualize attention in a model with many heads, you’ll see many seemingly “noisy” heads. This is not necessarily failure; it is a consequence of smaller \(d_k\) and higher variance in similarity. When evaluating head behavior, consider both head count and head dimension.

Visual Aids

Vector geometry (query vs. keys)

Scaled dot-product distribution by head dimension

Cosine similarity distribution vs. head dimension

Average attention entropy vs number of heads (toy)

Conclusion

Attention is not just a convenient architectural trick—it is a learned kernel whose statistics govern model behavior. Queries, keys, and values define the geometry, while scaling and head count determine the distribution of similarity scores. As head count increases, per-head dimension shrinks, altering dot-product variance, attention sharpness, specialization, and gradient dynamics. This is why scaling heads changes behavior.

For practitioners, the takeaway is clear: head count and head dimension are not cosmetic hyperparameters. They shape the attention landscape, impacting both interpretability and performance. Understanding these effects allows more informed scaling decisions and better diagnosis of model behavior in practice.


---